Unlocking Value: Mastering Low-Cost Big Data Management for Modern Enterprises

In today’s data-driven landscape, the ability to manage vast quantities of information efficiently and economically is no longer a luxury but a strategic imperative. Organizations are constantly seeking robust strategies for Low-Cost Big Data Management to extract valuable insights without incurring prohibitive operational expenses. This deep dive explores how businesses can effectively control costs while harnessing the full potential of their data, ensuring competitive agility and fostering continuous innovation in an ever-expanding digital universe.

Our analysis focuses on leveraging open-source technologies, optimized cloud architectures, and intelligent data lifecycle management to achieve significant cost savings. We will delve into how a data lake/lakehouse approach, underpinned by serverless computing and cloud object storage, provides a scalable yet affordable foundation for modern data initiatives, including advanced AI and ML workloads.

Introduction: The Strategic Imperative of Affordable Data Solutions

The exponential growth of data across all industries presents both immense opportunities and significant challenges. While the promise of big data lies in its capacity to drive business intelligence, personalized customer experiences, and operational efficiencies, the costs associated with storing, processing, and analyzing these massive datasets can quickly become unsustainable. This has made Low-Cost Big Data Management a critical focus for CIOs and data leaders alike. The objective is clear: unlock the full potential of enterprise data for strategic decision-making and innovation, all while maintaining stringent control over expenditure. Achieving this balance requires a nuanced understanding of available technologies, architectural patterns, and operational best practices.

Modern enterprises are navigating a complex ecosystem where data volumes are measured in petabytes and even exabytes, demanding scalable yet inherently economical solutions. The pursuit of affordable data solutions isn’t merely about cutting corners; it’s about making intelligent, strategic investments that yield maximum return on investment. This article will dissect the key components and methodologies that empower organizations to implement effective, low-cost big data management strategies, emphasizing approaches that scale with demand without escalating costs disproportionately.

Core Breakdown: Architecting for Economical Data at Scale

Achieving Low-Cost Big Data Management is fundamentally about making informed, strategic decisions across technology stacks, infrastructure choices, and operational processes. The modern paradigm emphasizes flexible, scalable, and open architectures that minimize proprietary vendor lock-in and optimize resource consumption.

Platform Category: Data Lake / Data Lakehouse Foundations

The foundation of a low-cost big data strategy often lies in adopting a Data Lake or Data Lakehouse architecture. Unlike traditional data warehouses with their rigid schemas and high licensing costs, data lakes leverage inexpensive object storage (like Amazon S3, Azure Blob Storage, or Google Cloud Storage) to store raw, unstructured, semi-structured, and structured data at massive scale. A data lakehouse extends this concept by adding data warehousing capabilities, such as ACID transactions, schema enforcement, and improved query performance, directly on the data lake, often utilizing open-source formats like Apache Iceberg or Delta Lake. This approach offers the flexibility and cost-efficiency of a data lake combined with the reliability and performance of a data warehouse, all built on commodity cloud storage.
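To make "schema enforcement" concrete, here is a toy sketch in plain Python. It is not how Apache Iceberg or Delta Lake implement the feature; the schema, column names, and the `append_batch` helper are all hypothetical, and the "table" is just an in-memory list. It only illustrates the idea that a write is checked against a declared schema and rejected as a whole if any record does not conform.

```python
from typing import Any

# Hypothetical table schema: column name -> required Python type.
EXPECTED_SCHEMA: dict[str, type] = {
    "event_id": str,
    "user_id": int,
    "amount": float,
}


def validate_record(record: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return a list of schema violations for one record (empty if valid)."""
    errors = []
    for column, expected_type in schema.items():
        if column not in record:
            errors.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            actual = type(record[column]).__name__
            errors.append(f"{column}: expected {expected_type.__name__}, got {actual}")
    for column in record:
        if column not in schema:
            errors.append(f"unexpected column: {column}")
    return errors


def append_batch(
    table: list[dict[str, Any]],
    batch: list[dict[str, Any]],
    schema: dict[str, type],
) -> None:
    """Append the whole batch or nothing: reject it if any record is invalid."""
    problems = {
        index: errors
        for index, record in enumerate(batch)
        if (errors := validate_record(record, schema))
    }
    if problems:
        raise ValueError(f"batch rejected: {problems}")
    table.extend(batch)
```

Rejecting the whole batch, rather than writing the valid rows and skipping the rest, mirrors the all-or-nothing behavior that ACID transactions are meant to give readers of the table.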

Core Technology/Architecture: Open Source and Serverless on Cloud Object Storage

The bedrock of low-cost solutions lies in open-source technologies and serverless paradigms.

Leveraging Open-Source Power:

- Hadoop and Spark Foundations: Robust frameworks like Apache Hadoop and Apache Spark remain cornerstones for processing and storing vast datasets. Hadoop’s Distributed File System (HDFS) and YARN for resource management, coupled with Spark’s in-memory processing capabilities, offer powerful, license-free alternatives to commercial solutions. These technologies, often deployed on cloud compute instances or as managed services, provide the computational backbone for complex analytics without software licensing fees, although the underlying compute and any managed-service charges still apply.

- PostgreSQL and NoSQL Alternatives: For structured and semi-structured data, open-source databases like PostgreSQL, MongoDB (Community Edition), and Apache Cassandra provide flexible, scalable, and cost-effective options. These databases support various data models and use cases, allowing organizations to select the most appropriate and economical solution for specific data types rather than relying on a single, expensive proprietary database.

- Community-Driven Innovation: The active communities behind open-source tools provide continuous improvement, security patches, and a wealth of shared knowledge, which can reduce development and support costs for teams that have the skills to use them.

Cloud Optimization Strategies:

- Right-Sizing Compute Resources: A crucial aspect of cost control in the cloud is meticulously matching virtual machine instances or container capacities to actual workload demands. Over-provisioning compute resources leads to unnecessary expenditure. Tools for monitoring and auto-scaling ensure that resources dynamically adjust to demand, minimizing idle capacity costs.

- Smart Storage Tiers: Cloud object storage offers various tiers (e.g., standard, infrequent access, archive). Moving infrequently accessed or archival data to colder tiers, by hand or through intelligent tiering policies, significantly reduces storage bills. Colder tiers usually add retrieval fees and, for archive classes, retrieval delays, so data that critical workloads read regularly should stay in a standard tier.

- Serverless Data Processing: Event-driven and serverless architectures, such as AWS Lambda, Azure Functions, or Google Cloud Functions, minimize idle resource costs by executing code only when triggered and scaling automatically with load. For big data processing, serverless query services like Amazon Athena, Google BigQuery, and the Azure Synapse serverless SQL pool can query data held in object storage and, under their on-demand pricing, charge by the volume of data scanned, which is highly economical for ad-hoc queries and bursty workloads.
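Because pay-per-scan services bill on bytes read, the way data is laid out matters as much as the service chosen. The following is a rough sketch of that arithmetic in Python. The price per terabyte is a made-up placeholder, not any provider's actual rate, and the estimate assumes data is spread evenly across partitions and columns, which real tables rarely are.

```python
TB = 1024 ** 4  # bytes in one tebibyte

# Hypothetical on-demand price per TB scanned; check your provider's price list.
PRICE_PER_TB_SCANNED = 5.0


def estimate_bytes_scanned(
    total_bytes: float,
    partitions_total: int,
    partitions_read: int,
    columns_total: int,
    columns_read: int,
) -> float:
    """Estimate bytes read after partition pruning and column projection.

    Assumes an even spread of data across partitions and columns.
    """
    partition_fraction = partitions_read / partitions_total
    column_fraction = columns_read / columns_total
    return total_bytes * partition_fraction * column_fraction


def scan_cost(bytes_scanned: float, price_per_tb: float = PRICE_PER_TB_SCANNED) -> float:
    """Cost of one query under a simple pay-per-byte-scanned model."""
    return bytes_scanned / TB * price_per_tb


# A 10 TB table with 365 daily partitions and 50 columns.
full_scan = scan_cost(estimate_bytes_scanned(10 * TB, 365, 365, 50, 50))
# The same table, reading one week of data and 5 of the 50 columns.
pruned_scan = scan_cost(estimate_bytes_scanned(10 * TB, 365, 7, 50, 5))
print(f"full scan: {full_scan:.2f}, pruned scan: {pruned_scan:.4f}")
```

Under these assumptions, the pruned query reads roughly 0.2% of the table, which is why partitioned, columnar layouts tend to pair well with scan-based pricing.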

Key Data Governance Feature: Cloud IAM and Centralized Permission Services

Effective data governance, particularly access control, is vital for security and compliance but can also impact costs through operational overhead. Leveraging native cloud Identity and Access Management (IAM) services (e.g., AWS IAM, Microsoft Entra ID, formerly Azure AD, and Google Cloud IAM) combined with centralized permission services like AWS Lake Formation simplifies and automates data access management across the data lake. This minimizes the manual effort required to manage permissions, reduces the risk of misconfigurations, and ensures that sensitive data is only accessible to authorized users and applications, all without requiring expensive third-party governance tools.
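As an illustration of least-privilege access on object storage, the sketch below builds an AWS IAM policy document that grants read-only access to a single prefix of a bucket. The bucket name, prefix, and the `read_only_prefix_policy` helper are hypothetical, and this covers plain IAM only; a Lake Formation setup would manage table-level permissions through its own grants instead.

```python
import json


def read_only_prefix_policy(bucket: str, prefix: str) -> dict:
    """Build an IAM policy allowing list and read access to one S3 prefix."""
    prefix = prefix.strip("/")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing is a bucket-level action, so it is restricted
                # to the prefix with a condition on s3:prefix.
                "Sid": "ListOnlyThisPrefix",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}/*"]}},
            },
            {
                # Reading is an object-level action, scoped by the object ARN.
                "Sid": "ReadObjectsUnderPrefix",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": [f"arn:aws:s3:::{bucket}/{prefix}/*"],
            },
        ],
    }


# Hypothetical bucket and prefix, for illustration only.
print(json.dumps(read_only_prefix_policy("example-data-lake", "curated/sales"), indent=2))
```

Generating policies from a small function like this, rather than editing JSON by hand, is one way to keep permissions consistent across many datasets and reduce the misconfiguration risk mentioned above.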

Primary AI/ML Integration: Integration with Cloud ML Platforms and Open Source Libraries

The ability to integrate seamlessly with AI/ML platforms is critical for extracting advanced insights. Low-Cost Big Data Management systems facilitate this by:

- Cloud ML Platforms: Providing direct integrations with managed cloud ML platforms like Amazon SageMaker, Google Vertex AI, and Azure Machine Learning. These platforms offer managed services for model development, training, and deployment, reducing the need for extensive in-house MLOps expertise and expensive dedicated GPU infrastructure.

- Open Source Libraries: Supporting open-source ML libraries (e.g., Spark MLlib, TensorFlow, PyTorch) that can run efficiently on cloud compute resources. This allows data scientists to leverage a vast ecosystem of tools and algorithms without incurring licensing costs for proprietary ML platforms. The data lakehouse structure ensures data quality and accessibility for these ML workloads.

Challenges/Barriers to Adoption in Low-Cost Big Data Management

While the promise of low-cost big data management is compelling, several challenges can impede successful adoption and implementation:

- Complexity of Open Source: While free, open-source technologies often come with a steeper learning curve and require more technical expertise for setup, configuration, and maintenance compared to commercial off-the-shelf solutions. This can translate into higher staffing costs or the need for specialized training.

- Data Governance Overhead: Implementing comprehensive data governance, including data quality, lineage, and compliance, across diverse and often raw data in a data lake can be complex and labor-intensive without robust automated tools, potentially offsetting some cost savings.

- Vendor Lock-in (Even in Cloud): While open-source aims to avoid vendor lock-in, heavy reliance on specific cloud provider services (even serverless or managed open-source offerings) can create a degree of stickiness, making migration to another cloud or on-premises environment challenging and costly.

- Skill Gap: There’s a persistent shortage of skilled professionals proficient in big data technologies, cloud architecture, and MLOps. This can make it difficult to build and maintain efficient low-cost big data platforms, leading to potential project delays and increased recruitment costs.

- Cost Sprawl and Optimization Drift: Without continuous monitoring and proactive optimization, cloud costs can quickly spiral out of control. It’s easy for resources to be over-provisioned, or for outdated data to remain in expensive storage tiers, eroding the “low-cost” advantage.
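The last point, stale data lingering in expensive tiers, lends itself to a simple periodic audit. The sketch below is a minimal, provider-neutral version in Python. The tier names, idle-day thresholds, and the `StoredObject` record are assumptions made for illustration; in practice the inventory would come from the cloud provider's storage inventory or access logs.

```python
from dataclasses import dataclass
from datetime import date

# Tiers ordered from most to least expensive to store (hypothetical names).
TIER_ORDER = ["standard", "infrequent_access", "archive"]

# Hypothetical policy: minimum idle days before an object belongs in a tier.
TIER_RULES = [(365, "archive"), (30, "infrequent_access"), (0, "standard")]


@dataclass
class StoredObject:
    key: str
    size_gb: float
    tier: str
    last_accessed: date


def recommended_tier(obj: StoredObject, today: date) -> str:
    """Pick the coldest tier whose idle-day threshold the object meets."""
    idle_days = (today - obj.last_accessed).days
    for min_idle_days, tier in TIER_RULES:
        if idle_days >= min_idle_days:
            return tier
    return "standard"


def find_drift(objects: list[StoredObject], today: date) -> list[tuple[str, str, str]]:
    """Return (key, current tier, recommended tier) for objects stored too hot."""
    drifted = []
    for obj in objects:
        target = recommended_tier(obj, today)
        if TIER_ORDER.index(target) > TIER_ORDER.index(obj.tier):
            drifted.append((obj.key, obj.tier, target))
    return drifted
```

The audit only flags objects that could move to a colder tier. It deliberately does not suggest warming data up, since that decision depends on retrieval fees and latency requirements that this sketch does not model.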

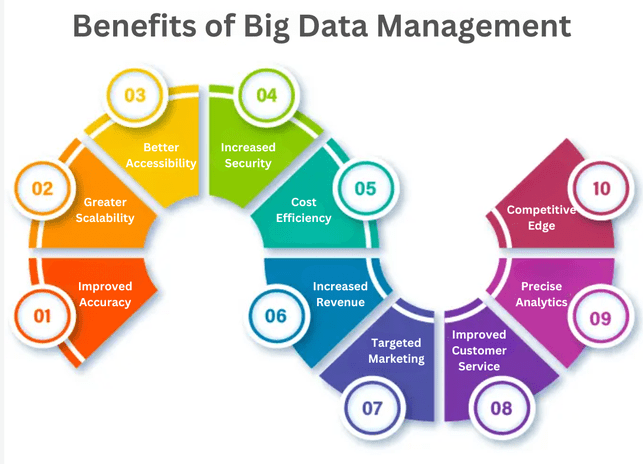

Business Value and ROI of Low-Cost Big Data Management

Despite the challenges, the return on investment from effective Low-Cost Big Data Management strategies is substantial and multifaceted:

- Faster Time to Insight: By reducing infrastructure procurement and deployment times, and facilitating easier data access, businesses can analyze data and derive insights much more rapidly. This agility translates directly into faster decision-making and quicker responses to market changes.

- Reduced Total Cost of Ownership (TCO): Leveraging open-source software, scalable cloud object storage, and serverless compute significantly lowers infrastructure, licensing, and operational costs. Organizations pay only for what they use, eliminating large upfront capital expenditures.

- Enhanced Scalability and Flexibility: Cloud-native, open-source architectures provide unparalleled scalability to handle fluctuating data volumes and processing demands without requiring expensive hardware upgrades or over-provisioning.

- Improved Data Quality for AI/ML: A well-managed data lakehouse provides a unified, high-quality data source for AI and machine learning initiatives, leading to more accurate models and better predictive capabilities, ultimately driving innovation and competitive advantage.

- Greater Innovation: Lower costs free up budget and resources that can be reallocated to experimentation, research and development, and exploring new data-driven products and services, fostering a culture of innovation.

- Competitive Advantage: Businesses that can efficiently manage and derive value from their data at a lower cost gain a significant edge, enabling them to offer more competitive pricing, better products, and superior customer experiences.

Comparative Insight: Low-Cost Big Data Management vs. Traditional Models

To truly appreciate the value proposition of modern Low-Cost Big Data Management, it’s essential to compare it against two common alternatives: dedicated Cloud Data Warehouses and On-Premises Hadoop Clusters.

Against Cloud Data Warehouses (e.g., Snowflake, BigQuery):

While cloud data warehouses offer convenience and powerful SQL analytics, their cost can be significantly higher, especially for large volumes of raw, unstructured data or complex transformations. Data usually has to be loaded into the warehouse’s managed storage before it can be queried at full performance, and that storage can cost more per gigabyte than raw object storage. The strength of low-cost data lake/lakehouse solutions lies in their ability to keep all data types in inexpensive object storage in open formats, with compute decoupled and often serverless, so compute charges accrue only while queries run. This makes them ideal for exploratory analytics, data science workloads, and storing vast amounts of historical data, where the full power of a dedicated data warehouse might be overkill or prohibitively expensive.

Against On-Premises Hadoop Clusters:

On-premises Hadoop clusters, while utilizing open-source software, come with substantial upfront capital expenditure for hardware, ongoing operational costs for power, cooling, and maintenance, and the complexity of managing a large distributed system. Scaling an on-premises cluster is a time-consuming and expensive endeavor. In contrast, cloud-based low-cost big data management leverages the elasticity and pay-as-you-go model of cloud infrastructure. This replaces upfront CapEx with usage-based spending, reduces OpEx by offloading infrastructure management to the cloud provider, and lets capacity scale on demand, which can significantly reduce the total cost of ownership and operational burden for workloads that do not run at full utilization around the clock. The shift to serverless and managed services further enhances this cost advantage by abstracting away most infrastructure management.
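The comparison comes down to simple arithmetic, sketched below in Python. Every figure is a hypothetical placeholder rather than a benchmark or a quoted price, and the model ignores staffing, egress, and discounts. Its only purpose is to show how amortized on-premises cost compares with pay-as-you-go cost, and at what usage level the two meet.

```python
def on_prem_monthly_cost(
    hardware_capex: float, amortization_months: int, monthly_opex: float
) -> float:
    """Amortized hardware cost plus power, cooling, and maintenance per month."""
    return hardware_capex / amortization_months + monthly_opex


def cloud_monthly_cost(
    storage_tb: float,
    storage_price_per_tb: float,
    compute_hours: float,
    compute_price_per_hour: float,
) -> float:
    """Pay-as-you-go cost: storage held plus compute hours actually used."""
    return storage_tb * storage_price_per_tb + compute_hours * compute_price_per_hour


def break_even_compute_hours(
    on_prem_cost: float,
    storage_tb: float,
    storage_price_per_tb: float,
    compute_price_per_hour: float,
) -> float:
    """Monthly compute hours at which cloud cost equals the on-prem cost."""
    return (on_prem_cost - storage_tb * storage_price_per_tb) / compute_price_per_hour


# Hypothetical inputs, for illustration only.
on_prem = on_prem_monthly_cost(hardware_capex=360_000, amortization_months=36, monthly_opex=5_000)
cloud = cloud_monthly_cost(storage_tb=100, storage_price_per_tb=20.0,
                           compute_hours=500, compute_price_per_hour=4.0)
hours = break_even_compute_hours(on_prem, 100, 20.0, 4.0)
print(f"on-prem: {on_prem:.0f}/month, cloud: {cloud:.0f}/month, break-even: {hours:.0f} hours")
```

With these placeholder inputs the cloud option is cheaper as long as monthly compute stays below the break-even hours. Sustained, near-constant workloads can push usage past that point, which is why continuous cost monitoring matters.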

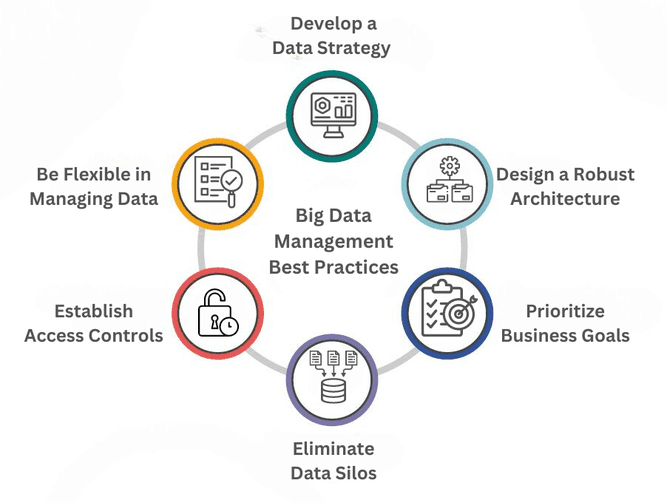

World2Data Verdict: Embracing a Hybrid, Optimized Future

The future of Low-Cost Big Data Management is not about sacrificing capability for cost savings, but rather about strategic optimization. World2Data.com advocates for a pragmatic, hybrid approach that combines the best aspects of open-source flexibility with the elasticity and managed services of cloud providers. Organizations should prioritize a data lakehouse architecture built on cloud object storage, leveraging serverless compute for cost-effective processing and open-source tools for robust analytics. Continuous cost monitoring, automated data lifecycle management, and a strong emphasis on cloud-native data governance (e.g., Cloud IAM and centralized permission services like AWS Lake Formation) are paramount. The actionable recommendation is to invest in upskilling data teams to master these modern, open-source, and cloud-native paradigms, enabling the construction of agile, scalable, and truly economical big data platforms that drive innovation and maintain a clear competitive edge in a data-saturated world. The journey towards low-cost big data management is ongoing, requiring vigilance and adaptability, but the strategic rewards are undeniable.