A/B Testing: Powering Performance and Conversion Growth Through Experimentation

A/B testing is one of the most reliable ways to improve a digital product in today’s competitive landscape. The method compares two versions of a digital asset under controlled conditions to reveal which performs better on a chosen metric. By systematically testing hypotheses against real user behavior, businesses can move beyond assumptions and make data-driven decisions that support sustained growth and better digital experiences.

Platform Category: Experimentation Platform

Core Technology/Architecture: Statistical Inference Engines, Event Tracking, Dynamic Variant Delivery

Key Data Governance Feature: User Consent Management, Experiment Audit Trails, Metric Definition Consistency

Primary AI/ML Integration: Multi-Armed Bandit Optimization, Automated Experiment Design, Predictive Personalization

Main Competitors/Alternatives: Optimizely, VWO, Adobe Target, Split.io

Introduction to A/B Testing: The Cornerstone of Data-Driven Optimization

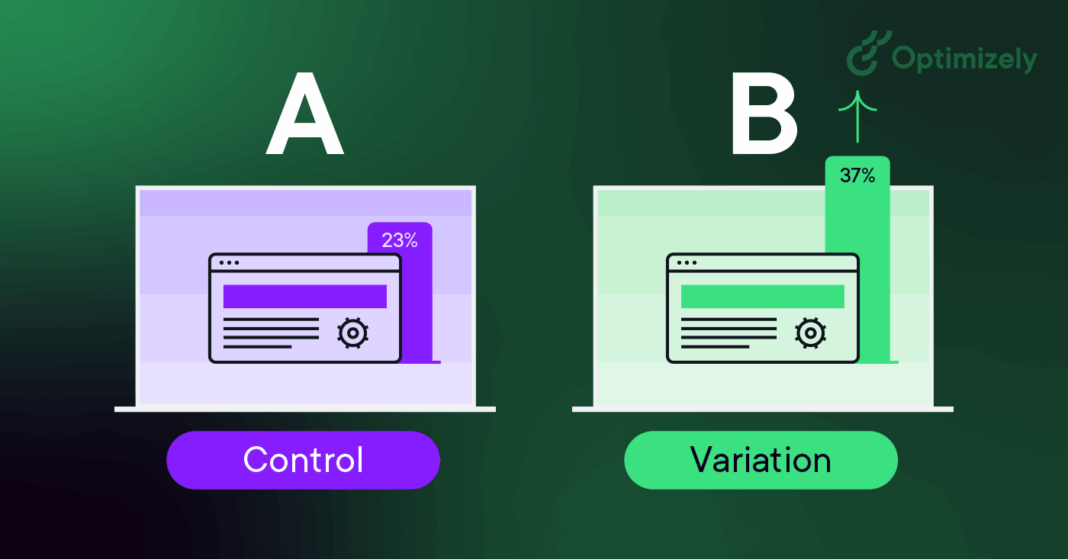

In digital marketing and product development, understanding what truly resonates with users is paramount. This is where A/B testing, also known as split testing, becomes an indispensable tool. At its core, an A/B test is a randomized controlled experiment involving two (or more) variants of a webpage, app screen, email, or any other digital asset. These variants are shown to different, randomly assigned segments of your audience simultaneously, and their performance is measured against predefined metrics. The objective is clear: to identify which version achieves better results, whether that’s higher conversion rates, increased engagement, or improved user satisfaction.

The fundamental principle behind A/B testing is its ability to isolate the impact of a single change. By comparing a ‘control’ (Version A) with a ‘variant’ (Version B) that has only one distinct modification, businesses can confidently attribute any observed performance differences to that specific change. This rigorous, controlled experimentation ensures clarity and provides actionable insights, removing the guesswork from strategic decisions. This article delves deep into how A/B testing improves performance and conversions, its technical underpinnings, challenges, business value, and its evolution beyond traditional methods.

Core Breakdown: The Mechanics and Components of A/B Testing

A successful A/B testing program relies on a robust methodological framework and a suite of technological components working in concert. It’s more than just showing two versions; it’s about controlled data collection, sophisticated analysis, and iterative refinement.

The A/B Testing Workflow Explained

- Hypothesis Formulation: Every A/B test begins with a clear, testable hypothesis. This usually takes the form of “If we change X, then Y will happen (e.g., conversion rate will increase) because Z (reason based on user psychology or existing data).”

- Variant Creation: Based on the hypothesis, a variant (or multiple variants) is created, differing from the control by only one key element. This could be a headline, button color, image, layout, or even an entire user flow.

- Audience Segmentation & Traffic Splitting: The total audience is randomly divided into segments. Traffic to the digital asset is then split, with each segment seeing a different variant (e.g., 50% see A, 50% see B). Randomization is crucial to ensure statistical validity and minimize bias.

- Data Collection: User interactions with each variant are tracked meticulously. This involves event tracking for clicks, scrolls, form submissions, purchases, and other relevant behaviors.

- Statistical Analysis: Once the planned sample size has been reached (rather than stopping the moment a result first looks significant, which inflates false positives), the performance of each variant is compared. Statistical inference engines determine whether the observed differences are likely due to the change being tested or merely to random chance. Key metrics include conversion rate, click-through rate, average session duration, and revenue per user.

- Interpretation and Implementation: If a variant significantly outperforms the control, it is deemed the “winner” and can be fully implemented. If not, insights are gathered, and new hypotheses are formed for subsequent tests.
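The traffic-splitting and analysis steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular platform implements them: the function names `assign_variant` and `two_proportion_z_test`, the experiment key `cta-test`, and the example counts are all hypothetical. It uses hash-based bucketing so the same user always sees the same variant, and a pooled two-proportion z-test, which is one common choice for comparing conversion rates.

```python
import hashlib
import math
from statistics import NormalDist


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Deterministically bucket a user into 'A' (control) or 'B' (variant).

    Hashing the experiment name together with the user id gives a stable,
    roughly uniform value in [0, 1), so repeat visits get the same variant.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # first 32 bits scaled to [0, 1)
    return "A" if bucket < split else "B"


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical numbers: 200/10,000 conversions on A, 250/10,000 on B.
    print(assign_variant("user-123", "cta-test"))
    z, p = two_proportion_z_test(200, 10_000, 250, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value below the significance level chosen before the test (0.05 is a common convention) would suggest the difference is unlikely to be chance alone, provided the sample size was fixed in advance.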

Key Architectural Components Powering A/B Tests

- Dynamic Variant Delivery Systems: These systems are responsible for showing the correct version (A or B) to each user in real-time. They often integrate with content delivery networks (CDNs) or directly into application code to minimize latency.

- Event Tracking & Analytics Engines: Comprehensive tracking infrastructure captures every relevant user interaction. This data then feeds into powerful analytics engines that aggregate, process, and visualize test results, often providing real-time dashboards.

- Statistical Inference Engines: These are the brains of the operation, applying statistical methods (e.g., t-tests, chi-squared tests, Bayesian models) to judge whether an observed difference is statistically significant and how much uncertainty surrounds it. Used correctly, they help control false positives and guard against premature conclusions.

- Experiment Management Platforms: Tools that allow users to design, launch, monitor, and analyze multiple experiments simultaneously. They provide user interfaces for defining variants, target audiences, goals, and metrics.
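To make the Bayesian option mentioned above more concrete, here is a small sketch of one way an inference engine might estimate the probability that the variant beats the control. It assumes a Beta-Binomial model with a uniform Beta(1, 1) prior and uses Monte Carlo sampling from the standard library; the function name `prob_b_beats_a`, the number of draws, and the fixed seed are illustrative assumptions, not part of any specific product.

```python
import random


def prob_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   draws: int = 20_000, seed: int = 0) -> float:
    """Estimate P(rate_B > rate_A) under independent Beta(1, 1) priors.

    With a Beta prior and binomial data, each posterior is
    Beta(1 + conversions, 1 + non-conversions). We sample from both
    posteriors and count how often B's sampled rate exceeds A's.
    """
    rng = random.Random(seed)  # seeded so the estimate is reproducible
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


if __name__ == "__main__":
    # Hypothetical counts: 200/10,000 conversions on A, 250/10,000 on B.
    print(f"P(B > A) ~ {prob_b_beats_a(200, 10_000, 250, 10_000):.3f}")
```

The output is a direct probability statement about the variants, which some teams find easier to communicate than a p-value. The threshold at which to declare a winner is still a decision that should be made before the test starts.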

Challenges and Barriers to A/B Testing Adoption

Despite its proven benefits, implementing effective A/B testing can present several challenges:

- Traffic Volume: Small websites or low-traffic areas may struggle to gather enough data to reach statistical significance within a reasonable timeframe, leading to inconclusive tests.

- Test Duration: Conversely, tests run for too short a period might capture anomalies, while overly long tests risk being affected by external factors (e.g., marketing campaigns, seasonality).

- Statistical Misinterpretation: A lack of understanding of statistical significance, confidence intervals, and power can lead to incorrect conclusions or the deployment of changes that don’t actually move the needle.

- Technical Debt & Implementation Complexity: Integrating A/B testing tools, ensuring accurate data tracking, and managing multiple concurrent experiments can be technically demanding and resource-intensive.

- Organizational Silos: Effective A/B testing requires collaboration between product, design, marketing, and engineering teams. Siloed operations can hinder hypothesis generation, implementation, and result interpretation.

- Multivariate Testing Complexity: While A/B tests isolate single changes, testing multiple variables simultaneously (multivariate testing) can quickly become statistically complex and require immense traffic.

- Data Quality & Consistency: Inaccurate event tracking or inconsistent metric definitions can corrupt results, making insights unreliable.
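The traffic-volume and test-duration challenges above can be estimated before a test launches. The sketch below uses the standard normal-approximation formula for comparing two proportions to work out how many users each variant needs, then converts that into a rough run time. The function names `sample_size_per_variant` and `days_to_complete`, and the example baseline rate, effect size, and daily traffic, are assumptions chosen purely for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, mde_abs: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed per variant for a two-sided test.

    baseline: control conversion rate (e.g. 0.02 for 2%)
    mde_abs:  minimum detectable effect as an absolute lift (e.g. 0.005)
    """
    p1 = baseline
    p2 = baseline + mde_abs
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for alpha
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / mde_abs ** 2)


def days_to_complete(n_per_variant: int, daily_visitors: int,
                     variants: int = 2) -> int:
    """Days needed if all daily visitors are split evenly across variants."""
    return math.ceil(n_per_variant * variants / daily_visitors)


if __name__ == "__main__":
    n = sample_size_per_variant(baseline=0.02, mde_abs=0.005)
    print(n, "users per variant")
    print(days_to_complete(n, daily_visitors=2_000), "days at 2,000 visitors/day")
```

With a 2% baseline and a 0.5 percentage point lift to detect, this formula gives roughly 13,800 users per variant, which illustrates why low-traffic pages often cannot detect small effects in a reasonable timeframe. Halving the detectable effect roughly quadruples the required sample.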