ETL Pipeline Best Practices: Architecting Efficient Data Movement in 2025

The strategic importance of a robust ETL pipeline has never been more pronounced than in 2025. As organizations navigate an increasingly complex data landscape, efficient data movement is not just an operational necessity but a critical enabler for competitive advantage. This article delves into the best practices for designing, implementing, and maintaining cutting-edge ETL pipelines, ensuring data flows seamlessly to fuel analytics, machine learning models, and strategic decision-making.

In an era dominated by data-driven insights, the capability to extract, transform, and load data effectively forms the backbone of any successful digital enterprise. This deep dive will explore how modern technologies and methodologies are reshaping the traditional ETL pipeline, making it more agile, scalable, and secure for the demands of tomorrow.

Introduction: The Evolution of ETL in a Data-Driven World

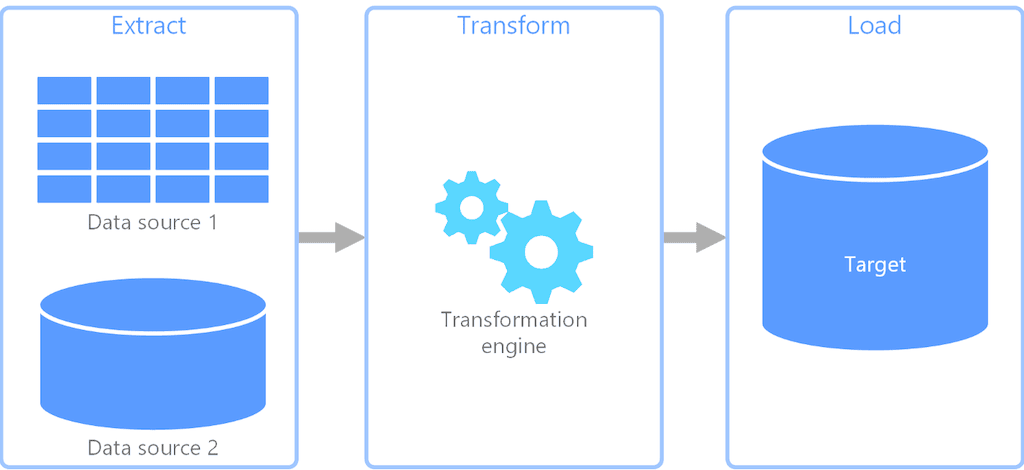

The journey of data from disparate sources to actionable insights is often spearheaded by the Extract, Transform, Load (ETL) process. What began as a foundational technique for moving data into data warehouses has evolved dramatically, especially with the proliferation of cloud computing and the escalating demands of real-time analytics and AI. Today, the modern ETL pipeline transcends its traditional role, becoming a dynamic and intelligent system integral to Cloud Data Integration Platforms.

Our objective is to illuminate the advanced strategies and architectural patterns that define effective ETL pipeline best practices for 2025. We will explore how an ELT-First Approach, coupled with Cloud-Native Modular Design and sophisticated Data Streaming capabilities, is transforming data integration. Furthermore, we’ll examine the crucial role of embedded Data Governance features, such as automated data quality checks and end-to-end data lineage, in maintaining trust and compliance. This article aims to provide a comprehensive guide for data professionals striving to build resilient, high-performance ETL pipelines that power the future of data science and business intelligence.

Core Breakdown: Crafting the Modern ETL Pipeline Architecture

Building an efficient and future-proof ETL pipeline in 2025 requires a multi-faceted approach, integrating advanced technical components with strategic operational considerations. The cornerstone of this architecture is its adaptability and intelligence.

Emphasizing Data Quality and Validation

At the heart of any reliable ETL pipeline lies an unwavering commitment to Data Quality. Poor data quality can cripple analytics, mislead AI models, and erode trust. Therefore, establishing robust validation rules and proactive data cleansing techniques is not merely a best practice; it is a foundational requirement. This involves implementing rules at ingestion points, during transformation, and before loading, ensuring data integrity from the outset. Advanced platforms now offer Automated Data Quality Checks, leveraging machine learning to detect anomalies and inconsistencies that human oversight might miss, thereby preventing costly errors from propagating downstream.
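As a rough illustration of rule-based validation at an ingestion point, the sketch below splits incoming records into valid and rejected sets. The `Rule` class, the `validate` function, and the "orders" fields are all hypothetical names chosen for this example; they do not refer to any specific platform.

```python
from dataclasses import dataclass
from typing import Any, Callable

Record = dict[str, Any]


@dataclass(frozen=True)
class Rule:
    """A named predicate that a record must satisfy."""
    name: str
    check: Callable[[Record], bool]


# Hypothetical rules for an illustrative "orders" feed.
RULES = [
    Rule("order_id present", lambda r: bool(r.get("order_id"))),
    Rule("amount is non-negative number",
         lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0),
    Rule("currency is 3-letter code",
         lambda r: isinstance(r.get("currency"), str) and len(r["currency"]) == 3),
]


def validate(records, rules=RULES):
    """Split records into (valid, rejected); rejected entries carry failed rule names."""
    valid, rejected = [], []
    for rec in records:
        failures = [rule.name for rule in rules if not rule.check(rec)]
        if failures:
            rejected.append((rec, failures))
        else:
            valid.append(rec)
    return valid, rejected
```

Keeping the rejected records together with the names of the rules they failed, rather than silently dropping them, makes it easier to route them to a quarantine area and to report on recurring quality problems.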

Leveraging Modern Cloud-Native Architectures

The future of efficient data movement is closely linked to modern Cloud-Native Architectures. Serverless ETL stands out for its scalability and its cost-effectiveness under variable workloads: compute resources adjust automatically to demand without manual provisioning or management, so the ETL pipeline can absorb fluctuating data volumes. Furthermore, integrating these pipelines with Data Lakehouses provides a unified platform for both structured and unstructured data, enhancing analytical capabilities and simplifying data management. The move towards an ELT-First Approach in these cloud environments is also prominent: raw data is loaded directly into the Data Lakehouse before transformation, which leaves more room to reshape the same raw data for diverse analytical needs.
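The load-then-transform order of an ELT-First Approach can be sketched on a small scale. The example below uses Python's built-in `sqlite3` module as a stand-in for a cloud warehouse or lakehouse; the table names, columns, and the `run_elt` function are assumptions made for illustration only.

```python
import sqlite3


def run_elt(rows):
    """Load raw rows untouched, then transform them with SQL inside the target."""
    conn = sqlite3.connect(":memory:")

    # Load: land the data as-is (all text), with no cleanup yet.
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)

    # Transform: clean and aggregate using the target's own SQL engine.
    conn.execute("""
        CREATE TABLE orders_by_country AS
        SELECT UPPER(TRIM(country)) AS country,
               SUM(CAST(amount AS REAL)) AS total_amount
        FROM raw_orders
        WHERE TRIM(amount) <> ''
        GROUP BY UPPER(TRIM(country))
    """)

    result = conn.execute(
        "SELECT country, total_amount FROM orders_by_country ORDER BY country"
    ).fetchall()
    conn.close()
    return result
```

Because `raw_orders` is kept in its original form, a different transformation can be written later against the same raw table without re-extracting from the source.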

Automation and Orchestration for Efficiency

True efficiency in ETL pipeline operations demands sophisticated Automation and orchestration. Workflow management tools streamline data flow, dependency handling, and error recovery. Beyond basic automation, the integration of AI-powered solutions for Predictive Monitoring and Alerting allows teams to anticipate and address potential issues proactively. This often involves ML for Predictive Pipeline Maintenance, where machine learning algorithms analyze historical pipeline performance data to predict bottlenecks, failures, or resource contention before they impact operations, minimizing downtime and optimizing resource utilization.
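Dependency handling and error recovery are the core of what an orchestrator does. The following is a minimal sketch using the standard-library `graphlib.TopologicalSorter`; the `run_pipeline` function and its retry policy are hypothetical, and a production orchestrator would add scheduling, backoff, logging, and parallelism.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, dependencies, max_attempts=3):
    """Run tasks in dependency order, retrying each failure up to max_attempts.

    tasks:        task name -> zero-argument callable
    dependencies: task name -> set of task names that must finish first
                  (a task with no dependencies and no dependents needs an
                  explicit entry with an empty set to be scheduled)
    """
    order = list(TopologicalSorter(dependencies).static_order())
    completed = []
    for name in order:
        for attempt in range(1, max_attempts + 1):
            try:
                tasks[name]()
                completed.append(name)
                break
            except Exception as exc:
                # Give up only after the final attempt; otherwise retry.
                if attempt == max_attempts:
                    raise RuntimeError(
                        f"task {name!r} failed after {max_attempts} attempts"
                    ) from exc
    return completed
```

Raising after the last attempt stops the run before any downstream task executes, so a failed transform never feeds a load step.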

Prioritizing Security and Compliance

Data Security and Compliance are paramount considerations for any ETL pipeline, especially under stringent regulations like GDPR, CCPA, and HIPAA. Best practices include encrypting data both in transit and at rest to safeguard sensitive information from unauthorized access. Adhering to evolving data governance standards requires robust capabilities like End-to-End Data Lineage and comprehensive Metadata Management. These features provide transparency into data origins, transformations, and destinations, which is crucial for auditability and maintaining trust. Implementing fine-grained access controls and regular security audits further fortifies the pipeline against vulnerabilities.
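One way to picture end-to-end lineage is as a log with one entry per pipeline step, recording what went in and what came out. The sketch below is an assumption-laden illustration: `fingerprint`, `run_with_lineage`, and the entry fields are hypothetical, and real lineage tools capture far richer metadata (column-level mappings, job identifiers, timestamps).

```python
import hashlib
import json


def fingerprint(rows):
    """Stable SHA-256 digest of a list of JSON-serializable rows."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_with_lineage(source_name, rows, steps):
    """Apply (name, function) steps in order, recording one lineage entry per step."""
    lineage = [{
        "step": f"extract:{source_name}",
        "rows_in": None,
        "rows_out": len(rows),
        "input_hash": None,
        "output_hash": fingerprint(rows),
    }]
    for name, step in steps:
        rows_in, input_hash = len(rows), fingerprint(rows)
        rows = step(rows)
        lineage.append({
            "step": name,
            "rows_in": rows_in,
            "rows_out": len(rows),
            "input_hash": input_hash,
            "output_hash": fingerprint(rows),
        })
    return rows, lineage
```

Because each entry's `input_hash` matches the previous entry's `output_hash`, an auditor can check that the chain from source to destination is unbroken.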

Performance Optimization and Tuning

Continuous performance optimization is vital for maintaining an efficient ETL pipeline. This involves carefully evaluating whether batch processing or Real-time ETL (often facilitated by Data Streaming platforms) is appropriate for specific use cases. While batch ETL remains suitable for large, scheduled data loads, real-time streaming is essential for applications requiring immediate data availability, such as fraud detection or personalized recommendations. Within the target data store, techniques like indexing and query optimization speed up subsequent retrieval and improve overall system responsiveness. Because every index adds write overhead, heavy loads often benefit from bulk-load paths or from building indexes after the load completes.
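The batch-versus-streaming decision is often a matter of degree rather than a binary choice. As a simplified sketch, the hypothetical `micro_batches` helper below groups an event stream into fixed-size chunks: a batch size of 1 approximates per-event processing, while a large batch size behaves like a scheduled bulk load.

```python
from itertools import islice


def micro_batches(events, batch_size):
    """Yield lists of up to batch_size events from any iterable, preserving order."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(events)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch
```

For example, `list(micro_batches(range(5), 2))` produces `[[0, 1], [2, 3], [4]]`. Smaller batches lower latency at the cost of more round trips to the target, so the right size depends on the use case.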

Challenges/Barriers to Adoption

Despite the undeniable benefits, organizations face several challenges in adopting and optimizing modern ETL pipeline best practices. One significant hurdle is Data Drift, where the statistical properties of data change over time, rendering existing transformations or models ineffective. Countering it requires continuous monitoring and adaptive pipeline adjustments. Another major barrier is MLOps Complexity: integrating ETL pipeline outputs into MLOps workflows means supplying consistent, high-quality data to machine learning models, which can demand substantial effort. Furthermore, the sheer volume and velocity of modern data call for specialized skills that can be difficult to acquire, leading to a skills gap. Legacy system integration also poses a significant challenge, as older systems may lack APIs or data formats compatible with cloud-native, real-time integration methods and so require custom solutions or middleware.
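Monitoring for data drift can start with something very simple. The sketch below compares the mean of a current sample against a baseline, measured in baseline standard deviations. The function names and the default threshold of 3.0 are illustrative assumptions; this check ignores sample size and distribution shape, so real deployments usually rely on proper statistical tests.

```python
from statistics import mean, stdev


def mean_shift_score(baseline, current):
    """Absolute shift of the current mean, in units of baseline standard deviation."""
    spread = stdev(baseline)
    if spread == 0:
        raise ValueError("baseline has zero variance; score is undefined")
    return abs(mean(current) - mean(baseline)) / spread


def has_drifted(baseline, current, threshold=3.0):
    """Flag drift when the mean shift exceeds the (illustrative) threshold."""
    return mean_shift_score(baseline, current) > threshold
```

A check like this could run on each numeric column after every load, with flagged columns raising an alert before downstream transformations or models consume the data.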

Business Value and ROI

The strategic investment in advanced ETL pipeline best practices yields substantial business value and return on investment. Efficient data movement leads to faster time-to-insight, enabling quicker and more informed decision-making across all business functions. For AI initiatives, high Data Quality for AI is non-negotiable, directly correlating with model accuracy and reliability, which translates into superior predictive capabilities and automated processes. By automating data flows and implementing predictive maintenance, organizations achieve significant operational efficiencies, reducing manual effort and minimizing downtime. This not only lowers operational costs but also allows data teams to focus on higher-value analytical tasks rather than troubleshooting pipelines. The improved data governance and compliance capabilities mitigate regulatory risks, safeguarding the organization’s reputation and avoiding hefty fines. Ultimately, a well-architected ETL pipeline serves as a core competitive differentiator, enabling agile responses to market changes and fostering innovation.

Comparative Insight: ETL vs. Traditional Data Architectures

The landscape of data integration has undergone a profound transformation, moving beyond the confines of traditional data warehouses and data lakes. While conventional data warehouses excel at structured data for business intelligence and data lakes offer vast storage for raw, multi-structured data, modern data platforms, empowered by sophisticated ETL pipeline strategies, bridge these gaps and introduce new paradigms. Traditional ETL processes were often batch-oriented, designed for highly structured data, and performed transformations before loading into a data warehouse. This rigid structure, while effective for its time, struggled with the scale, variety, and velocity of modern data.

The emergence of ELT Methodologies (Extract, Load, Transform) represents a significant shift. Here, data is loaded into a target system (often a cloud data warehouse or Data Lakehouse) in its raw form first, with transformations performed inside the target system using its scalable compute resources. This approach removes the separate transformation tier as a bottleneck and allows schema-on-read, where structure is applied when data is queried rather than when it is loaded, making it well suited to exploratory analytics and evolving data requirements. Furthermore, the rise of Data Streaming Platforms fundamentally alters the traditional batch-processing model, enabling real-time data ingestion and processing for immediate insights, a capability largely absent in older ETL designs. These platforms integrate with event-driven architectures, continuously feeding dashboards, alerting systems, and AI models with fresh data.
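Schema-on-read can be illustrated with raw JSON lines that are stored untouched and only given a structure when they are read. In this sketch the `read_with_schema` function and the field names are hypothetical; the schema is simply a mapping from field name to a casting function.

```python
import json


def read_with_schema(raw_lines, schema):
    """Project raw JSON lines onto a schema at read time.

    schema: field name -> callable that casts the raw value.
    Fields that are missing or cannot be cast become None; extra fields are ignored.
    """
    for line in raw_lines:
        doc = json.loads(line)
        row = {}
        for field, cast in schema.items():
            try:
                row[field] = cast(doc[field])
            except (KeyError, TypeError, ValueError):
                row[field] = None
        yield row
```

Two consumers can read the same raw lines with different schemas, which is what makes this pattern convenient when requirements are still evolving.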

Another key development is Data Virtualization Solutions, which offer an alternative to physical data movement. Instead of extracting and loading data, data virtualization creates a virtual data layer that integrates data from multiple sources in real time without moving it. While not a direct replacement for an ETL pipeline in all scenarios, it serves as a powerful complement, especially for use cases requiring immediate access to federated data sources without the overhead of building and maintaining physical data pipelines for every requirement. Modern Cloud Data Integration Platforms now often combine aspects of ELT, data streaming, and sometimes data virtualization, offering a holistic approach to data management that far surpasses the capabilities of disconnected, legacy systems. This evolution highlights a move towards more dynamic, integrated, and intelligent data ecosystems.
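The idea of a virtual data layer can be reduced to a toy example: a view that holds no data of its own and queries each source only when asked. The `make_virtual_view` function and the two fetcher callables below are hypothetical stand-ins for real source connectors.

```python
def make_virtual_view(fetch_customer, fetch_orders):
    """Return a query function that combines both sources at call time.

    fetch_customer: customer id -> dict, or None if unknown
    fetch_orders:   customer id -> list of dicts with an "amount" key
    Nothing is copied ahead of time, so results always reflect current source data.
    """
    def query(customer_id):
        customer = fetch_customer(customer_id)
        if customer is None:
            return None
        orders = fetch_orders(customer_id)
        return {**customer, "order_total": sum(o["amount"] for o in orders)}

    return query
```

The trade-off is that every query pays the cost of reaching the sources, which is why virtualization tends to complement physical pipelines rather than replace them.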

World2Data Verdict: The Intelligent and Adaptive Future of Data Integration

In 2025, the future of the ETL pipeline is unequivocally intelligent and adaptive. World2Data.com believes that organizations must move beyond reactive data management to embrace proactive, AI-augmented strategies. The imperative is to implement a modular, cloud-native ETL pipeline architecture that not only scales effortlessly but also self-optimizes, leveraging AI-powered anomaly detection and predictive maintenance to ensure continuous data flow and quality. An unshakeable focus on Data Governance, facilitated by automated lineage and robust security, will differentiate leading enterprises.

Our recommendation is to strategically invest in solutions that champion an ELT-First Approach, prioritizing Data Streaming for real-time needs, and deeply embedding MLOps principles throughout the data lifecycle. The seamless integration of a well-architected ETL pipeline into a broader data strategy, inclusive of Data Lakehouses and advanced analytical workloads, will be the true determinant of success. The continuous evolution of data means the principles guiding an effective ETL pipeline will always adapt. Staying abreast of emerging tools and methodologies will ensure data movement remains a catalyst for innovation and growth, not a bottleneck.