Data Integration Governance: Aligning Systems and Standards for Trusted Data

Platform Category: Data Governance and Integration Platforms

Core Technology/Architecture: Metadata-Driven Integration, Policy-Based Automation, Centralized Data Lineage

Key Data Governance Feature: Data Quality Rules Enforcement, Automated Data Lineage, Role-Based Access Control for Integrated Data, Metadata Management

Primary AI/ML Integration: AI-driven Data Discovery, Predictive Data Quality, Automated Data Mapping Suggestions

Main Competitors/Alternatives: Informatica, IBM, Collibra, Talend, Microsoft Purview (formerly Azure Purview), Google Cloud Data Catalog

Data Integration Governance is no longer just an IT buzzword; it is a strategic imperative for any organization navigating the complexities of modern data landscapes. Effective Data Integration Governance keeps information flowing across systems consistent, accurate, and reliable, which is the foundation for sound business decisions. It provides the framework for managing how data is combined from disparate sources, creating a unified and trustworthy view that advanced analytics, regulatory compliance, and operational efficiency all depend on.

The Strategic Imperative of Robust Data Integration Governance

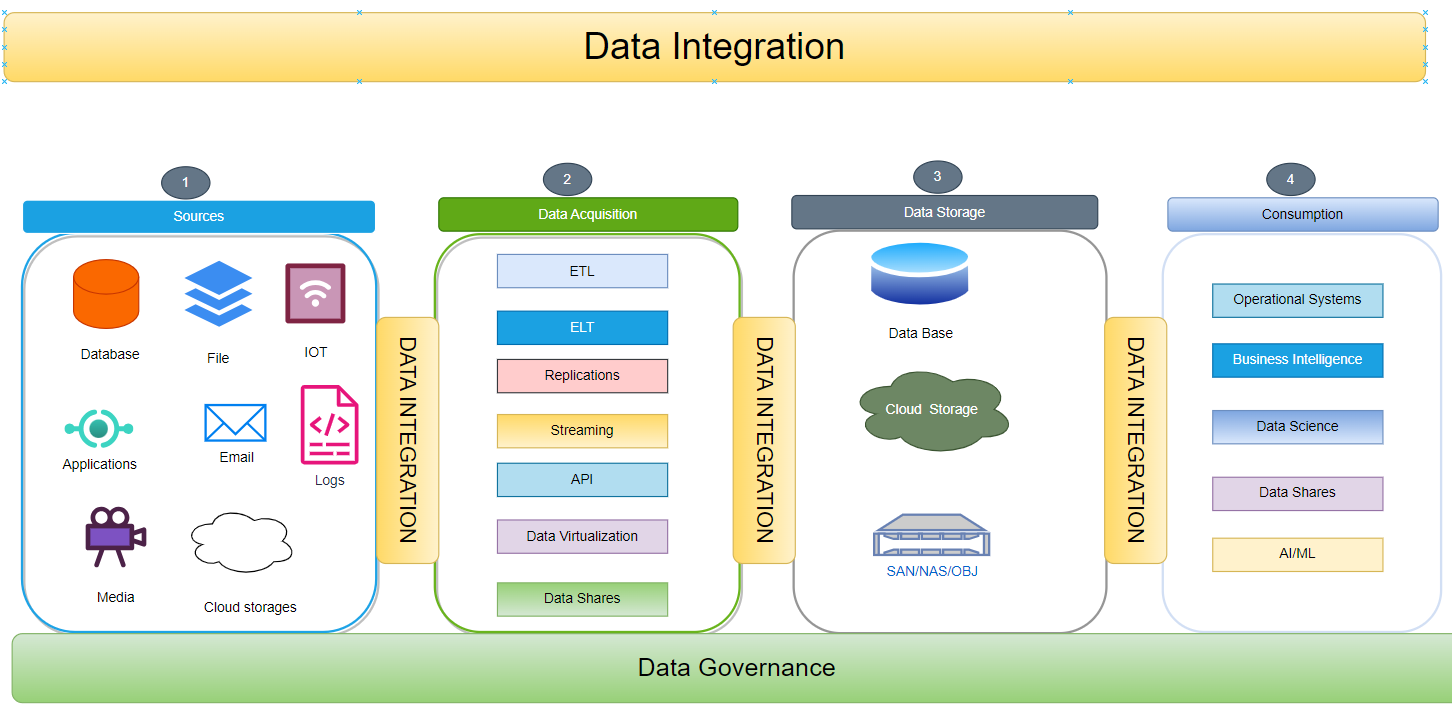

In today’s hyper-connected enterprise, data rarely resides in a single, monolithic system. Instead, it is scattered across a myriad of applications, databases, cloud services, and legacy platforms. This fragmentation, while a natural outcome of digital transformation, introduces significant challenges related to data quality, consistency, security, and usability. This is precisely where Data Integration Governance steps in, providing the overarching framework to manage and orchestrate the flow of data across these diverse sources. Its primary objective is to create a unified, consistent, and trustworthy view of organizational data by standardizing formats, definitions, and access policies.

At its core, Data Integration Governance involves establishing policies, processes, and responsibilities for managing how data is combined from different sources. This includes standardizing data formats and definitions, implementing robust data quality checks, and enforcing security protocols to ensure seamless data flow and integrity. Without effective governance, data integration efforts can lead to a chaotic landscape of conflicting information, data silos, compliance risks, and ultimately, poor business decisions. This article will provide a deep dive into the technical and architectural components of an effective Data Integration Governance framework, explore the common challenges organizations face, highlight the immense business value it delivers, and compare its paradigm to traditional data management approaches.

Dissecting the Architecture and Components of Data Integration Governance

A robust Data Integration Governance framework is built upon several interconnected pillars, each contributing to the overall integrity, usability, and trustworthiness of integrated data. Understanding these components is crucial for any organization aiming to establish a sustainable and scalable governance strategy.

Key Architectural Pillars and Features:

- Metadata Management: This is the backbone of effective governance. Metadata, or “data about data,” provides context, definitions, relationships, and lineage for all integrated datasets. A centralized metadata repository enables a comprehensive understanding of data assets, facilitating data discovery, impact analysis, and consistent interpretation across different systems. Technologies leveraging Metadata-Driven Integration are pivotal here, automating much of the mapping and transformation processes.

- Data Quality Management: Ensuring the accuracy, completeness, consistency, validity, and timeliness of data is paramount. Data Quality Rules Enforcement, including profiling, validation, cleansing, and monitoring, must be applied at every stage of the integration pipeline. This proactive approach prevents erroneous data from propagating across systems, thereby maintaining trust in integrated datasets. AI-driven Data Quality tools are increasingly used for predictive identification and remediation of data quality issues.

- Data Lineage and Traceability: This feature provides a complete audit trail of data’s journey from its source to its destination, including all transformations and aggregations performed along the way. Centralized Data Lineage is critical for understanding data origins, diagnosing errors, ensuring compliance, and assessing the impact of changes. Automated Data Lineage tools greatly simplify this complex task.

- Policy-Based Automation and Compliance: Governance policies define rules for data usage, security, quality, and retention. These policies must be translated into automated workflows and controls within the integration platform. This ensures consistent application of rules across all data flows and helps organizations meet stringent regulatory mandates such as GDPR, HIPAA, and CCPA, which often have specific requirements for integrated and shared data.

- Security and Access Control: Protecting integrated data from unauthorized access, modification, or disclosure is non-negotiable. Role-Based Access Control for Integrated Data ensures that only authorized users or applications can access specific datasets or perform certain operations. This also extends to data encryption, anonymization, and auditing of access logs to maintain confidentiality and integrity.

- Data Catalog and Discovery: A comprehensive data catalog acts as a central inventory of all data assets, making them easily discoverable and understandable for business users and data scientists. Coupled with AI-driven Data Discovery capabilities, it can automatically classify data, suggest relationships, and provide a holistic view of the integrated data landscape, significantly reducing the time spent searching for and understanding data.

- AI/ML Integration for Enhanced Governance: Modern platforms are increasingly incorporating AI and Machine Learning to supercharge governance efforts. This includes AI-driven Data Discovery for automatically identifying and tagging data assets, Predictive Data Quality to anticipate and prevent data errors before they occur, and Automated Data Mapping Suggestions that leverage historical patterns to accelerate integration projects.
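
To make two of the pillars above concrete, the following is a minimal sketch of Data Quality Rules Enforcement combined with lineage capture at a single pipeline step. It is an illustration under stated assumptions, not how any particular platform implements these features: the names `QualityRule`, `LineageEvent`, `apply_rules`, and `integrate`, as well as the example rules and column names, are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class QualityRule:
    """A declarative rule: a name, the column it inspects, and a predicate."""
    name: str
    column: str
    check: Callable[[object], bool]


@dataclass
class LineageEvent:
    """One hop in a dataset's journey, recorded for later audit."""
    source: str
    target: str
    transformation: str
    rows_in: int
    rows_out: int
    recorded_at: str


# Hypothetical rules; a real deployment would load these from a governed catalog.
RULES = [
    QualityRule("customer_id_present", "customer_id", lambda v: v not in (None, "")),
    QualityRule("email_has_at_sign", "email", lambda v: isinstance(v, str) and "@" in v),
]


def apply_rules(rows, rules):
    """Split rows into those passing every rule and those failing at least one."""
    passed, rejected = [], []
    for row in rows:
        failures = [r.name for r in rules if not r.check(row.get(r.column))]
        if failures:
            rejected.append((row, failures))
        else:
            passed.append(row)
    return passed, rejected


def integrate(rows, source, target, rules, lineage_log):
    """Run the quality gate and append a lineage event describing the step."""
    passed, rejected = apply_rules(rows, rules)
    lineage_log.append(
        LineageEvent(
            source=source,
            target=target,
            transformation="quality_gate:" + ",".join(r.name for r in rules),
            rows_in=len(rows),
            rows_out=len(passed),
            recorded_at=datetime.now(timezone.utc).isoformat(),
        )
    )
    return passed, rejected
```

Calling `integrate(rows, "crm", "warehouse.customers", RULES, lineage_log)` returns the accepted rows together with the rejected rows and the names of the rules each one failed, while `lineage_log` accumulates one event per step. Keeping rules as data rather than inline code is what lets the same checks be applied consistently at every stage of a pipeline.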

Challenges/Barriers to Adoption:

Organizations often face significant hurdles in achieving robust Data Integration Governance. One primary challenge is managing the sheer diversity of data sources, ranging from antiquated legacy systems to contemporary cloud applications, APIs, and streaming data feeds. Each source often comes with its own data models, formats, and quality levels, making harmonization a complex undertaking. Overcoming internal organizational silos where data is fragmented and managed independently by different departments requires not just technical solutions but also significant cultural shifts and collaborative efforts.

Furthermore, adapting to continuously evolving regulatory landscapes adds another layer of complexity. Compliance requirements change frequently, demanding agile governance frameworks that can quickly incorporate new rules and ensure adherence across all integrated datasets. Scalability and performance issues also arise as data volumes and velocity grow, straining existing integration infrastructure. Data drift, where the statistical properties of integrated data change over time, can silently degrade model performance and data quality, posing a subtle yet significant governance challenge. Finally, a persistent barrier is the lack of skilled personnel who possess both data governance expertise and technical proficiency in modern integration technologies, leading to bottlenecks in implementation and maintenance.
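
Data drift is easier to govern once it is measured. One common way to quantify it (chosen here for illustration; the article does not prescribe a method) is the Population Stability Index, which compares how a numeric column is distributed in a baseline sample versus a current one. The sketch below assumes a hypothetical helper named `population_stability_index`, equal-width bins derived from the baseline, and a small floor `eps` to avoid taking the logarithm of zero.

```python
import math


def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """Compare two numeric samples; larger values indicate more drift.

    Bins are equal-width over the baseline's range, and values outside that
    range are counted in the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread; cannot build bins")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        total = len(values)
        # Floor each proportion at eps so empty bins do not produce log(0).
        return [max(c / total, eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but that threshold is a convention rather than a standard, and it should be tuned per dataset. A governance workflow might compute this per column on each load and raise an alert or block promotion when the value crosses the agreed limit.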

Business Value and ROI:

The advantages of a well-executed Data Integration Governance strategy are profound and yield significant returns on investment. It leads directly to improved data accuracy and reliability, which in turn supports better analytics and more confident decision-making built on a trusted single source of truth. By reducing data discrepancies and ensuring consistent data quality, organizations significantly reduce operational risks and the associated costs of errors, rework, and compliance failures.

Moreover, strong governance fosters operational efficiency by automating data flows and standardizing processes, freeing up valuable resources. Faster time-to-insight and innovation are achieved as data scientists and analysts spend less time wrangling data and more time extracting value. This also translates into an improved customer experience through a unified and accurate view of customer data, enabling personalized interactions and services. Ultimately, robust Data Integration Governance builds trust in data assets, transforming them from a liability into a strategic advantage that drives competitive differentiation.

Comparative Insight: Data Integration Governance in Contrast to Traditional Data Architectures

To fully appreciate the modern approach to Data Integration Governance, it’s beneficial to contrast it with traditional data management paradigms like the Data Lake and Data Warehouse. While these architectures have served their purpose, they often fall short in addressing the comprehensive governance needs of today’s complex data ecosystems.

Traditional Data Lake/Data Warehouse:

- Focus: Primarily on storage, aggregation, and reporting. Data warehouses excel at structured data for business intelligence, while data lakes handle vast amounts of raw, multi-structured data.

- Governance Approach: Governance is often an afterthought, implemented reactively, or fragmented across different tools and processes. In data lakes, the “schema-on-read” approach can lead to data swamps without proactive governance. Data warehouses typically have more structured governance for defined ETL processes, but it can be rigid and less adaptable to new data sources.

- Integration: Often relies on ad-hoc ETL scripts or point-to-point integrations that can quickly become unmanageable, leading to inconsistencies, data redundancy, and a lack of a unified view. Data quality checks might occur at specific ingestion points but lack end-to-end visibility.

- Metadata and Lineage: Manual or limited metadata management. Comprehensive data lineage tracking is frequently a manual, labor-intensive process, making it difficult to trace data origins and transformations effectively.

- Agility and Scalability: Can struggle with the velocity and variety of real-time data or rapidly evolving business requirements. Adding new data sources often requires significant re-engineering.

Modern Data Integration Governance Platforms:

- Focus: Proactive, embedded, and holistic management of data from ingestion to consumption, with a strong emphasis on data quality, security, and compliance across integrated datasets.

- Governance Approach: Governance is central and integrated into the entire data lifecycle. It leverages Metadata-Driven Integration and Policy-Based Automation to enforce rules and standards automatically. This allows for a “schema-on-write” mentality even for raw data: structure and quality expectations are checked at ingestion rather than deferred to read time, which helps prevent data swamps and ensures data quality from the outset.

- Integration: Utilizes advanced integration capabilities, including real-time streaming, APIs, and sophisticated ETL/ELT tools, all governed by centralized policies. This ensures consistent data definitions and transformations across all connected systems. Automated Data Mapping Suggestions powered by AI significantly accelerate integration projects.

- Metadata and Lineage: Features robust, automated metadata management and Centralized Data Lineage. Tools provide a graphical, end-to-end view of data flow, enabling quick impact analysis and compliance auditing. AI-driven Data Discovery enhances the cataloging process.

- Agility and Scalability: Designed for agility, these platforms support diverse data types (structured, unstructured, semi-structured) and scale to handle massive data volumes and high-velocity streams. They are inherently more adaptable to new data sources and evolving business needs, making them well suited to MLOps and AI readiness by providing trusted, governed datasets.
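
The policy-driven approach described in the list above can be sketched in a few lines. The example below is a simplified illustration, not a vendor API: `DatasetPolicy`, `validate_on_write`, and `read_for_role` are hypothetical names, and the policy fields (an expected schema, masked columns, and roles with full access) are assumptions chosen to show a write-time schema check alongside Role-Based Access Control.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetPolicy:
    """A centrally defined policy for one dataset (hypothetical structure)."""
    dataset: str
    schema: dict                       # column name -> expected Python type
    masked_columns: frozenset          # columns hidden from ordinary roles
    roles_with_full_access: frozenset  # roles allowed to see unmasked values


def validate_on_write(record, policy):
    """Return a list of schema violations; an empty list means the write is allowed."""
    errors = []
    for column, expected in policy.schema.items():
        if column not in record:
            errors.append(f"missing column: {column}")
        elif not isinstance(record[column], expected):
            actual = type(record[column]).__name__
            errors.append(f"{column}: expected {expected.__name__}, got {actual}")
    for column in sorted(set(record) - set(policy.schema)):
        errors.append(f"unexpected column: {column}")
    return errors


def read_for_role(record, role, policy):
    """Return the record as the given role may see it, masking restricted columns."""
    if role in policy.roles_with_full_access:
        return dict(record)
    return {
        key: ("***" if key in policy.masked_columns else value)
        for key, value in record.items()
    }
```

Because both functions take the policy as an argument, changing a rule means editing one policy object rather than every pipeline that touches the dataset, which is the practical difference from ad-hoc ETL scripts with checks embedded in each job.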

In essence, while traditional architectures provide the storage and foundational processing, modern Data Integration Governance platforms provide the intelligence, automation, and oversight needed to transform raw data into a truly trusted and actionable enterprise asset. They elevate data integration beyond mere technical connectivity to a strategic capability that ensures data reliability and compliance.

World2Data Verdict: Charting the Future with Holistic Data Integration Governance

The intricate tapestry of modern enterprise data demands more than just integration; it requires intelligent, proactive, and comprehensive governance. The World2Data perspective is clear: Data Integration Governance is not a project with an endpoint but a continuous, evolving strategic imperative. Organizations that view it as such will be best positioned to unlock the full potential of their data assets, transforming them from a chaotic liability into a powerful engine for innovation and competitive advantage.

The future of data management is inextricably linked with robust governance, and AI and Machine Learning will serve as powerful enablers, automating mundane tasks, predicting data quality issues, and providing deeper insights into data relationships. Platforms from key players like Informatica, IBM, Collibra, Talend, Microsoft Purview (formerly Azure Purview), and Google Cloud Data Catalog are continually evolving, integrating advanced capabilities such as Metadata-Driven Integration, Policy-Based Automation, and AI-driven Data Discovery. For any enterprise embarking on or maturing its data strategy, the recommendation is unequivocal: invest strategically in a holistic Data Integration Governance framework. Foster a culture of data stewardship across all departments, leverage advanced technologies for automation and monitoring, and ensure that every data flow, from ingestion to consumption, is underpinned by clear policies and robust oversight. Only then can data truly become the trusted, aligned, and standards-compliant asset it needs to be in the digital age.