Optimizing Data Quality with Next-Generation AI: Driving Data Excellence and Strategic Insight

Platform Category: Data Quality/Data Observability

Core Technology/Architecture: Machine Learning Models for Anomaly Detection

Key Data Governance Feature: Automated Data Discovery and Classification

Primary AI/ML Integration: Built-in ML for proactive data monitoring and issue resolution

Main Competitors/Alternatives: Monte Carlo, Bigeye, Anomalo, Soda, Collibra DQ

Next-generation AI is changing how businesses manage data quality in an increasingly complex data landscape. Organizations worldwide grapple with inconsistent, incomplete, or outdated information, which leads to flawed insights and misguided strategies. Traditional data management tools often cannot keep pace with the volume and velocity of modern data streams, and this hinders both decision-making and operational efficiency.

This is where Next-Gen AI Data Quality solutions come in. Unlike conventional methods that rely on rigid, hand-written rules, these systems use machine learning and deep learning to identify anomalies, cleanse inaccuracies, and enrich datasets with far less manual effort. Their core capability is intelligent automation: detecting issues before they affect downstream operations and giving all analytics a more reliable foundation.

Introduction: Elevating Data Integrity in the AI Era

In today’s hyper-competitive and data-driven world, the integrity of information is paramount. Every strategic decision, every customer interaction, and every operational process depends on the reliability of the underlying data. Yet the rapid expansion of data sources, formats, and volumes makes high data quality harder to maintain than ever. Businesses are drowning in data but starving for trusted insights, often held back by issues ranging from simple typos and missing values to schema drift and inconsistencies across disparate systems. They need solutions that can both cope with this scale and proactively keep data trustworthy.

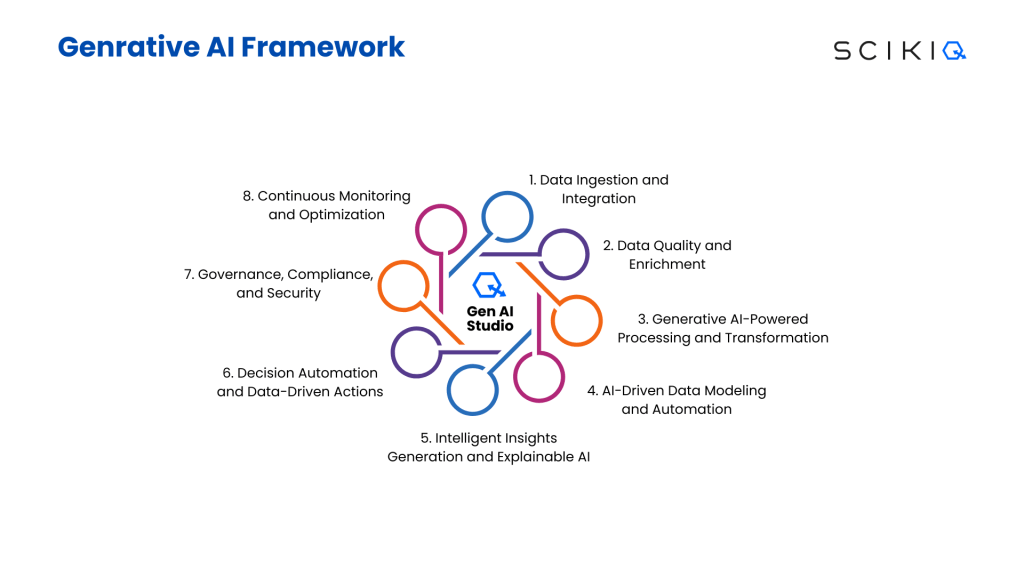

This article delves into the paradigm shift introduced by Next-Gen AI Data Quality platforms. We aim to provide a comprehensive analysis of how these innovative solutions fundamentally redefine data governance and management. By moving beyond traditional, reactive, rule-based approaches, AI-powered platforms offer a proactive, intelligent, and scalable framework for identifying, resolving, and preventing data quality issues. We will explore their core architectural components, highlight their significant business value, dissect the challenges associated with their adoption, and compare them against conventional data management paradigms, culminating in World2Data.com’s expert verdict on their future impact.

Core Breakdown: Architectural Pillars of Next-Gen AI Data Quality

The architecture of a Next-Gen AI Data Quality platform differs fundamentally from that of its rule-based predecessors: it is built on machine learning and deep learning models designed for autonomy, adaptability, and scale. Several components work in concert to maintain data integrity:

Intelligent Data Profiling and Discovery

- Automated Schema Inference: AI algorithms analyze raw data to infer schemas, data types, and relationships automatically, significantly reducing the manual effort that traditional metadata management requires.

- Contextual Data Understanding: Beyond basic profiling, AI infers the semantic meaning and context of data, identifying sensitive information, linking related datasets, and mapping data to business concepts through natural language processing (NLP) and pattern recognition.

- Proactive Anomaly Detection: Unlike static thresholds, ML models continuously learn normal data patterns and automatically flag deviations, outliers, drift, or sudden changes in data distribution that indicate potential quality issues. This extends to detecting anomalies in data freshness, volume, and schema over time.
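Schema inference can be illustrated with a deliberately simple, non-ML sketch: each column receives the most specific type that nearly all of its non-empty values parse as. Real platforms use richer statistical models; the `infer_schema` function, the type ladder (integer, float, ISO date, string), and the 95% tolerance are illustrative assumptions, not any vendor's API.

```python
from datetime import date


def _is_int(value: str) -> bool:
    try:
        int(value)
        return True
    except ValueError:
        return False


def _is_float(value: str) -> bool:
    try:
        float(value)
        return True
    except ValueError:
        return False


def _is_date(value: str) -> bool:
    try:
        date.fromisoformat(value)  # ISO dates such as "2024-01-05"
        return True
    except ValueError:
        return False


# Hypothetical type ladder, ordered from most to least specific.
_CHECKS = [("integer", _is_int), ("float", _is_float), ("date", _is_date)]


def infer_schema(rows, min_share=0.95):
    """Infer a type per column from string-valued records.

    A column gets the most specific type that at least `min_share` of its
    non-empty values parse as; otherwise it falls back to "string".
    """
    columns = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, [])
            if value not in (None, ""):
                columns[name].append(value)

    schema = {}
    for name, values in columns.items():
        inferred = "string"
        if values:
            for type_name, check in _CHECKS:
                share = sum(check(v) for v in values) / len(values)
                if share >= min_share:
                    inferred = type_name
                    break
        schema[name] = inferred
    return schema
```

The tolerance lets a column with a few malformed entries keep its dominant type instead of being widened to a string, so those entries could then be surfaced as quality issues.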

Advanced Anomaly Detection and Monitoring

- Machine Learning for Data Drift: Specialized ML models monitor for data drift (changes in the characteristics of input data that can degrade downstream model performance) across dimensions ranging from statistical distributions to feature correlations.

- Deep Learning for Complex Patterns: Deep learning techniques are employed to identify subtle, non-obvious data quality issues that traditional rule engines would miss, such as complex data integrity violations in unstructured or semi-structured data.

- Continuous Data Validation: AI systems validate data continuously, whether it arrives from streaming sources or is ingested in batches, checking that it meets expected quality standards and flagging anomalies as soon as they appear.
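Continuous validation against learned expectations might look like the following minimal sketch, which assumes a single numeric column: a profile (allowed value range and baseline null rate) is learned from trusted history, and each incoming batch is checked against it. The names `learn_profile` and `validate_batch` and the `slack` and `null_tolerance` parameters are hypothetical; a production system would likely learn many more statistics per column.

```python
from dataclasses import dataclass


@dataclass
class ColumnProfile:
    """Expectations learned from trusted historical values of one numeric column."""
    min_value: float
    max_value: float
    null_rate: float


def learn_profile(history, slack=0.1):
    """Learn an allowed range and a baseline null rate from historical values.

    `slack` widens the observed range by a fraction of its span so that
    ordinary variation is not flagged.
    """
    present = [v for v in history if v is not None]
    if not present:
        raise ValueError("history contains no non-null values")
    lo, hi = min(present), max(present)
    pad = (hi - lo) * slack
    return ColumnProfile(lo - pad, hi + pad, 1 - len(present) / len(history))


def validate_batch(batch, profile, null_tolerance=0.05):
    """Check an incoming batch against the learned profile; return a list of issues."""
    if not batch:
        return []
    issues = []
    present = [v for v in batch if v is not None]
    null_rate = 1 - len(present) / len(batch)
    if null_rate > profile.null_rate + null_tolerance:
        issues.append(
            f"null rate {null_rate:.0%} exceeds learned baseline {profile.null_rate:.0%}"
        )
    out_of_range = [v for v in present if not profile.min_value <= v <= profile.max_value]
    if out_of_range:
        issues.append(
            f"{len(out_of_range)} value(s) outside learned range "
            f"[{profile.min_value:g}, {profile.max_value:g}]"
        )
    return issues
```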

Automated Data Cleansing and Enrichment

- AI-Powered Remediation Suggestions: Platforms use AI to suggest, and in some cases automate, data cleansing tasks such as correcting inconsistencies, standardizing formats, deduplicating records, and filling missing values based on learned patterns and external knowledge bases.

- Data Enrichment: AI can enrich existing datasets with information from external, trusted sources, improving the completeness and utility of data for analytics and AI/ML model training, including the features managed in a Feature Store. Data quality must be verified before features are curated and stored there for reuse, because an error in a shared feature propagates to every model that consumes it.
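The cleansing steps above can be sketched in plain Python under some stated assumptions: records are dictionaries with `email`, `name`, and `country` fields, duplicates share an email address, and a missing country is imputed from the most common country among records with the same email domain. That frequency rule is a simple stand-in for the learned patterns described above, and all field and function names are hypothetical.

```python
from collections import Counter


def standardize(record):
    """Normalize formats: trim whitespace, lowercase emails, title-case names,
    uppercase country codes, and turn empty countries into None."""
    return {
        "email": record["email"].strip().lower(),
        "name": " ".join(record["name"].split()).title(),
        "country": (record.get("country") or "").strip().upper() or None,
    }


def clean(records):
    """Standardize, deduplicate on email (keeping the first occurrence),
    and impute missing countries from the same email domain."""
    seen, deduped = set(), []
    for rec in map(standardize, records):
        if rec["email"] not in seen:
            seen.add(rec["email"])
            deduped.append(rec)

    # Count known countries per email domain: a stand-in for a learned model.
    by_domain = {}
    for rec in deduped:
        if rec["country"]:
            domain = rec["email"].split("@")[-1]
            by_domain.setdefault(domain, Counter())[rec["country"]] += 1

    for rec in deduped:
        if rec["country"] is None:
            counts = by_domain.get(rec["email"].split("@")[-1])
            if counts:
                rec["country"] = counts.most_common(1)[0][0]
    return deduped
```

In a real pipeline an imputed value would usually be flagged as a suggestion for review rather than written back silently.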

Data Lineage, Observability, and Governance

- Automated Data Lineage Mapping: The platform automatically maps data flows from source to consumption, providing end-to-end visibility into data transformations and origins. This visibility is crucial for tracing quality issues to their source and for demonstrating compliance.

- Predictive Quality Insights: By analyzing historical data quality trends and usage patterns, AI can predict potential future data quality bottlenecks or issues, allowing for proactive intervention rather than reactive fixes.

- Support for Data Labeling: While not directly labeling data, Next-Gen AI Data Quality platforms ensure that data fed into data labeling processes is clean, consistent, and relevant, drastically improving the efficiency and accuracy of human and automated labeling efforts.
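Once lineage has been mapped, it is essentially a directed graph, and the debugging use case reduces to graph traversal: walk downstream to find every asset affected by a faulty table, or upstream to list root-cause candidates. The asset names in `LINEAGE` are invented for illustration; a real platform would derive these edges automatically rather than from a hand-written dictionary.

```python
from collections import deque

# Hypothetical lineage edges: each asset maps to the assets it feeds.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "raw.customers": ["staging.customers"],
    "staging.orders": ["marts.revenue", "features.order_stats"],
    "staging.customers": ["marts.revenue"],
    "marts.revenue": ["dashboard.finance"],
}


def downstream(graph, start):
    """Return every asset reachable from `start` (breadth-first search)."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def upstream(graph, target):
    """Return every asset that feeds `target`, by searching the reversed graph."""
    reversed_graph = {}
    for parent, children in graph.items():
        for child in children:
            reversed_graph.setdefault(child, []).append(parent)
    return downstream(reversed_graph, target)
```

For example, a quality issue in `staging.orders` would flag `marts.revenue`, `features.order_stats`, and `dashboard.finance` as potentially affected.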

Challenges and Barriers to Adoption

Despite the immense promise, integrating Next-Gen AI Data Quality solutions is not without its hurdles. One significant challenge is drift: over time, the statistical properties of the input data can change (data drift), as can the relationship between the input variables and the target variable (concept drift). While AI data quality tools are designed to detect such shifts, managing and mitigating their effects on downstream models and applications remains complex.
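Data drift in a single variable is often quantified with the Population Stability Index (PSI), which compares the binned proportions of a reference sample (e) and a current sample (a) as the sum over bins of (a - e) * ln(a / e). The sketch below is one way to compute it, assuming equal-width bins taken from the reference range; PSI is a swapped-in illustration, not necessarily what any particular platform uses. A common rule of thumb reads values below 0.1 as stable and above 0.25 as significant drift, though thresholds should be tuned per dataset. PSI only sees input distributions, so detecting concept drift additionally requires labels or model performance monitoring.

```python
import math


def psi(reference, current, bins=10, eps=1e-4):
    """Population Stability Index between a reference and a current sample.

    Uses equal-width bins spanning the reference range; values outside that
    range are clamped into the first or last bin, and `eps` keeps empty bins
    from producing log(0) or a division by zero.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(sample), eps) for c in counts]

    expected, actual = proportions(reference), proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))
```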

Another barrier is the inherent complexity of MLOps (Machine Learning Operations). Deploying, monitoring, and maintaining AI models for data quality requires specialized skills and robust infrastructure. Ensuring the explainability and interpretability of AI-driven quality decisions can also be a challenge, particularly in highly regulated industries where auditability is critical. Integration with existing legacy systems, managing data governance policies across hybrid environments, and the initial investment in new technologies and talent are further considerations that organizations must address.

Business Value and ROI

The return on investment (ROI) from implementing Next-Gen AI Data Quality platforms can be substantial and spans several areas:

- Faster Model Deployment and Better AI Outcomes: Clean, reliable data is the lifeblood of effective AI/ML models. By automating data quality assurance, these platforms significantly accelerate the data preparation phase, leading to faster model development cycles and more accurate, robust AI applications.

- Enhanced Data-Driven Decision Making: Leaders can make strategic decisions with confidence, knowing their insights are based on high-quality, trustworthy data, leading to improved business outcomes across the board.

- Reduced Operational Costs: Automated anomaly detection and remediation drastically reduce the manual effort and time traditionally spent on data cleansing, freeing up data teams for more strategic initiatives.

- Improved Regulatory Compliance: By ensuring data accuracy, consistency, and lineage, organizations can more easily meet stringent regulatory requirements (e.g., GDPR, CCPA, HIPAA), mitigating risks and avoiding costly fines.

- Increased Customer Trust and Experience: Accurate customer data enables personalized experiences, reduces errors in service delivery, and fosters greater customer loyalty.

Comparative Insight: Next-Gen AI Data Quality vs. Traditional Approaches

To truly appreciate the transformative impact of Next-Gen AI Data Quality platforms, it’s essential to compare them with traditional data management paradigms like Data Lakes and Data Warehouses. While these foundational data architectures remain crucial for storage and structured reporting, they often fall short in addressing modern data quality challenges without significant manual intervention.

Traditional Data Lakes and Data Warehouses are designed primarily for storage, aggregation, and structured querying. Data Lakes, known for their ability to store raw, unstructured, and semi-structured data at scale, often become “data swamps” due to a lack of inherent quality controls. Data Warehouses, conversely, impose strict schemas and ETL (Extract, Transform, Load) processes to ensure quality, but these processes are typically static, rule-based, and highly manual. They struggle with:

- Scalability for Real-time Quality: Rule-based systems are often overwhelmed by the velocity and volume of real-time data streams, making proactive quality enforcement difficult.

- Adaptability to Evolving Data: Traditional ETL processes require significant re-engineering when data schemas or business rules change, leading to rigidity and delays.

- Complex Anomaly Detection: Relying on predefined rules, they often fail to detect subtle or novel data quality issues, especially in new or diverse datasets.

- Manual Remediation: Identifying and fixing issues is largely a human-driven process, prone to error, and time-consuming.

In contrast, Next-Gen AI Data Quality platforms are designed from the ground up to be dynamic, intelligent, and autonomous. They act as an intelligent layer on top of or integrated within existing data infrastructures, significantly enhancing their capabilities:

- Proactive and Predictive: Instead of reacting to issues after they occur, AI models continuously monitor data, predict potential problems, and even suggest preventative measures.

- Self-Learning and Adaptive: AI algorithms learn from historical data, user feedback, and changing data patterns to continuously refine their understanding of “good” data, automatically updating quality rules and detection thresholds.

- Holistic Data Observability: They provide comprehensive visibility into data health across the entire data lifecycle, from ingestion to consumption, making data lineage and quality metrics easily accessible.

- Automated Remediation: Many AI platforms can automatically fix common data quality issues or provide highly accurate, actionable recommendations for human intervention, drastically reducing manual effort.

- Scalability and Efficiency: Built on distributed computing frameworks, these platforms can process large volumes of data efficiently and scale out to handle diverse data types and high velocities.
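The self-learning, adaptive thresholds described above can be approximated with an exponentially weighted moving mean and variance: each new value of a monitored metric (a daily row count, for example) is compared with the running baseline and then folded into it, so the threshold follows gradual change without manual re-tuning. `AdaptiveThreshold` and its `alpha`, `k`, and `warmup` parameters are hypothetical choices for this sketch, not a specific product's configuration.

```python
import math


class AdaptiveThreshold:
    """Exponentially weighted baseline for a streaming data quality metric.

    Flags a value whose distance from the running mean exceeds `k` running
    standard deviations, then folds the value into the baseline so the
    threshold tracks gradual change.
    """

    def __init__(self, alpha=0.1, k=3.0, warmup=5):
        self.alpha, self.k, self.warmup = alpha, k, warmup
        self.mean = None
        self.var = 0.0
        self.seen = 0

    def update(self, x):
        """Return True if `x` is anomalous relative to the baseline before `x`."""
        self.seen += 1
        if self.mean is None:
            self.mean = x
            return False
        std = math.sqrt(self.var)
        # No flags until the baseline has seen `warmup` values.
        anomalous = self.seen > self.warmup and abs(x - self.mean) > self.k * std
        # Incremental exponentially weighted mean and variance update.
        diff = x - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)
        return anomalous
```

Folding flagged values into the baseline lets the detector accept a genuine level shift once it persists, at the cost of a wider threshold right after a spike; skipping the update for flagged values makes the opposite trade-off.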

Ultimately, while Data Lakes and Warehouses provide the foundational storage and processing, Next-Gen AI Data Quality solutions provide the critical intelligence layer that transforms raw data into a trusted, high-value asset, enabling organizations to truly harness the power of their data for advanced analytics and AI initiatives.

World2Data Verdict: The Imperative of Intelligent Data Stewardship

The journey towards data excellence is no longer a choice but a strategic imperative, and Next-Gen AI Data Quality platforms are the leading vehicle for this transformation. World2Data.com asserts that these solutions represent the critical missing link between raw, often chaotic, enterprise data and the high-fidelity information required to power truly impactful AI, advanced analytics, and intelligent automation. The era of manual, reactive data quality management is rapidly drawing to a close, having proven inadequate for the complexity and velocity of modern data.

Our analysis indicates that organizations that fail to adopt intelligent, AI-driven approaches to data quality will increasingly find themselves at a severe competitive disadvantage. They will grapple with prolonged development cycles for AI models, suffer from unreliable insights, face heightened regulatory risks, and struggle to build customer trust. The future belongs to those who embrace proactive data governance, leveraging AI to not only detect and resolve data issues but to prevent them from occurring in the first place.

Therefore, World2Data.com strongly recommends that enterprises prioritize investment in Next-Gen AI Data Quality platforms. This involves not just technological adoption but also a cultural shift towards intelligent data stewardship, integrating these tools deeply into MLOps pipelines and data governance frameworks. The immediate actionable recommendation is to pilot these solutions on critical datasets, focusing on use cases where data quality directly impacts business KPIs, such as customer churn prediction, fraud detection, or supply chain optimization. By doing so, businesses can unlock unparalleled accuracy, efficiency, and confidence in their data assets, paving the way for sustained innovation and market leadership in the age of AI.