How AI Accelerates Data Ingestion: Unlocking Real-Time Insights

The explosion of data from myriad sources presents both immense opportunities and significant challenges for modern enterprises. Traditional data ingestion methods often falter under the volume, velocity, and variety of this information, creating bottlenecks and delaying insights. This article examines how artificial intelligence (AI) is changing data ingestion, bringing greater speed, accuracy, and efficiency to data pipelines. We explore the technical underpinnings, business advantages, and future outlook of AI data ingestion acceleration, and its role in data-driven decision-making.

Introduction: The Imperative for AI Data Ingestion Acceleration

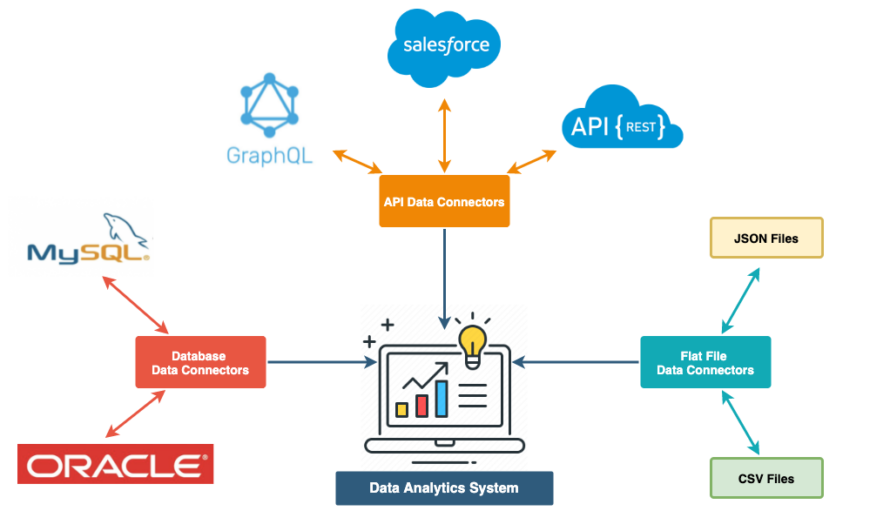

In today’s hyper-connected digital economy, data is the lifeblood of innovation and competitive advantage. Organizations are grappling with structured, semi-structured, and unstructured data arriving daily from IoT devices, social media, transactional systems, and more, often at petabyte scale. The ability to bring this data into analytical systems quickly and accurately is paramount. This is the role of AI data ingestion acceleration: an approach that uses machine learning (ML) and natural language processing (NLP) to automate, optimize, and streamline the journey of data from source to insight.

Modern AI-powered ingestion systems, usually delivered as part of a broader data integration platform, go well beyond simple ETL (Extract, Transform, Load). They incorporate machine learning models for pattern recognition and NLP for parsing unstructured data, making sense of even the most complex datasets. This article dissects the core technologies driving this shift, surveys a competitive landscape that includes Informatica (CLAIRE), Databricks (Auto Loader), Talend, and Matillion, and examines the impact of this evolution on data governance, operational efficiency, and business intelligence.

Core Breakdown: The Mechanics of AI-Driven Data Ingestion

AI data ingestion acceleration is built on intelligent automation that addresses common pain points in traditional data pipelines. At its heart, it aims to make the ingestion process adaptive and predictive: able to recognize what incoming data looks like, adjust when it changes, and flag problems before they reach downstream systems.

Automated Schema Inference and Data Mapping

Schema definition and mapping are among the most time-consuming and error-prone aspects of data ingestion. Using machine learning and statistical pattern analysis, AI-assisted tools can automatically infer or enrich schemas for diverse data sources, whether relational databases, JSON files, or Kafka streams. Automated schema inference analyzes data patterns, identifies data types, and suggests suitable schema structures. The same capability extends to intelligent data mapping, where algorithms propose correspondences between source and target schemas, reducing manual effort and the risk of human error. Because it removes manual guesswork and lets teams integrate new data sources more quickly, this is a critical component of AI data ingestion acceleration.
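As a rough illustration of both ideas, the Python sketch below infers a field type from sample records by widening types across observed values, then proposes source-to-target mappings by name similarity. It is a minimal sketch under stated assumptions: the field names are hypothetical, the type rules are deliberately coarse, and the standard-library `difflib.SequenceMatcher` stands in for the trained matching models that commercial platforms use.

```python
from difflib import SequenceMatcher


def infer_type(value):
    """Return a coarse type name for one raw string value."""
    if value is None or value == "":
        return "null"
    for caster, name in ((int, "integer"), (float, "double")):
        try:
            caster(value)
            return name
        except ValueError:
            pass
    if value.lower() in ("true", "false"):
        return "boolean"
    return "string"


def widen(current, new):
    """Combine two observed types into the narrowest type that fits both."""
    if current == new or new == "null":
        return current
    if current == "null":
        return new
    if {current, new} == {"integer", "double"}:
        return "double"
    return "string"  # incompatible types fall back to string


def infer_schema(records):
    """Infer a field -> type mapping from a sample of dict records."""
    schema = {}
    for record in records:
        for field, value in record.items():
            schema[field] = widen(schema.get(field, "null"), infer_type(value))
    return schema


def propose_mapping(source_fields, target_fields, threshold=0.6):
    """Suggest a target field for each source field by name similarity."""
    def norm(name):
        return name.lower().replace("_", "")

    mapping = {}
    for src in source_fields:
        best, best_score = None, 0.0
        for tgt in target_fields:
            score = SequenceMatcher(None, norm(src), norm(tgt)).ratio()
            if score > best_score:
                best, best_score = tgt, score
        if best_score >= threshold:  # below threshold: leave for a human
            mapping[src] = (best, round(best_score, 2))
    return mapping


sample = [
    {"cust_id": "42", "amount": "19.99", "active": "true"},
    {"cust_id": "43", "amount": "5", "active": ""},
]
schema = infer_schema(sample)
print(schema)
print(propose_mapping(schema, ["customer_id", "order_amount", "is_active"]))
```

Returning a score alongside each suggestion reflects how such tools are typically used: low-confidence matches are dropped or surfaced for review rather than applied silently.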

NLP for Unstructured Data Parsing and Enrichment

A large and growing share of modern data exists in unstructured formats: text documents, emails, social media posts, log files, and more. Traditional ingestion tools struggle with this complexity. NLP is a core technology here, enabling an AI data platform to parse, understand, and extract meaningful entities, sentiments, and topics from unstructured text. This not only makes previously inaccessible data ingestible but also enriches it, turning raw text into structured features ready for analysis. For example, NLP can automatically identify product names, customer feedback categories, or critical events within log files, making this data actionable.
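The following sketch shows the shape of that transformation: free text goes in, a structured record comes out. To stay self-contained it uses a hypothetical product list and keyword lexicon instead of a trained NLP model; a production pipeline would typically rely on named-entity recognition and text-classification models, so treat this as an illustration of the output format, not of the accuracy real NLP achieves.

```python
import re
from dataclasses import dataclass, field

# Hypothetical reference data: a real pipeline would rely on trained NER and
# text-classification models rather than hand-written lists like these.
PRODUCTS = ("widget pro", "widget mini")
CATEGORY_KEYWORDS = {
    "billing": {"charged", "invoice", "price", "refund"},
    "quality": {"broken", "cracked", "defective", "faulty"},
    "shipping": {"arrived", "delivery", "late", "shipping"},
}


@dataclass
class FeedbackRecord:
    """Structured features extracted from one piece of free text."""
    text: str
    products: list = field(default_factory=list)
    categories: list = field(default_factory=list)


def parse_feedback(text):
    """Turn raw feedback text into a FeedbackRecord via keyword matching."""
    lowered = text.lower()
    tokens = set(re.findall(r"[a-z]+", lowered))  # crude word tokenizer
    products = [p for p in PRODUCTS if p in lowered]
    categories = sorted(
        name for name, words in CATEGORY_KEYWORDS.items() if tokens & words
    )
    return FeedbackRecord(text=text, products=products, categories=categories)


record = parse_feedback("My Widget Pro arrived late and the screen was cracked.")
print(record.products, record.categories)
```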

Proactive Anomaly Detection and Data Quality Assurance

Data quality is essential for reliable AI/ML models and accurate business insights. AI-driven ingestion systems use continuously learning models to monitor incoming data streams for anomalies and inconsistencies. These models establish baselines, detect outliers, and flag potential data quality issues in real time. For instance, a sudden spike in a field’s values or a deviation from historical patterns can be identified immediately, preventing corrupted data from polluting downstream systems. This proactive approach protects data integrity from the very start of the pipeline, which is vital for keeping data trustworthy for AI initiatives.
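A common baseline for this kind of monitoring is a rolling z-score: keep a window of recent values and flag any new value that lies more than a chosen number of standard deviations from the window’s mean. The sketch below assumes a single numeric field; the window size, threshold, and warm-up length are illustrative defaults, not recommendations, and commercial tools may use considerably more sophisticated models.

```python
from collections import deque
from statistics import mean, stdev


class RollingZScoreDetector:
    """Flag values that deviate strongly from a rolling baseline."""

    def __init__(self, window=50, threshold=3.0, min_history=10):
        self.history = deque(maxlen=window)        # most recent accepted values
        self.threshold = threshold                 # allowed deviation in std devs
        self.min_history = max(min_history, 2)     # stdev needs at least two points

    def check(self, value):
        """Return True if value is anomalous relative to the baseline."""
        is_anomaly = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma == 0:
                is_anomaly = value != mu  # constant baseline: any change stands out
            else:
                is_anomaly = abs(value - mu) / sigma > self.threshold
        if not is_anomaly:
            self.history.append(value)  # only normal values update the baseline
        return is_anomaly


detector = RollingZScoreDetector(window=20, threshold=3.0, min_history=5)
stream = [10, 11, 9, 10, 12, 10, 11, 9, 10, 11, 50, 10.5]
flags = [detector.check(v) for v in stream]
print([v for v, bad in zip(stream, flags) if bad])
```

Only values that pass the check are added to the baseline, so a single spike cannot drag the mean toward itself. The trade-off is that a genuine, lasting shift in the data would keep being flagged until the baseline is reset or retrained, which is a form of data drift.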

Automated PII Detection and Data Classification

Data governance and compliance, especially under regulations such as GDPR and CCPA, are non-negotiable. AI data ingestion acceleration therefore includes automated detection of PII (Personally Identifiable Information) and automated data classification. As data streams into the system, ML models scan for sensitive information so that PII can be masked, anonymized, or routed according to corporate policies and regulatory requirements. This governance capability streamlines compliance and limits how much sensitive data is exposed if a breach occurs, adding an essential layer of security and trust to the ingestion process.
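Pattern matching is the simplest building block for this kind of scanning, and many tools combine it with ML classifiers. The sketch below detects a few PII types with regular expressions, masks them, and routes each record by its classification. The patterns, labels, and the `restricted`/`general` zone names are hypothetical and far from exhaustive (the phone pattern, for example, only covers US-style numbers).

```python
import re

# Hypothetical, deliberately small pattern set. Real classifiers add ML models,
# checksums (e.g. Luhn for card numbers) and surrounding context to reduce
# false positives and to catch free-text PII such as names.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def classify_and_mask(text):
    """Replace each detected PII span with its label; return text and labels."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            found.append(label)
    return text, sorted(found)


def route(text):
    """Send records containing PII to a restricted zone (hypothetical names)."""
    masked, labels = classify_and_mask(text)
    zone = "restricted" if labels else "general"
    return zone, masked, labels


print(route("Contact jane.doe@example.com or 555-123-4567, SSN 123-45-6789."))
```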

Challenges and Barriers to Adoption

Despite its benefits, adopting AI data ingestion acceleration comes with its own challenges. One significant barrier is the complexity of integrating AI models into existing legacy data infrastructure: many organizations rely on deeply entrenched, often disparate systems that were never designed for AI-driven automation. Another challenge is keeping the models used for schema inference and anomaly detection accurate over time. Data drift, where the characteristics of incoming data change, can degrade model performance unless it is continuously monitored and the models are retrained.

The initial investment in specialized AI talent and robust infrastructure can also be substantial. Organizations must manage MLOps complexity as well: governing the lifecycle of these AI models within the ingestion pipeline, from development and deployment to monitoring and maintenance, which requires new skill sets and operational frameworks. Finally, data security and privacy concerns, particularly around automated PII detection, demand rigorous oversight and robust access controls.
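One widely used way to monitor for data drift is the Population Stability Index (PSI), which compares the distribution of a field in new data against a baseline sample: PSI = sum over bins i of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the shares of baseline and new values falling in bin i. The sketch below is an assumption-laden illustration, not any vendor’s method: it uses equal-width bins over the baseline’s range for simplicity (quantile bins are also common) and floors empty bins at a small epsilon so the logarithm stays finite.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` against the `expected` baseline."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp outliers into edge bins
        # Floor empty bins at eps so ln(a_i / e_i) stays finite.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [float(v) for v in range(100)]
shifted = [v + 50 for v in baseline]
print(round(psi(baseline, baseline), 4), round(psi(baseline, shifted), 2))
```

A frequently quoted rule of thumb treats PSI below about 0.1 as stable and above about 0.25 as significant drift, at which point retraining the affected model would be worth considering; the right thresholds depend on the data.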

Business Value and ROI of AI Data Ingestion Acceleration

The return on investment (ROI) from implementing AI in data ingestion processes is multi-faceted and significant.

First, it speeds up model deployment for AI/ML projects. Data preparation is often cited as consuming 70-80% of an AI project’s effort; by providing clean, validated, readily available data, AI-driven ingestion cuts that time drastically. This accelerates the entire machine learning lifecycle, from experimentation to production.

Second, AI improves data quality for AI and analytical work. Automated validation and anomaly detection lead to fewer errors and more reliable datasets, fostering greater trust in the insights and decisions derived from the data.

Third, operational efficiency is greatly enhanced. Automation reduces manual labor, allowing data engineers and analysts to focus on higher-value tasks rather than repetitive data preparation. This translates into significant cost savings and optimized resource utilization.

Finally, AI data ingestion acceleration enables real-time analytics and decision-making. Ingesting and preparing data at high velocity lets businesses react quickly to market changes, customer behavior, and operational events, providing a distinct competitive edge.

Comparative Insight: AI-Accelerated vs. Traditional Data Ingestion

To appreciate the impact of AI data ingestion acceleration, it helps to compare it with traditional approaches built around data lakes and data warehouses. Historically, data ingestion relied on rigid, rule-based ETL pipelines. These pipelines were typically hand-coded and required extensive manual effort for schema definition, data cleaning, and mapping. Any change in source data formats, or the addition of a new data source, meant significant re-engineering, leading to slow integration cycles and bottlenecks.

Traditional data ingestion, while robust for well-defined structured data, struggles with the volume and variety of modern data, especially semi-structured and unstructured formats. Data lakes attempted to solve this by ingesting raw data “as is” and deferring schema definition until the data is read (schema-on-read), but they still required significant manual effort for cataloging, discovery, and preparation before the data could be used for analytics or AI. Data warehouses, optimized for structured, curated data, were even more constrained because their predefined schemas are enforced at load time (schema-on-write), making real-time or flexible ingestion nearly impossible without extensive, time-consuming transformations.
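The difference between the two models fits in a few lines. In this hypothetical example, a warehouse table enforces a fixed schema at write time and rejects a record carrying an unplanned field, while a lake stores the raw JSON and applies whatever schema a query needs at read time. The table layout and field names are assumptions for illustration only.

```python
import json

# Hypothetical warehouse table definition: field name -> Python type.
WAREHOUSE_SCHEMA = {"order_id": int, "amount": float}


def write_to_warehouse(record, table):
    """Schema-on-write: reject any record that does not match the schema."""
    mismatched = set(record) ^ set(WAREHOUSE_SCHEMA)
    if mismatched:
        raise ValueError(f"schema mismatch on fields: {sorted(mismatched)}")
    # Cast each value to the declared column type before storing it.
    table.append({name: WAREHOUSE_SCHEMA[name](value) for name, value in record.items()})


def write_to_lake(record, store):
    """Schema-on-read: persist the raw payload untouched."""
    store.append(json.dumps(record))


def read_from_lake(store, fields):
    """Apply a schema at query time; missing fields come back as None."""
    rows = []
    for raw in store:
        doc = json.loads(raw)
        rows.append({name: doc.get(name) for name in fields})
    return rows


table, store = [], []
order = {"order_id": 1, "amount": 9.5, "coupon": "SPRING"}  # new, unplanned field

try:
    write_to_warehouse(order, table)
except ValueError as err:
    print("warehouse rejected:", err)

write_to_lake(order, store)
print(read_from_lake(store, ["order_id", "coupon"]))
```

The lake accepts the record without re-engineering, but the burden of knowing which fields exist and what they mean moves to whoever queries it, which is the manual cataloging and preparation effort described above.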

In contrast, AI data ingestion acceleration changes this paradigm. Instead of fixed rules, it employs adaptive machine learning models that learn from data patterns. Automated schema inference and intelligent data mapping significantly reduce manual intervention. NLP capabilities allow unstructured data, a major challenge for traditional systems, to be ingested and understood. Real-time anomaly detection replaces batch-based quality checks, stopping bad data before it enters the system. Products such as Informatica’s CLAIRE engine and Databricks’ Auto Loader exemplify this shift, offering a degree of automation, flexibility, and scalability that traditional rule-based ETL systems struggle to match. This makes AI-driven ingestion not just an incremental improvement but a fundamental redesign of how data pipelines operate, aligning them with the demands of modern real-time analytics and AI applications.

World2Data Verdict: The Unstoppable Momentum of AI in Data Ingestion

The era of manual, labor-intensive data ingestion is rapidly drawing to a close. World2Data.com asserts that AI data ingestion acceleration is not merely an evolutionary upgrade but a foundational shift indispensable for any organization aiming for true data-driven agility. The ability to intelligently automate schema inference, parse complex unstructured data with NLP, proactively detect anomalies, and ensure robust data governance through automated PII detection and classification directly translates into faster insights, superior data quality, and substantial operational efficiencies. As data volumes continue to swell and real-time demands intensify, embracing AI in the initial stages of the data pipeline is no longer optional but a strategic imperative. We predict that future data platforms will be inherently intelligent, with AI deeply embedded across all ingestion layers, moving beyond just acceleration to predictive self-optimization. Organizations failing to adopt these AI-powered capabilities risk being overwhelmed by data complexity and losing their competitive edge in an increasingly data-centric world.