Data Integration Strategies for a Unified Data Ecosystem

In a hyper-connected, data-driven world, robust Data Integration strategies are no longer optional for organizations striving for agility and insight. Effective Data Integration turns raw, disparate information from diverse sources into coherent, actionable intelligence, providing a competitive edge. This article covers the fundamental approaches, architectural patterns, and tools that help businesses build a unified data ecosystem, paving the way for better decision-making and sustainable innovation.

Understanding the Imperative of Data Integration

The modern enterprise generates and consumes data at an unprecedented rate across numerous applications, legacy systems, cloud platforms, and external sources. This proliferation creates the challenge of disparate data sources: fragmented views, inconsistent information, and, ultimately, hindered decision-making. Data silos, a common byproduct of unmanaged growth, prevent a holistic understanding of business operations, customer behavior, and market trends. The overarching objective of effective Data Integration is to overcome these hurdles by establishing a Single Source of Truth. Its benefits are substantial: enhanced data accuracy, consistency across all departments, streamlined reporting, and improved collaboration. This foundational consistency is crucial not just for operational efficiency but also for advanced analytics, machine learning initiatives, and regulatory compliance.

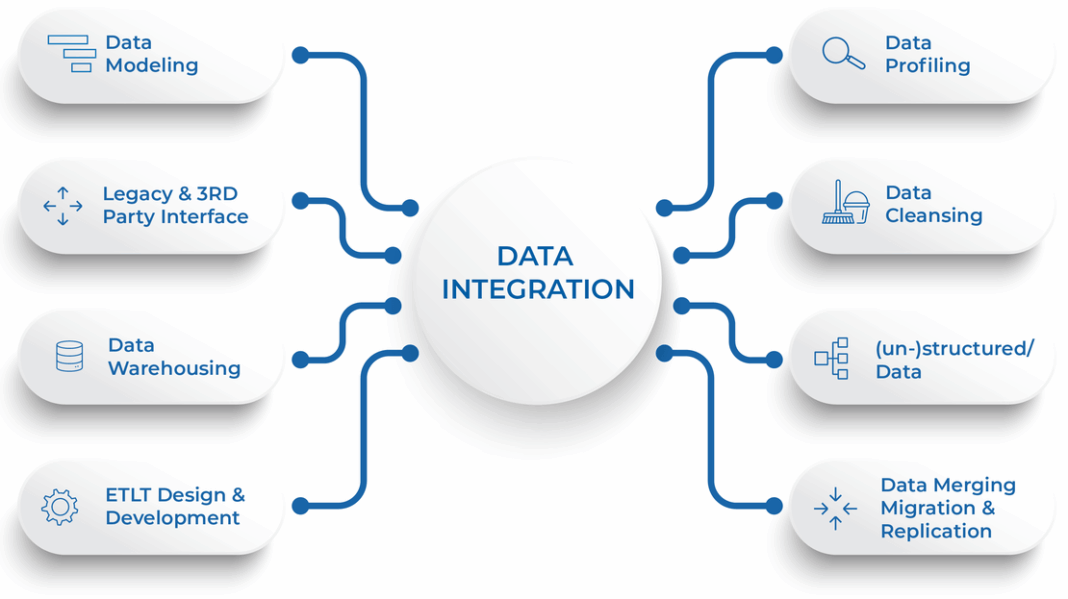

Core Breakdown: Architecting a Seamless Data Flow

At the heart of any successful data strategy lies a well-conceived Data Integration architecture. This involves selecting the right approaches and technologies to move, transform, and consolidate data effectively. Several platform categories and core technologies define this landscape:

Key Data Integration Approaches and Technologies

- Batch Integration for Scheduled Tasks: This traditional method involves moving large volumes of data at specific, predetermined intervals. Ideal for routine reporting, data warehousing, and non-time-sensitive analytics, it relies on processes like nightly ETL jobs.

- Real-time Integration for Immediate Needs: As demand for instant insights grows, real-time integration has become critical. This approach ensures data is available as soon as it’s generated, empowering instant decision-making and rapid responses. Use cases include fraud detection, real-time dashboards, and personalized customer experiences.

- Stream Processing for Continuous Flows: A specialized form of real-time integration, stream processing handles continuous streams of data from sources like IoT devices, clickstreams, and financial transactions. Technologies like Apache Kafka and Apache Flink are central here, enabling real-time analytics and event-driven architectures.

- ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) Platforms for Transformation: These are foundational for data warehousing and analytics.

  - ETL (Extract, Transform, Load): Data is extracted from source systems, transformed in a staging area (e.g., cleaned, aggregated, standardized), and then loaded into the target system.

  - ELT (Extract, Load, Transform): Data is extracted, loaded directly into the target (often a data lake or modern data warehouse with strong processing capabilities), and then transformed using the target system’s power. ELT is favored in cloud environments for its flexibility and scalability.

- iPaaS (Integration Platform as a Service): Cloud-native platforms that provide a suite of services for developing, executing, and governing integration flows between disparate applications, both cloud-based and on-premises. iPaaS solutions like MuleSoft offer pre-built connectors, low-code/no-code interfaces, and robust monitoring capabilities, enabling efficient API-led integration.

- Data Virtualization Solutions: These technologies create a virtual layer that provides a unified, real-time view of data from multiple sources without physically moving or replicating the data. Denodo is a prominent player in this space, offering agility, reducing storage costs, and simplifying data access.

- Change Data Capture (CDC) Solutions: CDC efficiently identifies and captures only the data that has changed in source systems, minimizing the load on networks and databases. This is crucial for enabling near real-time synchronization and reducing processing overhead for incremental updates in large-scale data environments.

- Master Data Management (MDM) Systems: Master Data Management (MDM) focuses on creating a single, consistent, and accurate view of an organization’s critical business data (e.g., customers, products, locations) across all systems. This ensures data quality and consistency at a fundamental level, vital for effective Data Integration and downstream analytics.
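To make the ETL and ELT distinction above concrete, the following is a minimal sketch using Python's built-in `sqlite3` module as a stand-in for a real warehouse. The sample rows, table names (`orders_etl`, `orders_raw`, `orders_elt`), and the `normalize` helper are hypothetical and exist only for illustration. The point is where the transformation runs: in application code before loading (ETL), or in the target's SQL engine after loading (ELT).

```python
import sqlite3

# Hypothetical raw records as they might arrive from a source system.
RAW_ORDERS = [
    ("1001", " Alice ", "19.99"),
    ("1002", "BOB", "5.00"),
    ("1003", "alice", "30.01"),
]


def normalize(name: str) -> str:
    """Standardize a customer name: trim whitespace and lowercase."""
    return name.strip().lower()


def run_etl(conn: sqlite3.Connection) -> None:
    """ETL: transform in application code first, then load the clean rows."""
    conn.execute(
        "CREATE TABLE orders_etl (order_id INTEGER, customer TEXT, amount REAL)"
    )
    cleaned = [
        (int(order_id), normalize(name), float(amount))
        for order_id, name, amount in RAW_ORDERS
    ]
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?, ?)", cleaned)


def run_elt(conn: sqlite3.Connection) -> None:
    """ELT: load raw rows unchanged, then transform inside the target with SQL."""
    conn.execute(
        "CREATE TABLE orders_raw (order_id TEXT, customer TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO orders_raw VALUES (?, ?, ?)", RAW_ORDERS)
    conn.execute(
        """
        CREATE TABLE orders_elt AS
        SELECT CAST(order_id AS INTEGER) AS order_id,
               LOWER(TRIM(customer))     AS customer,
               CAST(amount AS REAL)      AS amount
        FROM orders_raw
        """
    )


def totals(conn: sqlite3.Connection, table: str) -> list:
    """Total spend per customer, used to compare both pipelines."""
    query = (
        f"SELECT customer, ROUND(SUM(amount), 2) FROM {table} "
        "GROUP BY customer ORDER BY customer"
    )
    return conn.execute(query).fetchall()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    run_etl(connection)
    run_elt(connection)
    print(totals(connection, "orders_etl"))
    print(totals(connection, "orders_elt"))
```

Both paths end with the same cleaned data. One practical difference in this sketch is that the ELT path keeps `orders_raw` in the target, so the transformation can be revised and re-run later without going back to the source.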

Modern Architectural Paradigms: Data Fabric and Data Mesh

Beyond traditional integration tools, modern paradigms are reshaping how organizations think about their data ecosystems, often leveraging cloud-native solutions:

- Data Fabric: An architectural concept that provides a single, unified, and intelligent layer over disparate data sources, using AI and machine learning to automate data discovery, mapping, integration, transformation, and governance. It aims to deliver a consistent user experience for data access and utilization across hybrid and multi-cloud environments.

- Data Mesh: A decentralized data architecture approach that treats data as a product. It organizes data by business domains, each owning and serving its data products. This promotes domain autonomy, data discoverability, and data quality through a self-service paradigm, reducing central bottlenecks and improving scalability.
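The "data as a product" idea in Data Mesh can be illustrated with a small sketch: each domain publishes a product with an explicit schema, and consumers discover it through a shared catalog. The class names (`DataProduct`, `Catalog`), the fields, and the example product are all hypothetical; real implementations typically rely on a dedicated catalog or contract tool rather than hand-written classes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataProduct:
    """A domain-owned data product with a published schema (hypothetical shape)."""

    name: str
    owner_domain: str
    schema: dict  # column name -> expected Python type

    def validate(self, record: dict) -> list:
        """Return the contract violations for one record (empty list if valid)."""
        problems = []
        for column, expected_type in self.schema.items():
            if column not in record:
                problems.append(f"missing column: {column}")
            elif not isinstance(record[column], expected_type):
                problems.append(f"{column}: expected {expected_type.__name__}")
        return problems


class Catalog:
    """A minimal self-service registry so consumers can discover products."""

    def __init__(self):
        self._products = {}

    def register(self, product: DataProduct) -> None:
        self._products[product.name] = product

    def find_by_domain(self, domain: str) -> list:
        """List the names of all products owned by the given domain."""
        return sorted(
            p.name for p in self._products.values() if p.owner_domain == domain
        )


if __name__ == "__main__":
    orders = DataProduct(
        name="orders_daily",
        owner_domain="sales",
        schema={"order_id": int, "amount": float},
    )
    catalog = Catalog()
    catalog.register(orders)
    print(catalog.find_by_domain("sales"))
    print(orders.validate({"order_id": "1"}))
```

The design choice worth noting is that the schema lives with the owning domain, so a consumer can check a record against the contract without asking a central team.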

Challenges and Barriers to Adoption in Data Integration

Despite its critical importance, implementing effective Data Integration strategies is fraught with challenges:

- Data Quality Management: Inconsistent, inaccurate, or incomplete data is a pervasive issue. Without rigorous Data Quality Management processes, integrated data can become unreliable, leading to flawed insights and decisions, especially for AI/ML initiatives.

- Data Governance and Compliance: Managing data across diverse systems requires strong governance capabilities, including comprehensive metadata management, an intuitive data catalog, and granular role-based access control. Ensuring compliance with regulations like GDPR, CCPA, or HIPAA adds layers of complexity and risk.

- Scalability and Performance: As data volumes grow, driven by IoT and transactional systems, maintaining the performance and throughput of integration pipelines becomes a significant hurdle. Solutions must be designed to scale, often leveraging cloud-native architectures and distributed processing.

- Complexity of Diverse Sources: Integrating data from legacy systems, modern APIs, IoT devices, streaming platforms, and external data providers demands specialized connectors, intricate transformation logic, and robust error handling capabilities.

- Skill Gap: Implementing and managing sophisticated data integration solutions requires specialized skills in data engineering, architecture, and governance, which can be scarce within many organizations.

- Security Concerns: Moving sensitive data across various systems and platforms introduces potential security vulnerabilities, necessitating robust encryption, access controls, and auditing throughout the integration lifecycle.
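As one illustration of the data quality challenge listed above, a pipeline can measure completeness and uniqueness before data reaches downstream consumers. The sketch below is a simplified, assumed approach: the function names, the field names in the usage example, and the 10% threshold are hypothetical, and production systems usually use a dedicated validation framework.

```python
def check_quality(records, required, unique_key):
    """Count incomplete and duplicate records in a batch.

    A record is incomplete if any required field is missing, None, or "".
    A record is a duplicate if its unique_key value was already seen.
    """
    seen = set()
    report = {"total": 0, "incomplete": 0, "duplicates": 0}
    for record in records:
        report["total"] += 1
        if any(record.get(field) in (None, "") for field in required):
            report["incomplete"] += 1
        key = record.get(unique_key)
        if key is not None:
            if key in seen:
                report["duplicates"] += 1
            else:
                seen.add(key)
    return report


def passes_gate(report, max_bad_ratio=0.1):
    """Decide whether a batch is clean enough to load (threshold is an assumption)."""
    bad = report["incomplete"] + report["duplicates"]
    return report["total"] > 0 and bad / report["total"] <= max_bad_ratio


if __name__ == "__main__":
    batch = [
        {"id": 1, "email": "a@example.com"},
        {"id": 2, "email": ""},
        {"id": 1, "email": "a@example.com"},
        {"id": 3, "email": "c@example.com"},
    ]
    quality = check_quality(batch, required=["id", "email"], unique_key="id")
    print(quality, passes_gate(quality))
```

Running a gate like this before the load step keeps unreliable batches out of the integrated store instead of discovering the problem in a report or a model later.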

Business Value and ROI of Unified Data Ecosystems

Overcoming these challenges yields substantial returns, making Data Integration a high-ROI investment:

- Faster Model Deployment and Enhanced Analytics: A unified data ecosystem provides clean, accessible data, significantly accelerating the development and deployment of analytical models, including those for AI and ML. This enables quicker time-to-insight and faster response to market dynamics.

- Improved Data Quality for AI: High-quality, integrated data is the lifeblood of effective AI and Machine Learning models. Better data directly translates to more accurate predictions, intelligent automation, and reduced model drift. Capabilities like Predictive Data Quality enhance this further.

- Operational Efficiency and Cost Reduction: Eliminating manual data handling, reducing data reconciliation efforts, and automating data pipelines lead to significant operational efficiencies and cost savings, freeing up resources for innovation.

- Agility and Innovation: A holistic view of data empowers businesses to respond quickly to market changes, identify new opportunities, and innovate with confidence, fostering a culture of continuous improvement.

- Better Customer Experiences: By integrating customer data from all touchpoints, organizations can create a 360-degree view, leading to highly personalized services, proactive support, and improved customer satisfaction and retention.

- Regulatory Compliance: Robust integration, coupled with strong data governance features, simplifies compliance efforts by providing a clear lineage of data and controlled access, reducing legal and reputational risks.

Comparative Insight: Modern vs. Traditional Data Integration

Traditionally, Data Integration often involved point-to-point connections, custom scripts, and monolithic ETL jobs feeding into a centralized data warehouse or data lake. While effective for structured data and batch processing, these traditional approaches faced increasing strain with the advent of big data, diverse data types (including unstructured and semi-structured), and real-time demands. The reliance on a central team for all data transformations and movements often led to bottlenecks, making these systems brittle, difficult to scale, and slow to adapt to new business requirements.

Modern Data Integration strategies, particularly those centered around Data Fabric and Data Mesh, offer a stark contrast. Instead of a centralized, often brittle hub-and-spoke model, these paradigms advocate for more distributed, agile, and self-service approaches. Data Fabric, for instance, uses intelligent automation for Automated Data Discovery and Mapping, streamlining the process of understanding and connecting disparate datasets across hybrid and multi-cloud environments. It leverages AI-driven capabilities for Predictive Data Quality, anticipating and mitigating data issues before they impact downstream systems. It also facilitates Smart Data Transformation and Preparation, reducing manual effort and accelerating data readiness for analytics. Data Mesh, on the other hand, revolutionizes ownership by empowering domain teams to manage their data as “products,” inherently improving data quality, discoverability, and accessibility through domain expertise and a focus on data as a service.

Furthermore, modern integration relies heavily on API-led integration and event-driven architecture. APIs provide a standardized, reusable, and secure way for applications and services to communicate, moving away from brittle direct database connections and fostering microservices architectures. Event-driven architecture lets systems react to events as they occur, which improves responsiveness and decouples services, in contrast to scheduled, batch-centric processes. These contemporary approaches, supported by advanced ETL/ELT platforms and capabilities like anomaly detection in data pipelines, allow for a much more flexible, scalable, and resilient data ecosystem capable of handling complex data landscapes and supporting advanced analytics, AI, and machine learning at scale.
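The decoupling that event-driven architecture provides can be sketched with a tiny in-process publish/subscribe bus. This is a stand-in for a real message broker, not a production design; the `EventBus` class, the `order.created` topic, the handlers, and the fraud threshold are all hypothetical names chosen for illustration.

```python
from collections import defaultdict


class EventBus:
    """In-process publish/subscribe bus (a stand-in for a real broker)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, topic, handler):
        """Register a callable to be invoked for every event on the topic."""
        self._handlers[topic].append(handler)

    def publish(self, topic, event):
        """Deliver the event to every subscriber; return how many were notified."""
        handlers = self._handlers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


def run_demo():
    """Wire two independent consumers to one topic and publish two events."""
    bus = EventBus()
    dashboard_totals = defaultdict(float)
    fraud_alerts = []

    def update_dashboard(event):
        dashboard_totals[event["customer"]] += event["amount"]

    def flag_large_order(event):
        if event["amount"] > 1000:  # hypothetical fraud threshold
            fraud_alerts.append(event["order_id"])

    bus.subscribe("order.created", update_dashboard)
    bus.subscribe("order.created", flag_large_order)

    bus.publish("order.created", {"order_id": 1, "customer": "acme", "amount": 250.0})
    bus.publish("order.created", {"order_id": 2, "customer": "acme", "amount": 5000.0})
    return dict(dashboard_totals), fraud_alerts


if __name__ == "__main__":
    print(run_demo())
```

The publisher knows only the topic name, so a new consumer can be added with one `subscribe` call and no change to the producing system.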

World2Data Verdict: Embracing a Unified, Intelligent Future

The trajectory of data management clearly points towards increasingly sophisticated and automated Data Integration strategies. Organizations must move beyond ad-hoc connections and embrace holistic architectures like Data Fabric or Data Mesh, recognizing that data is a strategic asset requiring consistent governance and seamless flow. The future lies in intelligent, self-healing data pipelines that leverage AI/ML for automated data quality, anomaly detection, and smart transformations, proactively ensuring data reliability and availability. Companies should invest in platforms that offer robust metadata management and data catalog features, fostering data discoverability and trust.

Furthermore, evaluating solutions from established vendors such as Informatica, Talend, MuleSoft, Fivetran, Stitch, Denodo, and IBM DataStage is crucial to find the best fit for specific enterprise needs, balancing flexibility, scalability, and cost-effectiveness. The actionable recommendation from World2Data is to prioritize a proactive, rather than reactive, approach to Data Integration, focusing on building an adaptive, intelligent, and governed data ecosystem that serves as the bedrock for future digital initiatives. This forward-thinking stance will not only overcome current data silos but also position the organization to harness emerging technologies and maintain a competitive edge through data-driven excellence.