Elevating Enterprise Data: A Deep Dive into Achieving Superior Data Quality

In the fiercely competitive landscape of modern business, the adage “garbage in, garbage out” has never been more relevant. Achieving high Data Quality across your entire enterprise is not merely a technical aspiration but a fundamental strategic imperative. It underpins every critical business decision, from market analysis and product development to customer engagement and regulatory compliance. This comprehensive guide will explore the intricacies of improving Data Quality, leveraging advanced platforms, and fostering a culture of data stewardship to unlock an organization’s full potential.

The Imperative of Pristine Data: Setting the Stage for Data Quality

The reliability of your business decisions depends on the quality of the data behind them. Ensuring high standards across all operations is not merely an IT task but a strategic priority that enables better analytics, regulatory compliance, and operational efficiency. As data volumes grow and sources proliferate, organizations find it increasingly hard to keep data accurate, consistent, and relevant. Poor Data Quality leads to flawed insights, wasted resources, missed opportunities, and financial losses. Understanding the foundational principles and adopting robust improvement strategies is therefore essential for any data-driven enterprise.

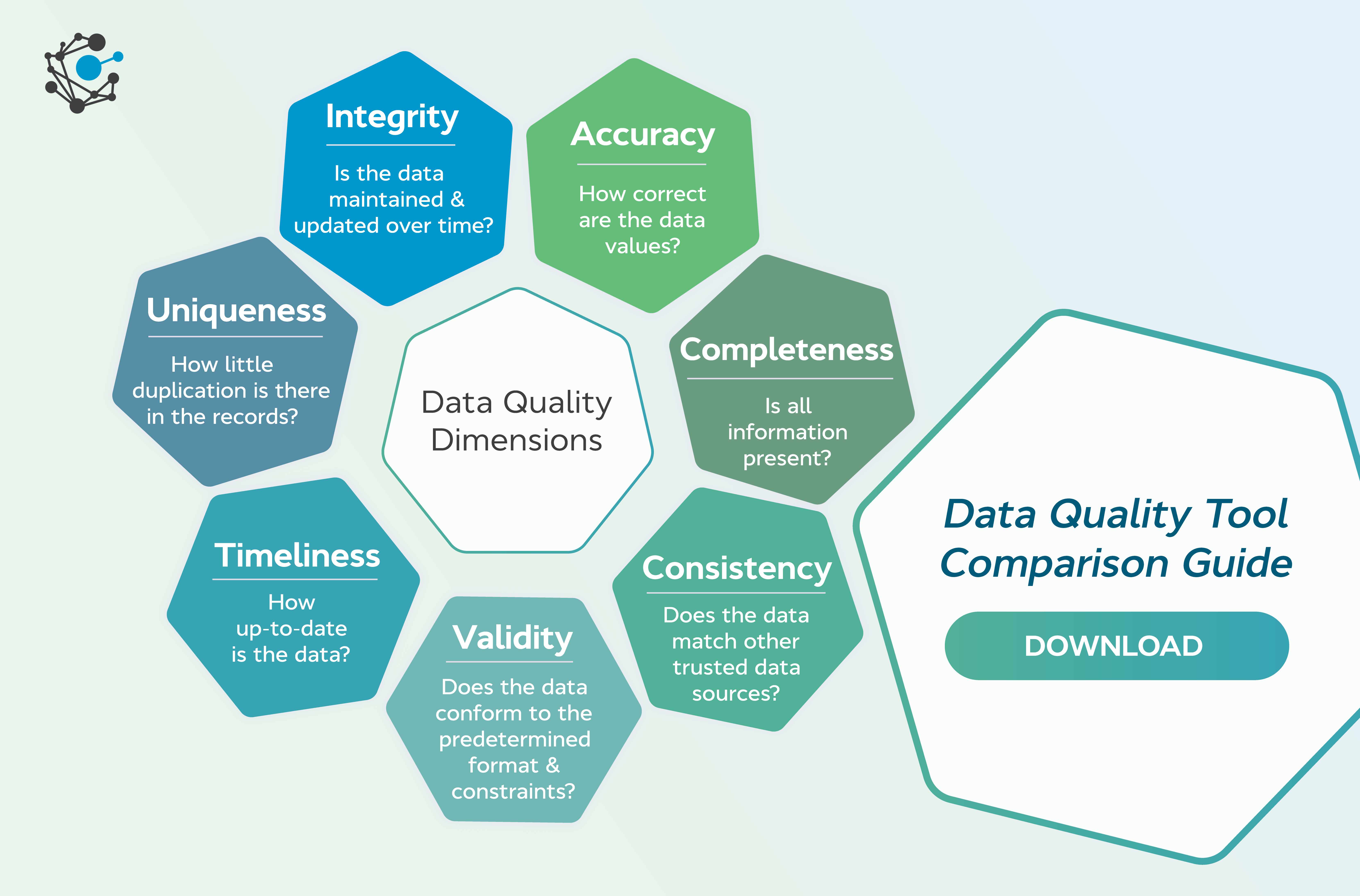

Good data is accurate, complete, consistent, timely, and relevant for its intended use. This definition moves beyond simple correctness to encompass the fitness of data for a specific purpose. Common Data Quality issues include duplicate records, inconsistent formatting, missing values, and outdated information, all of which can corrupt insights and erode trust in data-driven initiatives. Addressing these issues proactively is the core objective of any enterprise-wide Data Quality program.
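
The dimensions named above can be turned into simple, measurable ratios. The following is a minimal sketch using only the Python standard library; the record layout, the field names (`email`, `updated`), and the 365-day freshness window are assumptions made for illustration, not part of any particular platform.

```python
from datetime import date

# Hypothetical customer records; field names are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "updated": date(2024, 5, 1)},
    {"id": 2, "email": None, "updated": date(2024, 4, 20)},
    {"id": 3, "email": "ana@example.com", "updated": date(2021, 1, 15)},
    {"id": 4, "email": "li@example.com", "updated": date(2024, 5, 3)},
]

def completeness(rows, field):
    """Share of rows where the field is present and non-empty."""
    filled = sum(1 for r in rows if r.get(field) not in (None, ""))
    return filled / len(rows)

def duplicate_rate(rows, field):
    """Share of non-empty values that repeat an earlier value."""
    values = [r[field] for r in rows if r.get(field) not in (None, "")]
    return 1 - len(set(values)) / len(values)

def timeliness(rows, field, as_of, max_age_days):
    """Share of rows updated within max_age_days of the as_of date."""
    fresh = sum(1 for r in rows if (as_of - r[field]).days <= max_age_days)
    return fresh / len(rows)

as_of = date(2024, 5, 10)  # Fixed reference date so the result is reproducible.
scores = {
    "completeness": completeness(records, "email"),
    "duplicate_rate": duplicate_rate(records, "email"),
    "timeliness": timeliness(records, "updated", as_of, max_age_days=365),
}
print(scores)
```

Ratios like these are only a starting point: which fields matter, and how fresh is fresh enough, depends on the intended use of the data.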

Core Breakdown: Architecture, Technologies, and Value of Data Quality Platforms

Improving Data Quality across your entire enterprise necessitates a structured approach, often powered by specialized platforms. These platforms serve as the backbone for establishing, maintaining, and monitoring data integrity throughout its lifecycle. Let’s delve into the core components, underlying technologies, and the business value they deliver.

Platform Categories and Core Technologies

Modern Data Quality initiatives are typically supported by a suite of interconnected platform categories:

- Data Quality Platform: Dedicated solutions focused on profiling, cleansing, monitoring, and enriching data.

- Master Data Management (MDM): Systems designed to create and maintain a consistent, accurate, and single version of core business data (e.g., customers, products, locations) across the enterprise. MDM is crucial for eliminating duplicates and inconsistencies.

- Data Governance Platform: Provides frameworks and tools to define, implement, and enforce data policies, roles, responsibilities, and processes, ensuring data is managed consistently and ethically.

Underpinning these platforms are sophisticated core technologies and architectures:

- Rule-based Validation: Pre-defined or user-configured rules to check data against business logic and expected formats (e.g., email address format, valid date ranges).

- Data Profiling: Analyzing source data to understand its structure, content, and quality. This involves statistical analysis of data values, patterns, and relationships to identify anomalies and infer metadata.

- Matching and Merging Algorithms: Advanced algorithms to identify and link records that refer to the same entity but exist in different systems or formats (e.g., different spellings of a customer name). Once matched, these records can be merged into a golden record.

- Data Standardization Engines: Tools that transform data into a consistent format, correcting variations in spelling, capitalization, abbreviations, and address formats.

- Workflow Automation for Remediation: Automated processes to route identified data quality issues to data stewards or subject matter experts for review and correction, ensuring efficient resolution.

- Cloud-native or Hybrid Deployment Options: Modern platforms often leverage cloud scalability and elasticity, offering flexible deployment models to suit organizational infrastructure preferences.
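
Rule-based validation, the first technology in the list above, can be sketched as a set of named checks applied to each record. This is an illustrative example, not the rule engine of any specific product: the rule names, the record fields, and the simplified email pattern are all assumptions.

```python
import re
from datetime import date

# Deliberately simple pattern for illustration; not a full email validator.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Each rule takes a record and a reference date and returns True when the record passes.
def email_format(record, today):
    return bool(EMAIL_RE.match(record.get("email") or ""))

def signup_not_in_future(record, today):
    return record["signup"] <= today

def country_two_letter_code(record, today):
    code = record.get("country") or ""
    return len(code) == 2 and code.isalpha() and code.isupper()

RULES = [email_format, signup_not_in_future, country_two_letter_code]

def validate(record, today):
    """Return the names of all rules the record violates."""
    return [rule.__name__ for rule in RULES if not rule(record, today)]

today = date(2024, 5, 10)  # Fixed reference date so the example is reproducible.
good = {"email": "ana@example.com", "signup": date(2023, 3, 1), "country": "PT"}
bad = {"email": "ana[at]example.com", "signup": date(2030, 1, 1), "country": "Portugal"}

print(validate(good, today))
print(validate(bad, today))
```

Keeping each rule as a small named function makes violations easy to report and to route to a data steward, which is where workflow automation for remediation would pick up.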

Key Data Governance Features for Quality Assurance

Effective Data Quality is inextricably linked with robust data governance. Key features include:

- Data Profiling: The first step in understanding the health of your data (see the core technologies above).

- Data Validation: Enforcing rules to ensure new data entries or updates conform to established standards.

- Data Cleansing: The process of detecting and correcting (or removing) corrupt or inaccurate records from a record set, table, or database.

- Duplicate Detection: Identifying redundant records that represent the same real-world entity.

- Data Standardization: Transforming data into a uniform format, critical for data consistency across systems.

- Data Lineage Tracking: Providing an audit trail that shows where data originated, how it has been transformed, and where it has moved over time. This is vital for understanding data’s trustworthiness.

- Metadata Management Integration: Connecting Data Quality processes with metadata repositories to understand data context, definitions, and relationships.

- Role-Based Access Control (RBAC) for Data Quality Rules and Processes: Ensuring that only authorized personnel can define, modify, or execute Data Quality rules and workflows.
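
Standardization and duplicate detection often work together: records are first normalized, then compared. The sketch below uses `difflib.SequenceMatcher` from the Python standard library as a stand-in for the more sophisticated matching algorithms a commercial platform would provide. The abbreviation table, the record fields, and the 0.9 similarity threshold are assumptions chosen for the example.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical abbreviation table; a real deployment would use a much larger one.
ABBREVIATIONS = {"st": "street", "rd": "road", "inc": "incorporated"}

def standardize(text):
    """Lowercase, replace punctuation with spaces, and expand known abbreviations."""
    cleaned = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in text.lower())
    return " ".join(ABBREVIATIONS.get(tok, tok) for tok in cleaned.split())

def likely_duplicates(rows, threshold=0.9):
    """Return id pairs whose standardized name and address are at least threshold similar."""
    keyed = [(r["id"], standardize(r["name"] + " " + r["address"])) for r in rows]
    pairs = []
    for (id_a, key_a), (id_b, key_b) in combinations(keyed, 2):
        if SequenceMatcher(None, key_a, key_b).ratio() >= threshold:
            pairs.append((id_a, id_b))
    return pairs

customers = [
    {"id": 1, "name": "Acme Inc.", "address": "12 Main St"},
    {"id": 2, "name": "ACME Incorporated", "address": "12 Main Street"},
    {"id": 3, "name": "Globex Corp", "address": "99 Ocean Rd"},
]

print(likely_duplicates(customers))
```

Comparing every pair of records grows quadratically with the number of records, so this approach only suits small sets. Larger workloads typically group candidate records first (often called blocking) and compare only within each group.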

Primary AI/ML Integration for Intelligent Data Quality

Artificial Intelligence and Machine Learning are changing Data Quality management by adding automation and insight that rule-based tooling alone cannot provide:

- AI-driven Anomaly Detection for Data Errors: ML algorithms can learn normal data patterns and flag deviations that indicate potential errors or fraud, often catching issues that rule-based systems might miss.

- Machine Learning for Predictive Data Quality Issues: By analyzing historical data and system behaviors, ML can predict where and when Data Quality issues are likely to arise, enabling proactive intervention.

- Automated Data Classification: ML models can automatically categorize data, such as identifying PII (Personally Identifiable Information) or sensitive financial data, which is crucial for compliance and security.

- Intelligent Matching and Merging of Records: AI can enhance traditional matching algorithms by recognizing complex relationships and fuzzy matches, significantly improving the accuracy of master data creation.

- Natural Language Processing (NLP) for Unstructured Data Quality: NLP techniques can extract, understand, and standardize information from unstructured text fields, improving the quality of qualitative data.
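
To make the anomaly detection idea concrete, here is a small statistical sketch. It is not a machine learning model: it uses the modified z-score based on the median and the median absolute deviation (MAD), a classic robust outlier test, as a simple stand-in for "learning normal patterns and flagging deviations". The sample values and the 3.5 threshold are assumptions for illustration.

```python
from statistics import median

def mad_outliers(values, threshold=3.5):
    """Return indexes of values whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # No spread to measure against; nothing is flagged in this sketch.
    # 0.6745 scales the MAD so the score is comparable to a z-score for normal data.
    return [i for i, v in enumerate(values) if abs(0.6745 * (v - med) / mad) > threshold]

# Hypothetical order totals; the sixth value looks like a misplaced decimal point.
order_totals = [102.0, 98.5, 101.2, 99.9, 100.4, 9990.0, 97.8, 103.1]
flagged = mad_outliers(order_totals)
print(flagged)
```

Median-based statistics are used here because a single extreme value would inflate a mean and standard deviation enough to hide itself. A production system would more likely learn per-field or per-segment baselines rather than apply one global threshold.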

Challenges and Barriers to Adoption

Despite the clear benefits, improving Data Quality isn’t without its hurdles:

- Data Silos and Integration Complexity: Data often resides in disparate systems (CRMs, ERPs, legacy databases), each with its own format and standards, making a unified Data Quality view challenging.

- Lack of Clear Data Ownership and Accountability: Without defined roles, responsibility for data quality can become diffused, leading to neglect.

- Resistance to Change: Employees accustomed to certain data entry practices may resist new, stricter Data Quality protocols.

- Initial Investment and ROI Justification: Implementing comprehensive Data Quality platforms requires significant investment, and quantifying the exact ROI can sometimes be difficult in the short term.

- Evolving Data Landscape: New data sources, formats, and regulatory requirements constantly emerge, necessitating continuous adaptation of Data Quality strategies.

- Data Drift: Over time, the nature or context of data can change, rendering existing Data Quality rules or models less effective.
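
Data drift, the last challenge listed above, can be monitored by comparing the distribution of a field today against a stored baseline. One common measure is the Population Stability Index (PSI). The sketch below computes it for a categorical field; the field values, the flooring constant, and the 0.25 alert level (a commonly cited rule of thumb, not a standard) are assumptions.

```python
import math
from collections import Counter

def psi(baseline, current, floor=1e-6):
    """Population Stability Index between two lists of categorical values."""
    categories = set(baseline) | set(current)
    base_counts, cur_counts = Counter(baseline), Counter(current)
    total = 0.0
    for cat in categories:
        # Floor the proportions so a category missing on one side does not cause log(0).
        p = max(base_counts[cat] / len(baseline), floor)
        q = max(cur_counts[cat] / len(current), floor)
        total += (q - p) * math.log(q / p)
    return total

# Hypothetical payment-method values captured at two points in time.
baseline = ["card"] * 70 + ["bank"] * 25 + ["cash"] * 5
current_same = ["card"] * 70 + ["bank"] * 25 + ["cash"] * 5
current_shifted = ["card"] * 40 + ["bank"] * 20 + ["wallet"] * 40

print(psi(baseline, current_same))
print(psi(baseline, current_shifted) > 0.25)
```

A new category such as `wallet` appearing in the current data is exactly the kind of change that can silently invalidate existing validation rules, so a drift alert is a useful prompt to review them.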

Business Value and ROI

The return on investment for robust Data Quality initiatives is substantial and multifaceted:

- Improved Decision-Making: Reliable data leads to more accurate analytics, better strategic planning, and more effective operational decisions.

- Enhanced Customer Experience: Accurate customer data enables personalized marketing, efficient service, and fewer errors, fostering loyalty.

- Reduced Operational Costs: Eliminating duplicates, correcting errors, and streamlining data processes reduces rework, manual efforts, and wasted resources.

- Regulatory Compliance and Risk Mitigation: High Data Quality is essential for meeting compliance mandates (e.g., GDPR, CCPA, HIPAA) and for reducing the risk of inaccurate reporting and regulatory fines.

- Faster Model Deployment and Better AI Outcomes: For AI and ML projects, high-quality input data is non-negotiable. It leads to more accurate models, quicker deployment, and reliable predictive capabilities.

- Increased Revenue Opportunities: Better data can identify new market segments, improve cross-selling opportunities, and optimize pricing strategies.

Main Platforms and Alternatives

The market for Data Quality and governance platforms is robust, with several key players offering comprehensive solutions:

- Informatica Data Quality: A leader known for its comprehensive suite of data management tools, including profiling, cleansing, and monitoring.

- Talend Data Quality: Now part of Qlik; historically offered both open-source and commercial editions, with strong integration capabilities for various data sources.

- IBM InfoSphere QualityStage: Part of IBM’s InfoSphere Information Server, providing powerful data quality capabilities for large enterprises.

- Collibra Data Quality: Integrates data quality directly into its broader data governance platform, emphasizing collaboration and lineage.

- Ataccama ONE: A unified platform offering data quality, MDM, and data governance functionalities with strong AI integration.

- SAS Data Management: Known for its robust data integration and quality capabilities, particularly strong in statistical analysis.

- Syniti: Specializes in enterprise data management, with a focus on data quality, migration, and master data management for complex environments.

Comparative Insight: Modern Data Quality Platforms vs. Traditional Approaches

The shift towards dedicated Data Quality platforms represents a significant evolution from traditional, often fragmented, approaches. Historically, organizations might have relied on a mix of manual checks, custom scripts, point solutions, or ad-hoc departmental efforts to address Data Quality issues. While these methods could offer temporary fixes for specific problems, they lacked scalability, consistency, and enterprise-wide visibility.

Traditional methods often suffered from:

- Siloed Efforts: Data Quality initiatives were often confined to individual departments, leading to inconsistent standards and a lack of holistic insight.

- Manual and Error-Prone Processes: Relying on human intervention for data cleansing and validation is slow, expensive, and susceptible to errors, especially with large datasets.

- Lack of Centralized Governance: Without a unified framework, data ownership and accountability remained ambiguous, hindering effective remediation.

- Reactive, Not Proactive: Issues were typically addressed only after they caused problems, rather than being prevented or predicted.

- Limited Integration: Custom scripts were hard to maintain and difficult to integrate across diverse data ecosystems.

In contrast, modern Data Quality platforms offer a holistic, proactive, and automated solution. They provide a centralized environment for:

- Enterprise-Wide Consistency: Enforcing uniform Data Quality rules and standards across all systems and departments.

- Automation and Scalability: Leveraging advanced algorithms, AI, and workflow automation to process vast amounts of data efficiently and continuously.

- Proactive Monitoring and Predictive Analytics: Identifying potential issues before they impact business operations, often using AI/ML.

- Integrated Data Governance: Tightly coupling Data Quality with data lineage, metadata management, and role-based access controls for comprehensive data stewardship.

- Comprehensive Reporting and Dashboards: Providing clear visibility into Data Quality metrics, progress, and areas needing attention.
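
Proactive monitoring and reporting ultimately come down to comparing measured metrics against agreed thresholds. The following minimal sketch shows that comparison; the metric names, threshold values, and nightly snapshot are hypothetical, and a real platform would add scheduling, history, and alert routing on top.

```python
# Hypothetical thresholds: metric name -> (direction, limit).
# "min" means the value must not fall below the limit; "max" means it must not exceed it.
THRESHOLDS = {
    "completeness": ("min", 0.95),
    "duplicate_rate": ("max", 0.02),
    "timeliness": ("min", 0.90),
}

def evaluate(snapshot):
    """Return (metric, value, limit) tuples for every metric that breaches its threshold."""
    breaches = []
    for metric, (direction, limit) in THRESHOLDS.items():
        value = snapshot[metric]
        too_low = direction == "min" and value < limit
        too_high = direction == "max" and value > limit
        if too_low or too_high:
            breaches.append((metric, value, limit))
    return breaches

# Hypothetical metrics produced by a nightly profiling run.
nightly = {"completeness": 0.97, "duplicate_rate": 0.05, "timeliness": 0.88}
breaches = evaluate(nightly)
for metric, value, limit in breaches:
    print(f"ALERT {metric}: measured {value}, limit {limit}")
```

Keeping thresholds in one declarative table makes them easy to review with data owners, which supports the governance and accountability points made above.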

By moving from reactive, disparate manual efforts to integrated, automated Data Quality platforms, enterprises can turn their data landscape from a liability into a strategic asset and build trust in the data their decisions rely on.

World2Data Verdict: The Unstoppable March Towards Intelligent Data Quality

Achieving high Data Quality is not a one-time project but an ongoing commitment that strengthens an organization’s analytical capabilities and strategic foresight. World2Data.com asserts that the future of Data Quality lies in intelligent automation, deeply integrated with broader data governance and MDM strategies. Enterprises must move beyond rudimentary cleansing efforts and embrace AI-driven platforms that not only identify and remediate data errors but also predict potential issues and enforce quality standards at the point of data creation. Our recommendation is clear: invest in unified Data Quality platforms with robust AI/ML capabilities, integrate them tightly with your data governance frameworks, and cultivate an enterprise-wide culture of data stewardship. This integrated approach will not only reduce data headaches but also build competitive advantage by fostering trust in your most valuable asset: your data.