The Data Quality Layer: Blueprint for Ensuring Clean and Accurate Data in Modern Enterprises

In an era where data drives strategic decisions, from operational efficiency to new product innovation, the integrity of information is paramount. The Data Quality Layer is an indispensable component of a modern data architecture: the infrastructure responsible for keeping an organization’s most valuable asset clean, accurate, and reliable. Without a robust Data Quality Layer, analytical insights become speculative, machine learning models falter, and operational processes are prone to inefficiencies and costly errors.

Introduction: The Imperative for a Data Quality Layer

The journey towards data maturity often highlights a stark reality: raw data, regardless of its volume or velocity, is rarely perfect. It arrives riddled with inconsistencies, errors, redundancies, and incompleteness, originating from diverse sources and captured through myriad systems. This inherent messiness necessitates a dedicated mechanism to refine and prepare data before it can fuel informed decisions or power advanced analytics. This is precisely the role of the Data Quality Layer, acting as a sophisticated processing and enforcement hub within the broader data ecosystem.

Functioning as a crucial Data Integration Component, the Data Quality Layer is not merely a feature but a foundational discipline under the umbrella of Data Quality Management and Data Governance. Its primary objective is to transform raw, inconsistent, or unreliable data into a trustworthy asset that provides a solid foundation for all data-driven initiatives. By systematically identifying, assessing, and remediating data quality issues, it ensures that every piece of information adheres to predefined standards, making it fit for purpose. This proactive approach mitigates significant business risks, enhances regulatory compliance, and unlocks the true potential of an organization’s data assets.

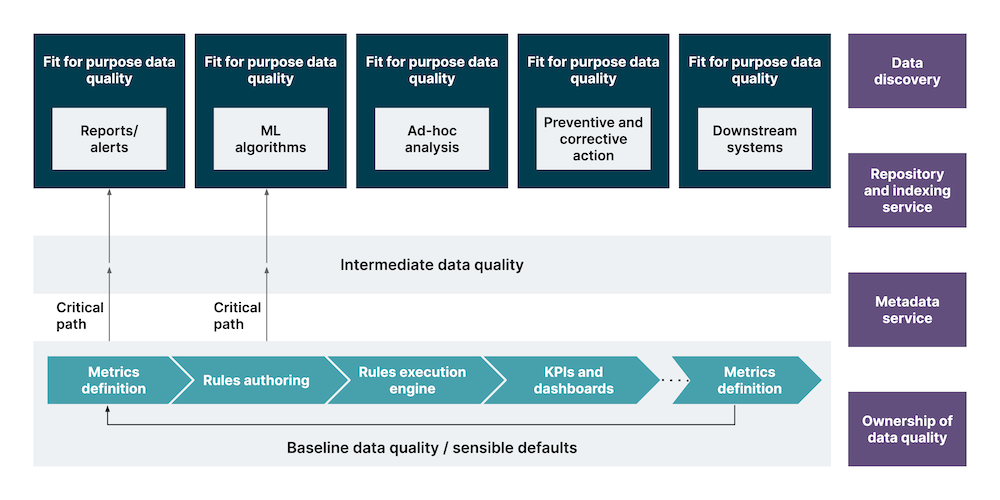

Core Breakdown: Architecture and Mechanisms of the Data Quality Layer

The efficacy of a Data Quality Layer stems from its multifaceted architecture, integrating various technologies and processes designed to uphold data integrity. At its heart, it leverages a combination of automated and human-guided interventions across the data lifecycle.

Key Components and Technologies

- Data Profiling: This initial step involves systematically examining the content, structure, and quality of data sources. Data Profiling tools analyze data attributes, identify patterns, detect anomalies, and uncover relationships, providing a comprehensive understanding of data characteristics and potential quality issues. This foundational analysis informs the creation of targeted quality rules.

- Rules-based Validation: Central to the Data Quality Layer is an engine for rules-based validation. This involves defining and applying a set of business rules and constraints to check data accuracy, completeness, consistency, and validity. Examples include checking for valid date formats, enforcing numerical ranges, verifying referential integrity, or confirming that values belong to a specific enumeration.

- Data Standardization: Raw data often lacks uniformity. Data Standardization processes transform data into a consistent format, ensuring that values are represented identically across different systems. This includes standardizing addresses, names, product codes, units of measure, and other critical identifiers, which is crucial for accurate aggregation and analysis.

- Data Cleansing: Once errors and inconsistencies are identified through profiling and validation, Data Cleansing tools come into play. This involves correcting, modifying, or removing erroneous or incomplete data. Cleansing operations can range from simple fixes like correcting spelling mistakes and resolving formatting issues to more complex tasks such as reconciling conflicting records or imputing missing values.

- Data Enrichment: Beyond mere cleaning, an effective Data Quality Layer can also perform Data Enrichment. This process involves augmenting existing records with valuable external data points, providing a more comprehensive view. For instance, customer records might be enriched with demographic information, geographical data, or firmographic details from third-party sources.

- Deduplication: A common challenge in data management is the presence of duplicate records, especially for entities like customers, products, or suppliers. The Data Quality Layer employs sophisticated algorithms for Deduplication, identifying and merging redundant entries to create a single, accurate “golden record.” This is critical for maintaining a unified view and preventing skewed analytics.

- Data Monitoring: Data quality is not a static state but a continuous process. Data Monitoring involves establishing ongoing surveillance of data quality metrics and trends. This proactive approach uses dashboards and alerts to notify data stewards when quality thresholds are breached or when new issues emerge, allowing for timely intervention.

- Metadata Management for Quality Rules: Effective data quality relies heavily on robust Metadata Management for quality rules. This involves documenting the definitions of data elements, the rules applied to them, the history of data quality issues, and the remediation steps taken. Metadata provides context and auditability, ensuring transparency and understanding of data quality processes.
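To make the components above more concrete, the following is a minimal sketch of how profiling, standardization, rules-based validation, and deduplication might fit together. Everything in it is an assumption for illustration: the sample records, the field names, the `COUNTRY_ALIASES` mapping, and the two rules are hypothetical, and the deduplication step is a simple exact-match pass that keeps the first record per key rather than the merge into a "golden record" that dedicated tools perform.

```python
import re

# Hypothetical sample records; field names are illustrative only.
records = [
    {"id": 1, "email": "Ana@Example.com ", "country": "us", "age": 34},
    {"id": 2, "email": "bob@example.com", "country": "USA", "age": -5},
    {"id": 3, "email": "ana@example.com", "country": "US", "age": 34},
    {"id": 4, "email": None, "country": "de", "age": 51},
]

COUNTRY_ALIASES = {"us": "US", "usa": "US", "de": "DE"}  # assumed mapping
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")     # deliberately loose


def profile(rows, field):
    """Profiling: count nulls and distinct non-null values for one field."""
    values = [r.get(field) for r in rows]
    non_null = [v for v in values if v is not None]
    return {"nulls": len(values) - len(non_null), "distinct": len(set(non_null))}


def standardize(row):
    """Standardization: trim and lowercase emails, map country aliases."""
    out = dict(row)
    if out["email"] is not None:
        out["email"] = out["email"].strip().lower()
    key = out["country"].strip().lower()
    out["country"] = COUNTRY_ALIASES.get(key, out["country"])
    return out


# Validation rules: name -> predicate that returns True when the row passes.
RULES = {
    "email_present_and_valid": lambda r: r["email"] is not None
    and bool(EMAIL_RE.match(r["email"])),
    "age_in_range": lambda r: 0 <= r["age"] <= 120,
}


def validate(row):
    """Validation: return the names of all rules the row fails."""
    return [name for name, check in RULES.items() if not check(row)]


def deduplicate(rows, key):
    """Deduplication: keep the first row seen for each value of `key`."""
    seen, unique = set(), []
    for r in rows:
        if r[key] in seen:
            continue
        seen.add(r[key])
        unique.append(r)
    return unique


standardized = [standardize(r) for r in records]
failures = {r["id"]: validate(r) for r in standardized if validate(r)}
valid = [r for r in standardized if not validate(r)]
golden = deduplicate(valid, "email")
```

Note the ordering: standardization runs before deduplication so that `Ana@Example.com ` and `ana@example.com` compare as equal. In this sketch, records 2 and 4 land in `failures` for a steward to review instead of being silently dropped.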

Key Data Governance Features within the Data Quality Layer

The Data Quality Layer is inextricably linked with Data Governance, providing the operational mechanisms to enforce governance policies:

- Data Policy Enforcement: It acts as the technical arm for Data Policy Enforcement, translating abstract governance policies into executable rules that automatically apply to data flows.

- Data Stewardship Workflows: For issues that require human intervention, the layer facilitates Data Stewardship Workflows, assigning tasks to data stewards for review, remediation, and approval, ensuring accountability.

- Audit Trails for Data Quality: Maintaining comprehensive Audit Trails for data quality is crucial for compliance and accountability. The layer records all changes, validations, and transformations, providing a clear history of data quality activities.
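One way to picture how these three governance features interact is the small sketch below. It is not taken from any particular product: the `QualityGovernance`, `AuditEvent`, and `StewardTask` names and the shape of the `policies` dictionary are hypothetical. Each policy is an executable check, every evaluation is appended to an audit log, and failures are queued as open tasks for a data steward.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEvent:
    record_id: int
    rule: str
    outcome: str  # "passed" or "failed"
    at: str       # ISO 8601 timestamp in UTC


@dataclass
class StewardTask:
    record_id: int
    rule: str
    status: str = "open"


@dataclass
class QualityGovernance:
    # Assumed shape: rule name -> callable(record) -> bool
    policies: dict
    audit_log: list = field(default_factory=list)
    steward_queue: list = field(default_factory=list)

    def enforce(self, record):
        """Apply every policy, log each outcome, queue failures for review."""
        all_passed = True
        for rule, check in self.policies.items():
            passed = bool(check(record))
            self.audit_log.append(
                AuditEvent(
                    record_id=record["id"],
                    rule=rule,
                    outcome="passed" if passed else "failed",
                    at=datetime.now(timezone.utc).isoformat(),
                )
            )
            if not passed:
                all_passed = False
                self.steward_queue.append(StewardTask(record["id"], rule))
        return all_passed


# Hypothetical policy: a record must carry an explicit consent flag.
governance = QualityGovernance(
    policies={"has_consent": lambda r: r.get("consent") is True}
)
governance.enforce({"id": 7, "consent": False})
```

A production system would persist the audit log and task queue rather than hold them in memory; the in-memory lists here only show the flow of information.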

Primary AI/ML Integration

Modern Data Quality Layers increasingly leverage Artificial Intelligence and Machine Learning to enhance their capabilities:

- Anomaly Detection: AI algorithms can perform advanced Anomaly Detection, identifying unusual data patterns or outliers that might indicate data quality issues, including issues that no predefined rule anticipates.

- Automated Data Profiling: ML can significantly enhance Automated Data Profiling by intelligently suggesting data types, formats, and potential relationships, reducing manual effort.

- Predictive Quality: Advanced analytics enable Predictive Quality, anticipating potential data quality issues before they manifest by analyzing trends and historical patterns.

- Automated Rule Generation: ML models can assist in Automated Rule Generation, learning from clean datasets and proposing new data quality rules.

- Data Pattern Recognition: AI excels at Data Pattern Recognition, identifying subtle inconsistencies or complex relationships within large datasets that would be difficult for humans or simple rules to detect.
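As a rough illustration of anomaly detection, the sketch below flags outliers with a modified z-score based on the median and the median absolute deviation (MAD). This is a simple statistical stand-in, not a machine learning model, and it is not claimed to be what any specific tool uses. The function name, the sample values, and the threshold of 3.5 (a commonly used default for this score) are assumptions.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return the indexes of values whose modified z-score exceeds threshold."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # All values sit on the median (or too few differ); nothing to score.
        return []
    # 0.6745 scales the MAD so the score is comparable to a standard z-score
    # for normally distributed data.
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]


# Hypothetical daily order totals with one suspicious spike at the end.
daily_order_totals = [102, 98, 101, 97, 103, 99, 100, 950]
suspicious = mad_outliers(daily_order_totals)
```

The median and MAD are used instead of the mean and standard deviation because a single extreme value inflates the latter and can hide itself. A learned model would replace this scoring function while the surrounding flow (score, compare to threshold, flag) stays the same.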

Challenges/Barriers to Adoption

Despite its undeniable benefits, implementing and sustaining an effective Data Quality Layer faces several hurdles:

- Technical Complexity and Integration: Integrating a sophisticated Data Quality Layer into existing diverse and often legacy data architectures can be technically challenging. Ensuring seamless data flow, synchronizing metadata, and managing transformations across various systems require significant architectural expertise.

- Defining and Evolving Quality Rules: Establishing comprehensive and accurate data quality rules, especially in large enterprises with complex data landscapes, is a continuous process. Rules need to evolve with changing business requirements, new data sources, and regulatory updates.

- Organizational Buy-in and Data Stewardship: Successful data quality initiatives require strong organizational commitment and a culture of data ownership. Securing funding, allocating dedicated resources for data stewardship, and fostering collaboration across departments can be significant barriers.

- Cost of Implementation and Maintenance: The investment in specialized Data Quality Management tools (e.g., Informatica Data Quality, Talend Data Quality, IBM InfoSphere QualityStage, SAS Data Quality) can be substantial. Beyond software licenses, there are costs associated with infrastructure, skilled personnel, and ongoing operational maintenance.

- Data Drift and Evolving Data Landscape: Data sources and their characteristics are constantly changing. Data Drift, where the statistical properties of incoming data (such as the distribution of a field’s values) change over time, can quickly render established data quality rules obsolete. The Data Quality Layer must be agile enough to adapt to new data types, formats, and sources.

- Measuring ROI: Quantifying the direct Return on Investment (ROI) from data quality initiatives can be difficult. While the benefits are clear, attributing specific financial gains directly to improved data quality often requires robust metrics and baseline comparisons.
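The data drift challenge above can be made measurable. One widely used option is the Population Stability Index (PSI), which compares how a field's values are distributed across bins in a baseline sample and in a current sample. The sketch below is a minimal version under stated assumptions: the function name, the bin edges, and the sample values are hypothetical, and the edges are assumed to be sorted in ascending order.

```python
import math


def psi(baseline, current, edges):
    """Population Stability Index of `current` against `baseline`.

    `edges` are ascending cut points; they define len(edges) + 1 bins.
    """
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # The bin index is the number of edges the value has reached.
            counts[sum(v >= e for e in edges)] += 1
        total = len(values)
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / total, 1e-6) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical samples of one numeric field.
last_month = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
this_month = [v + 5 for v in last_month]  # the whole distribution shifted up

drift_score = psi(last_month, this_month, edges=[4, 7])
```

A PSI of zero means the two distributions match across the chosen bins. A commonly cited rule of thumb treats values above roughly 0.25 as a significant shift, though the right cutoff depends on the field and the binning, so it is best treated as a starting point for review rather than a fixed rule.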

Business Value and ROI

The strategic investment in a robust Data Quality Layer yields substantial business value and a tangible return on investment across multiple facets of an organization:

- Improved Decision Making: Clean and accurate data provides leaders with reliable information, enabling them to make informed, confident decisions based on factual evidence rather than assumptions. This leads to better strategic planning, more effective resource allocation, and ultimately, superior business outcomes.

- Enhanced Customer Experience and Loyalty: Precise customer data enables truly personalized interactions, targeted marketing campaigns, and proactive customer service. By understanding customer needs accurately, businesses can foster stronger relationships, increase customer satisfaction, and build lasting loyalty.

- Regulatory Compliance and Risk Mitigation: Many industries face stringent data privacy and governance regulations (e.g., GDPR, HIPAA). A strong Data Quality Layer helps organizations meet these compliance requirements by ensuring data accuracy, completeness, and auditability, significantly reducing the risk of penalties, legal issues, and reputational damage.

- Operational Efficiency and Cost Savings: Poor data quality leads to costly errors, rework, and wasted resources. By automating data cleansing and validation, the Data Quality Layer streamlines operations, reduces manual effort, and minimizes the financial impact of data-related mistakes across all business processes.

- Foundation for AI/ML Initiatives: For organizations leveraging artificial intelligence and machine learning, Data Quality for AI is non-negotiable. High-quality, reliable data is the lifeblood of effective AI/ML models. A robust Data Quality Layer ensures that models are trained on accurate data, leading to more precise predictions, better model performance, and faster model deployment.

- Accelerated Innovation: With a trusted data foundation, organizations can confidently explore new opportunities, experiment with advanced analytics, and drive innovation without being hampered by unreliable data. This fosters agility and competitive advantage.

Comparative Insight: Data Quality Layer vs. Traditional Data Architectures

To fully appreciate the role of the Data Quality Layer, it’s essential to compare its function with traditional data architectures like Data Lakes and Data Warehouses. While often discussed in conjunction, their core purposes differ significantly.

Traditional Data Warehouses are designed for structured, clean, and transformed data, primarily for reporting and business intelligence. Data enters a Data Warehouse after a rigorous ETL (Extract, Transform, Load) process, where data quality checks are typically embedded within the ‘Transform’ stage. While these checks are vital, they often happen in batch, are highly schema-dependent, and can be rigid, making them less adaptable to diverse, semi-structured, or unstructured data sources.

Data Lakes, on the other hand, are built to store vast quantities of raw, unprocessed data in its native format, often from a multitude of sources including IoT, social media, and transactional systems. The principle is “store everything now, ask questions later.” While Data Lakes offer immense flexibility and scalability, the inherent rawness of the data presents a significant challenge: the “data swamp” phenomenon. Without proper governance and quality layers, a Data Lake can become a repository of unusable, untrustworthy information.

The Data Quality Layer is not a replacement for Data Warehouses or Data Lakes; rather, it is a crucial, complementary, and often independent layer that enhances their utility. It acts as an active, continuous processing layer that can sit before data enters a Data Warehouse (ensuring clean ingestion), within a Data Lake (curating zones of trusted data), or even across multiple data stores. Unlike the integrated, schema-bound transformation logic of a traditional ETL pipeline, a dedicated Data Quality Layer often employs a more agile, rule-driven, and sometimes AI-powered approach to identify and rectify quality issues irrespective of the data’s ultimate destination or structure. It provides more flexible and granular control over data integrity, allowing for immediate feedback loops and continuous improvement.
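The placement described above can be pictured as a gate that runs before any load step and routes each record either to a trusted zone or to quarantine, regardless of where the data is headed. The following is a minimal sketch; `quality_gate`, the check names, and the sample events are hypothetical. The checks use `dict.get` so that semi-structured records with missing keys fail a check instead of raising an error.

```python
def quality_gate(records, checks):
    """Split records into (trusted, quarantined) based on named checks."""
    trusted, quarantined = [], []
    for rec in records:
        failed = [name for name, check in checks.items() if not check(rec)]
        if failed:
            # Keep the record and the reasons so it can be fixed and replayed.
            quarantined.append({"record": rec, "failed_checks": failed})
        else:
            trusted.append(rec)
    return trusted, quarantined


# Hypothetical checks that tolerate records of differing shape.
checks = {
    "has_device_id": lambda r: bool(r.get("device_id")),
    "reading_is_number": lambda r: isinstance(r.get("reading"), (int, float)),
}

events = [
    {"device_id": "a1", "reading": 21.5},
    {"device_id": "", "reading": 19.0},
    {"device_id": "c3", "reading": "n/a", "extra": {"fw": "1.2"}},
]

trusted, quarantined = quality_gate(events, checks)
```

Only `trusted` would continue to the warehouse load or the curated lake zone. Because the gate depends on named checks rather than a fixed schema, the same function can sit in front of several destinations.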

Furthermore, the Data Quality Layer, especially when augmented by Data Observability Platforms (e.g., Monte Carlo, Soda), provides a level of proactive monitoring and alerting that is often missing from traditional architectures. While a Data Warehouse might surface an issue only after a report is generated, a modern Data Quality Layer with observability capabilities can detect anomalies and quality degradations in real time, preventing bad data from propagating downstream. It shifts the paradigm from reactive data cleaning to proactive data quality assurance, making it an indispensable component for any organization aiming for true data mastery.
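A simple form of this proactive monitoring is to compute a quality metric for every incoming batch and raise an alert when it drops below an agreed threshold. The sketch below uses completeness (the share of records with a non-null value for a field) as the metric. It does not use the API of any observability platform; the function names, the threshold, and the sample batch are assumptions.

```python
def completeness(batch, field):
    """Share of records in the batch where `field` is present and not None."""
    if not batch:
        return 0.0
    return sum(1 for r in batch if r.get(field) is not None) / len(batch)


def check_batch(batch, thresholds):
    """Return one alert message per field that falls below its threshold."""
    alerts = []
    for field, minimum in thresholds.items():
        score = completeness(batch, field)
        if score < minimum:
            alerts.append(
                f"{field}: completeness {score:.2f} below {minimum:.2f}"
            )
    return alerts


# Hypothetical batch in which half of the email values are missing.
batch = [{"email": "a@x.io"}, {"email": None}, {"email": "b@x.io"}, {}]
alerts = check_batch(batch, thresholds={"email": 0.9})
```

In practice the alert would be sent to a dashboard or a steward's queue, and the batch could be held back until someone reviews it. That hold is what keeps bad data from propagating downstream.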

World2Data Verdict: Embracing Data Quality as a Strategic Asset

At World2Data, we assert that a well-implemented Data Quality Layer is no longer a technical nice-to-have but a strategic imperative. In a landscape increasingly defined by data-driven competition and complex regulatory demands, the ability to trust your data is the ultimate differentiator. Organizations must move beyond viewing data quality as a one-time project or a mere compliance exercise. Instead, it must be integrated as a continuous, intelligent, and automated process, fundamental to every stage of the data lifecycle.

The future success of enterprises hinges on their capacity to not just collect vast amounts of data, but to effectively transform it into a reliable, actionable asset. Investing in a sophisticated and AI-augmented Data Quality Layer will future-proof businesses, enabling them to harness the full potential of advanced analytics, machine learning, and digital transformation initiatives. We recommend that organizations prioritize the establishment of a dynamic and adaptable Data Quality Layer, focusing on robust governance frameworks, continuous monitoring, and the strategic integration of AI/ML technologies to unlock unparalleled insights and foster enduring trust in their data ecosystem.