Data SLAs: Setting Expectations for Data Availability and Quality

Organizations that depend on data for critical operations and strategic decisions need assurance that the data is reliable. A Data SLA, or Service Level Agreement for data, provides that assurance: it is a mutually agreed framework that spells out explicit commitments between data providers and data consumers regarding the accessibility, integrity, and performance of important information assets. A well-defined Data SLA builds trust, supports reliability, and establishes accountability across the enterprise data ecosystem.

Introduction: The Imperative of Data SLAs in Modern Enterprises

The rapid growth of data, coupled with its increasing criticality to business functions ranging from operational analytics to advanced AI/ML models, has brought data governance to the forefront of organizational priorities. Enterprises are realizing that simply having data isn’t enough; they need data that is consistently available, accurate, and fit for purpose. This is where a Data SLA becomes valuable. It shifts data management from a reactive, issue-driven approach to a proactive, expectation-setting framework in which data assets are measured against predefined benchmarks. Our objective here at World2Data.com is to provide an in-depth analysis of Data SLAs, covering their components, benefits, and challenges, and their impact on data-reliant organizations. By understanding and implementing robust Data SLAs, businesses can extract more strategic value from their data, reduce risk, and build justified confidence in their analytical outputs.

Core Breakdown: Architecture, Components, and Strategic Imperatives of Data SLAs

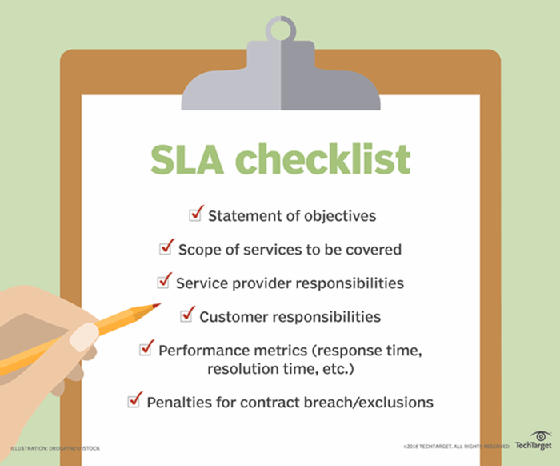

A robust Data SLA framework is an extension of a comprehensive Data Governance Framework. It provides the actionable metrics and accountability mechanisms that turn governance principles into measurable outcomes. At its core, a Data SLA is an agreement, whether a formal contract or an informal understanding, that details the services a data provider will deliver to a data consumer and specifies metrics for data availability, quality, freshness, and security.

Establishing Clear Data Availability Benchmarks

A foundational component of any effective Data SLA is the explicit specification of data availability. This dimension addresses how reliably and quickly data can be accessed and used by its consumers. Key metrics and considerations include:

- Uptime Guarantees: Often expressed as a percentage (e.g., 99.9% availability, which allows roughly 43 minutes of downtime in a 30-day month), this commits the provider to keeping data sources, pipelines, and access mechanisms operational and accessible within defined periods. It reflects the reliability of the underlying infrastructure and data delivery mechanisms.

- Latency for Data Retrieval and Delivery: Crucial for applications requiring real-time or near real-time data, this metric defines the acceptable delay between a data request and its fulfillment, or between data generation and its availability in a consumer-facing system. High-performance analytics, fraud detection, and operational dashboards heavily rely on stringent latency SLAs.

- Data Freshness: This defines how recently the data has been updated and processed. For some applications, data updated hourly is acceptable, while for others, minutes or even seconds might be required. A Data SLA will specify the maximum permissible age of data for different use cases.

- Data Recovery Objectives (RTO & RPO): In the event of data loss or system failure, the Recovery Time Objective (RTO) specifies the maximum acceptable downtime before data systems are restored. The Recovery Point Objective (RPO) defines the maximum acceptable amount of data loss measured in time (e.g., last 15 minutes of data). These are critical for disaster recovery planning and ensuring business continuity.
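
The availability metrics above reduce to simple arithmetic on timestamps and durations. The following is a minimal sketch, not a reference implementation; the function names (`allowed_downtime`, `is_fresh`, `meets_rpo`, `meets_rto`) and the sample thresholds are assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone


def allowed_downtime(availability_pct: float, period: timedelta) -> timedelta:
    """Downtime budget implied by an uptime guarantee over a period."""
    return period * (1 - availability_pct / 100)


def is_fresh(last_updated: datetime, max_age: timedelta, now: datetime) -> bool:
    """True if the data is no older than the maximum age the SLA permits."""
    return now - last_updated <= max_age


def meets_rpo(last_backup: datetime, failure_time: datetime, rpo: timedelta) -> bool:
    """True if the data written since the last backup fits within the RPO."""
    return failure_time - last_backup <= rpo


def meets_rto(failure_time: datetime, restored_time: datetime, rto: timedelta) -> bool:
    """True if service was restored within the RTO."""
    return restored_time - failure_time <= rto


if __name__ == "__main__":
    # Hypothetical SLA values, chosen only to exercise the functions.
    now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    print(allowed_downtime(99.9, timedelta(days=30)))
    print(is_fresh(now - timedelta(minutes=30), timedelta(hours=1), now))
    print(meets_rpo(now - timedelta(minutes=10), now, timedelta(minutes=15)))
    print(meets_rto(now, now + timedelta(hours=5), timedelta(hours=4)))
```

Passing `now` explicitly, rather than reading the clock inside the function, keeps the checks deterministic and easy to test.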

Defining Measurable Data Quality Standards

Beyond mere availability, a truly robust Data SLA also sets concrete expectations for data quality – the fitness of data for its intended purpose. Poor data quality can lead to flawed insights, erroneous decisions, and significant operational costs. This involves outlining specific, quantifiable metrics for various dimensions of quality:

- Accuracy: The degree to which data correctly reflects the real-world object or event it represents. This can be measured by acceptable error rates or deviation from a golden record.

- Completeness: The extent to which all required data is present. This is often measured by the percentage of non-null values for critical fields or the presence of all expected records in a dataset.

- Consistency: The degree to which data values are consistent across different systems or over time. Inconsistencies can arise from data integration issues or conflicting definitions.

- Timeliness: Similar to freshness but more focused on the data being available *when needed* for a specific process or decision. For example, a monthly report may need its input data by the 3rd business day.

- Validity: The extent to which data conforms to defined syntax and value ranges (e.g., a phone number having a specific format, a date falling within a valid range).

Organizations must define acceptable error rates, expected data freshness, and thresholds for missing values. Such a proactive approach ensures that data, once available, is also truly fit for its intended purpose, preventing downstream issues and building trust.
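
Two of the quality dimensions above, completeness and validity, can be expressed as ratios that are then compared against an agreed threshold. The sketch below is illustrative only: the record layout, the `phone` field, and the phone-number pattern are assumptions, and a real SLA would define its own fields and formats.

```python
import re

# Assumed format for illustration: optional "+" followed by 10 to 15 digits.
PHONE_PATTERN = re.compile(r"^\+?\d{10,15}$")


def _is_missing(value) -> bool:
    return value is None or value == ""


def completeness(records: list[dict], field: str) -> float:
    """Share of records in which `field` is present and non-empty."""
    if not records:
        return 0.0  # an empty dataset is treated as not complete
    filled = sum(1 for r in records if not _is_missing(r.get(field)))
    return filled / len(records)


def validity(records: list[dict], field: str, is_valid) -> float:
    """Share of non-missing values of `field` that pass `is_valid`."""
    values = [r[field] for r in records if not _is_missing(r.get(field))]
    if not values:
        return 0.0
    return sum(1 for v in values if is_valid(v)) / len(values)


def valid_phone(value: str) -> bool:
    return PHONE_PATTERN.fullmatch(value) is not None


# Hypothetical sample data.
records = [
    {"id": 1, "phone": "+14155550100"},
    {"id": 2, "phone": "555-0100"},   # present but malformed
    {"id": 3, "phone": None},         # missing
    {"id": 4, "phone": "4155550101"},
]

if __name__ == "__main__":
    print(f"completeness: {completeness(records, 'phone'):.2f}")
    print(f"validity:     {validity(records, 'phone', valid_phone):.2f}")
```

Measuring validity only over non-missing values keeps the two metrics independent, so a missing value is counted once (against completeness) rather than twice.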

Leveraging Core Technology for Data SLA Enforcement

Implementing and continuously monitoring Data SLAs relies heavily on tooling. The following technologies form the core architecture that underpins the commitments made within an SLA:

- Data Observability Platforms: These platforms provide end-to-end visibility into the health, lineage, and performance of data pipelines and datasets. They automatically detect anomalies, track changes in data schema and volume, and provide insights into potential SLA breaches.

- Data Quality Monitoring Tools: Dedicated tools are essential for continuous profiling, cleansing, and validation of data against predefined quality rules. They can automatically identify deviations from quality standards and trigger alerts.

- Metadata Management: Robust metadata management systems provide the context for data assets, including definitions, lineage, ownership, and usage. This metadata is crucial for understanding what an SLA covers and for tracing the impact of issues.

- Automated Monitoring and Alerting: The ability to automatically track SLA metrics and generate alerts when thresholds are breached is paramount. This shifts from manual checks to proactive, system-driven enforcement.
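
Automated threshold checking can be sketched in a few lines. This is a simplified illustration rather than the behavior of any particular observability product: `SlaRule`, `evaluate`, the metric names, and the thresholds are all hypothetical, and the `alert` callback stands in for whatever notification channel an organization uses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SlaRule:
    metric: str
    threshold: float
    higher_is_better: bool = True  # False for metrics such as latency or data age

    def is_breached(self, value: float) -> bool:
        if self.higher_is_better:
            return value < self.threshold
        return value > self.threshold


def evaluate(
    rules: list[SlaRule],
    observed: dict[str, float],
    alert: Callable[[str], None],
) -> list[str]:
    """Check observed metrics against rules; alert on and return breached metrics."""
    breached = []
    for rule in rules:
        value = observed.get(rule.metric)
        if value is None:
            # A metric that stopped reporting is treated as a breach.
            alert(f"{rule.metric}: no measurement received")
            breached.append(rule.metric)
        elif rule.is_breached(value):
            alert(f"{rule.metric}: observed {value}, threshold {rule.threshold}")
            breached.append(rule.metric)
    return breached


if __name__ == "__main__":
    # Hypothetical rules and measurements.
    rules = [
        SlaRule("completeness", 0.99),
        SlaRule("freshness_minutes", 60, higher_is_better=False),
    ]
    observed = {"completeness": 0.97, "freshness_minutes": 45}
    evaluate(rules, observed, alert=print)
```

Treating a missing measurement as a breach is a deliberate choice here: a silent monitor is often the first sign that a pipeline has stopped running.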

AI/ML Integration for Advanced Data SLA Management

The integration of artificial intelligence and machine learning significantly enhances the capabilities of Data SLA management, moving beyond reactive monitoring to predictive and prescriptive actions. This includes:

- Predictive Monitoring for SLA Breaches: AI algorithms can analyze historical data patterns to predict potential SLA breaches before they occur. For example, they can forecast pipeline failures or data quality degradation based on past trends or early indicators.

- Anomaly Detection for Data Quality: ML models are highly effective at identifying subtle anomalies in data patterns that might indicate a quality issue, even if it doesn’t immediately violate a hard-coded rule. This catches novel errors that rule-based systems might miss.

- Automated Root Cause Analysis (RCA): When an SLA breach occurs, AI can assist in rapidly pinpointing the root cause by analyzing logs, metadata, and historical performance data, drastically reducing the time to resolution.
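
To make the anomaly-detection idea concrete, the sketch below flags an unusual daily row count using a z-score against recent history. This is a simple statistical stand-in, not a trained ML model, and it is not taken from any specific tool; the function name `is_anomalous`, the threshold of 3.0, and the sample counts are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag `latest` if it lies more than `z_threshold` standard deviations
    from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        return latest != mu  # history is constant, so any change is unusual
    return abs(latest - mu) / sigma > z_threshold


if __name__ == "__main__":
    # Hypothetical daily row counts for one table.
    daily_rows = [1000, 1020, 980, 1010, 990, 1005, 995]
    print(is_anomalous(daily_rows, 1012))  # within the normal range
    print(is_anomalous(daily_rows, 400))   # sharp drop in volume
```

No hard-coded rule such as "row count must exceed 500" is needed; the expected range is derived from the data itself, which is the property that lets learned detectors catch errors that fixed rules miss.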

Challenges and Barriers to Data SLA Adoption

While the benefits of Data SLAs are clear, their successful implementation is not without challenges. Organizations often encounter several barriers to comprehensive adoption:

- Defining Clear and Measurable Metrics: The subjective nature of “quality” and “availability” can make it difficult to define objective, quantifiable metrics that satisfy all stakeholders. Translating business needs into technical thresholds requires significant collaboration.

- Stakeholder Alignment and Buy-in: Securing consensus from diverse data producers, consumers, and business units on what constitutes acceptable data availability and quality can be a lengthy and complex process, often leading to negotiation and compromise.

- Technical Complexity of Monitoring: Implementing robust monitoring across disparate data sources, heterogeneous data pipelines, and varying consumption patterns is technically challenging. This often requires integrating multiple tools and building custom solutions.

- Dynamic Data Environments: Modern data ecosystems are constantly evolving with new sources, schemas, and processing requirements. Keeping Data SLAs relevant and updated in such dynamic environments is a continuous effort. Data drift, where the statistical properties of production data change over time, can subtly degrade data quality and make existing SLAs less effective without continuous recalibration.

- Integration with Legacy Systems: Older data systems often lack the APIs or observability features needed to effectively monitor and report on SLA metrics, posing significant integration hurdles.

- Cost and Resource Allocation: Investing in the necessary tools, expertise, and processes for effective Data SLA management can be substantial, requiring a clear demonstration of ROI to secure executive sponsorship.

- Lack of a Single Source of Truth: Without consolidated metadata management and data lineage capabilities, understanding the impact of data issues and assigning accountability for SLA breaches can be incredibly difficult.

Business Value and ROI of Robust Data SLAs

Despite the challenges, the strategic advantages and return on investment (ROI) derived from implementing comprehensive Data SLAs are profound:

- Increased Trust and Confidence in Data: By providing clear assurances about data quality and availability, Data SLAs foster greater confidence in data-driven insights across all business units. This translates to faster, more confident decision-making.

- Enhanced Accountability: Data SLAs clearly define roles and responsibilities between data providers and consumers, streamlining operations and reducing potential disputes or “finger-pointing” when issues arise. This clarity improves operational efficiency.

- Improved Data Governance and Compliance: Robust Data SLA frameworks strengthen overall data governance by instilling discipline and measurable standards. They also support compliance efforts for regulatory requirements (e.g., GDPR, CCPA, HIPAA) by providing documented, measurable evidence of data integrity and availability.

- Reduced Operational Costs and Risks: Proactive monitoring and remediation enabled by Data SLAs minimize the impact of data issues, reducing the time and resources spent on reactive problem-solving. This lowers operational risks associated with poor data quality or unavailable data.

- Faster Model Deployment and Better AI Outcomes: For organizations leveraging AI and Machine Learning, high-quality, available data is the lifeblood of successful models. Data SLAs help ensure MLOps pipelines are fed with reliable data, which supports faster model deployment cycles, earlier detection of the data issues that contribute to model drift, and more accurate AI outcomes.

- Strategic Resource Allocation: By identifying critical data assets and their associated SLA tiers, organizations can strategically allocate resources (e.g., monitoring, engineering effort) where they will have the most impact.

Comparative Insight: Data Management With vs. Without Data SLAs

To truly appreciate the value of Data SLAs, it’s insightful to compare a data environment that actively manages data through these agreements against one that operates without such explicit commitments.

In a traditional data lake or data warehouse model operating without formal Data SLAs, data consumption often becomes a reactive, high-risk endeavor. Data providers push data, and consumers pull it, but without explicit agreements, there’s a significant knowledge gap. When a data quality issue or availability problem arises, it often leads to:

- Blame Games and Disputes: Without clear definitions of ownership and responsibility, data consumers might blame data engineers for poor quality, while engineers might attribute issues to source systems or unclear requirements. This leads to unproductive cycles of investigation and blame.

- Erosion of Trust: Repeated instances of unavailable or unreliable data erode consumer trust, leading to skepticism about data-driven insights and a reluctance to rely on central data platforms. Business units may resort to shadow IT or manual data workarounds.

- Delayed Decision-Making: When data validity is constantly in question, decision-makers hesitate, waiting for manual validation or reconciliation, which slows down critical business processes.

- Inefficient Resource Allocation: Data teams spend disproportionate amounts of time on reactive firefighting – troubleshooting unexpected data issues, performing manual data cleansing, or restoring lost data, rather than on strategic initiatives.

- Compliance Risks: Without formal quality and availability standards, organizations run higher risks of failing compliance audits related to data integrity and retention, potentially incurring significant penalties.

Conversely, an environment fortified by well-implemented Data SLAs transforms data management into a proactive, transparent, and collaborative process. It elevates data from a mere commodity to a managed asset with guaranteed performance characteristics:

- Proactive Problem Solving: With defined metrics and automated monitoring (often utilizing Data Observability Platforms and Data Quality Monitoring Tools), potential issues are detected and addressed before they impact downstream consumers. AI-driven Predictive Monitoring for SLA Breaches further enhances this proactive stance.

- Shared Understanding and Accountability: SLAs foster a common language and understanding between data producers and consumers. Everyone knows what to expect and who is responsible for what, leading to smoother collaboration and quicker resolution of genuine issues.

- Increased Data Reliability: Consistent adherence to availability and quality metrics means consumers can trust the data they receive, leading to greater adoption of data-driven strategies and more confident decision-making.

- Optimized Resource Utilization: Data teams can focus on innovation and value creation, spending less time on reactive fixes and more on developing new data products and improving existing ones.

- Stronger Governance and Compliance: Data SLAs are a tangible manifestation of effective Data Governance, providing auditable proof of data quality and availability efforts, which is crucial for regulatory compliance.

World2Data Verdict: Embracing Data SLAs for Future-Proof Data Strategies

The era of merely hoping for good data is over. As organizations increasingly depend on data for their competitive edge, the need to define, monitor, and enforce Data SLAs has become hard to ignore. For World2Data.com, our analysis indicates that organizations should embed Data SLAs as a cornerstone of their data strategy. This involves not just signing agreements, but also investing in the core technology that enforces them: Data Observability, Data Quality Monitoring Tools, and Metadata Management. It also means applying AI/ML for Predictive Monitoring and Automated Root Cause Analysis to move from reactive firefighting to proactive data health management. We recommend that organizations establish cross-functional data councils to define pragmatic, measurable Data SLAs, regularly review their performance, and foster a culture of data accountability. Embracing a robust Data SLA framework is not just good practice; it’s a critical differentiator that helps data assets consistently deliver on their promise and makes the data ecosystem more resilient to the complexities of the modern data landscape.