Data Sourcing Strategies: How Enterprises Acquire Quality Data for Competitive Advantage

Platform Category: Data Integration and Governance Platforms

Core Technology/Architecture: API-driven Connectivity, ETL/ELT Frameworks, Data Streaming Architectures

Key Data Governance Feature: Data Quality Rules Engine, Metadata Management, Data Lineage

Primary AI/ML Integration: AI-powered Data Profiling, Automated Data Classification, Predictive Data Quality Monitoring

Main Competitors/Alternatives: Internal Enterprise Systems, Commercial Data Vendors, Public Data Sources, Data Marketplaces

Executing data sourcing strategies well is a prerequisite for any enterprise pursuing sustainable growth and innovation. This article examines how organizations acquire high-quality, relevant data and the methodologies and technologies that support that work. From internal operational systems to the wider external data ecosystem, data sourcing underpins every data-driven initiative, from business intelligence to advanced analytics. Optimizing these processes is both a technical necessity and a strategic one, because the quality of sourced data directly shapes decision-making and competitive position.

Understanding the Core of Data Sourcing for Strategic Advantage

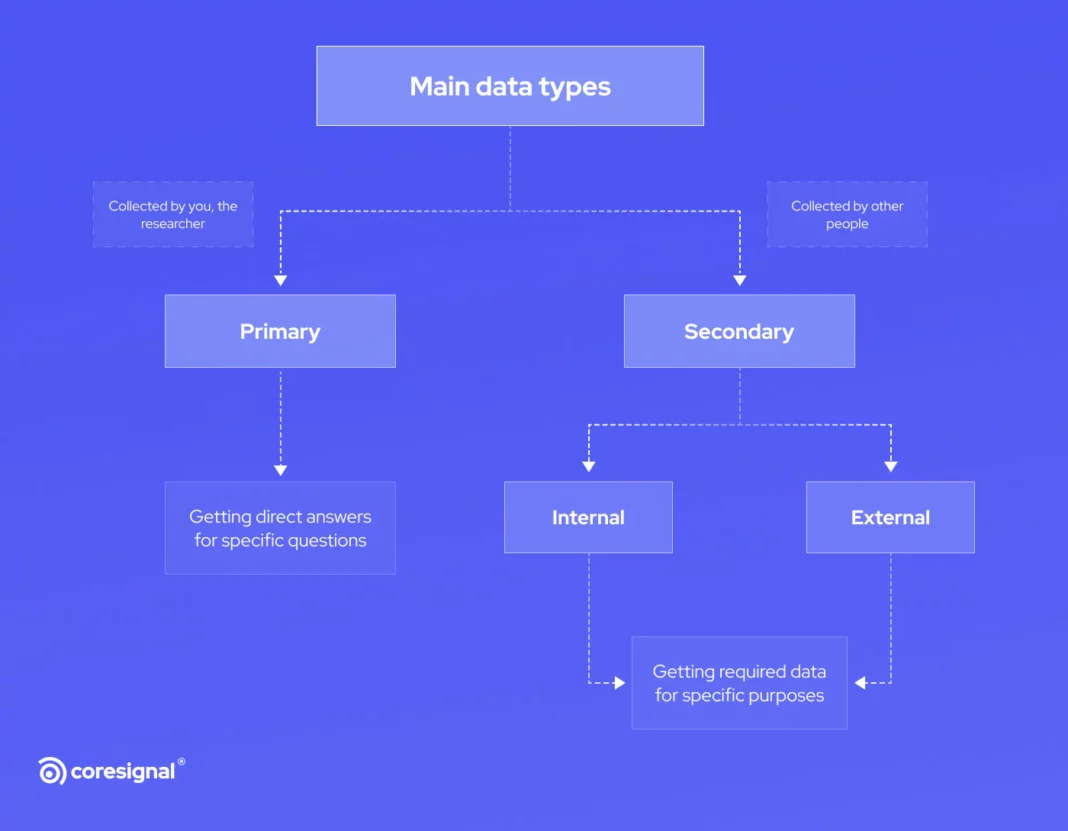

The foundation of any robust analytics strategy, business intelligence initiative, or machine learning model lies in the quality and relevance of its underlying data. Data sourcing is the comprehensive process of identifying, locating, acquiring, and ingesting data from various internal and external sources into an organization’s analytical environment. It encompasses everything from establishing direct database connections and API integrations to sophisticated web scraping techniques and partnerships with commercial data vendors. The objective is not merely to collect data, but to secure high-quality, timely, and compliant data that directly supports an enterprise’s strategic objectives and operational needs.

Because accurate and relevant information feeds everything from market analysis to customer behavior prediction, the strategies an enterprise uses for data sourcing directly affect business outcomes and competitive advantage. The sections below examine the approaches enterprises adopt to secure reliable data assets while limiting risk and maximizing the potential for useful insights.

Core Breakdown: Architecting Effective Data Sourcing Pathways

Effective data sourcing is a multi-layered discipline, requiring a strategic blend of technical infrastructure, operational processes, and a clear understanding of data needs. It begins with identifying the information gaps an organization needs to fill and then meticulously planning the acquisition process.

Leveraging Internal Data Assets: The Foundation of Operational Insight

Enterprises often begin their data acquisition journey by tapping into internal resources, which represent a goldmine of proprietary information. This includes vast amounts of transactional data from Customer Relationship Management (CRM) and Enterprise Resource Planning (ERP) systems, providing insight into customer interactions, sales patterns, and operational efficiency. These systems typically generate structured data that is relatively easy to integrate via direct database connections, ETL (Extract, Transform, Load) processes, or real-time API integrations.

Furthermore, website analytics platforms (e.g., Google Analytics, Adobe Analytics) offer granular detail on user behavior, navigation paths, conversion rates, and content engagement, crucial elements of comprehensive data sourcing for digital strategies. Data from IoT devices and sensors provides real-time operational intelligence, critical for manufacturing, logistics, and smart infrastructure. This often involves processing high-volume, streaming data, requiring robust data ingestion pipelines capable of handling diverse formats and velocities. Internal logs from applications, servers, and network devices also serve as rich sources for security monitoring, performance optimization, and root cause analysis.

The technical architecture for internal data sourcing typically involves:

- Database Connectors: Direct connections to relational (SQL) databases such as Oracle and PostgreSQL, and to NoSQL databases such as MongoDB and Cassandra.

- API Integrations: Utilizing RESTful or SOAP APIs provided by internal applications for programmatic data extraction.

- ETL/ELT Frameworks: Tools like Apache NiFi, Talend, Informatica, or cloud-native services (AWS Glue, Azure Data Factory, Google Cloud Dataflow) to move and transform data from operational systems into data warehouses or data lakes.

- Data Streaming Architectures: Platforms like Apache Kafka, Amazon Kinesis, or Google Cloud Pub/Sub for real-time ingestion of event data from IoT devices, web clicks, and application logs.

- Data Virtualization: Technologies that provide a unified, virtual view of disparate internal data sources without physical movement, enabling quicker access for analytics.
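The connector and ETL items above can be illustrated with a minimal sketch. It is not a production pipeline: it uses Python's built-in `sqlite3` module with two in-memory databases standing in for an operational system and a warehouse, and the table names (`orders`, `fact_orders`), column names, and sample rows are all hypothetical.

```python
import sqlite3


def extract(source: sqlite3.Connection) -> list[tuple]:
    """Extract: pull raw rows from the (hypothetical) operational table."""
    return source.execute(
        "SELECT order_id, customer_email, amount_cents FROM orders"
    ).fetchall()


def transform(rows: list[tuple]) -> list[tuple]:
    """Transform: drop incomplete rows, normalize emails, convert cents to units."""
    cleaned = []
    for order_id, email, amount_cents in rows:
        if email is None or amount_cents is None:
            continue  # skip rows that cannot be used downstream
        cleaned.append((order_id, email.strip().lower(), amount_cents / 100))
    return cleaned


def load(target: sqlite3.Connection, rows: list[tuple]) -> int:
    """Load: write cleaned rows into the (hypothetical) warehouse table."""
    target.execute(
        "CREATE TABLE IF NOT EXISTS fact_orders ("
        "order_id INTEGER PRIMARY KEY, customer_email TEXT, amount REAL)"
    )
    target.executemany("INSERT OR REPLACE INTO fact_orders VALUES (?, ?, ?)", rows)
    target.commit()
    return len(rows)


def run_pipeline() -> list[tuple]:
    # In-memory stand-in for an operational system, seeded with made-up rows.
    source = sqlite3.connect(":memory:")
    source.execute(
        "CREATE TABLE orders (order_id INTEGER, customer_email TEXT, amount_cents INTEGER)"
    )
    source.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(1, " Ana@Example.com ", 1999), (2, None, 500), (3, "bo@example.com", 250)],
    )
    # In-memory stand-in for the analytical store.
    target = sqlite3.connect(":memory:")
    load(target, transform(extract(source)))
    return target.execute(
        "SELECT order_id, customer_email, amount FROM fact_orders ORDER BY order_id"
    ).fetchall()


if __name__ == "__main__":
    for row in run_pipeline():
        print(row)
```

Keeping extract, transform, and load as separate functions means the same transform step could sit behind a different connector (an API client or a stream consumer) without changes, which is the main design point of the frameworks listed above.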

Exploring External Data Opportunities: Expanding the Horizon of Intelligence

Beyond internal systems, external data sourcing opens up new dimensions, offering competitive intelligence, market trends, demographic insights, and broader contextual information. This category can be broken down into several key areas:

- Public Datasets: Often available from government agencies (e.g., census data, economic indicators), research institutions, and open data initiatives. These datasets are typically free or low-cost but require significant cleansing and integration efforts.

- Commercial Data Providers: Companies specializing in curated, high-quality information, ranging from demographic profiles and financial market data to consumer behavior segments and geospatial data. Acquisition often involves licensing agreements and proprietary APIs or bulk file transfers.

- Data Marketplaces: Platforms (e.g., AWS Data Exchange, Snowflake Data Marketplace) that facilitate buying and selling of data products, simplifying discovery and acquisition of third-party data.

- Web Scraping and Social Media Monitoring: Web scraping, carried out in line with site terms of service and applicable law, can capture public sentiment, competitor activities, pricing intelligence, and emerging trends from publicly accessible websites. Social media monitoring tools analyze platforms like X (formerly Twitter), Facebook, and LinkedIn for brand perception, trending topics, and customer feedback. Because this data is largely unstructured, extraction and analysis typically rely on natural language processing (NLP) and machine learning techniques.

- Partnership Data: Data exchanged with business partners, suppliers, or customers, often via secure file transfer (e.g., SFTP) or dedicated APIs, to optimize supply chains, co-marketing efforts, or shared service delivery.
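The cleansing effort that public datasets typically require can be sketched as follows. This is an illustrative example only: the CSV content, column names, and the set of "missing value" markers are made up, and a real dataset would need rules derived from its own documentation.

```python
import csv
import io

# Made-up sample resembling a messy public CSV export:
# padded headers, thousands separators, and inconsistent missing-value markers.
RAW_CSV = """Region , Population ,Median Income
North,"1,250,000",54000
South,n/a,48000
 East ,"830,500",
"""

# Assumed markers for missing values; adjust per dataset.
MISSING = {"", "n/a", "na", "-"}


def to_number(text: str):
    """Convert a numeric string with optional thousands separators, or return None."""
    text = text.strip().replace(",", "")
    if text.lower() in MISSING:
        return None
    return float(text)


def clean_public_csv(raw: str) -> list[dict]:
    """Normalize headers and coerce numeric columns for the sample layout above."""
    reader = csv.reader(io.StringIO(raw))
    header = [h.strip().lower().replace(" ", "_") for h in next(reader)]
    records = []
    for row in reader:
        if not row:
            continue  # ignore blank lines
        record = dict(zip(header, row))
        records.append(
            {
                "region": record["region"].strip(),
                "population": to_number(record["population"]),
                "median_income": to_number(record["median_income"]),
            }
        )
    return records


if __name__ == "__main__":
    for rec in clean_public_csv(RAW_CSV):
        print(rec)
```

Missing values are kept as `None` rather than dropped or zero-filled, so downstream consumers can decide how to handle gaps explicitly.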

Challenges and Barriers to Effective Data Sourcing Adoption

Despite its critical importance, implementing robust data sourcing strategies is fraught with challenges. Enterprises frequently encounter:

- Data Quality Issues: Often the most pervasive problem, encompassing inaccuracies, inconsistencies, incompleteness, and outdated information. Poor data quality can invalidate analytical models and lead to flawed business decisions.

- Integration Complexity: Connecting disparate internal and external systems, each with unique data formats, protocols, and APIs, requires significant engineering effort and can create brittle data pipelines.

- Data Privacy and Compliance: Navigating stringent regulations like GDPR, CCPA, and industry-specific mandates (e.g., HIPAA) for data collection, storage, and usage is a major hurdle. Ensuring consent, anonymization, and secure handling is paramount.

- Cost of Acquisition: Commercial datasets can be expensive, and the infrastructure, tools, and personnel required for effective data sourcing (especially for real-time or big data volumes) represent substantial investments.

- Data Silos: Internal organizational structures often lead to data being trapped in departmental silos, making it difficult to achieve a holistic view and integrated analytics.

- Technical Debt in Legacy Systems: Older systems may lack modern APIs or robust data export capabilities, complicating data extraction and integration.

- Skill Gap: A shortage of data engineers, data scientists, and data governance experts capable of designing, implementing, and managing complex data sourcing pipelines.
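As one concrete illustration of the secure-handling concern in the privacy item above, the sketch below replaces direct identifiers with keyed hashes before data leaves the ingestion layer. It rests on several assumptions: the field names and key are hypothetical, the key would in practice come from a secrets manager, and keyed hashing is pseudonymization rather than anonymization, so whether it is sufficient for a given regulation is a question for legal and compliance review.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Return a stable keyed hash (HMAC-SHA256) of a normalized identifier."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()


def mask_record(record: dict, pii_fields: set, secret_key: bytes) -> dict:
    """Copy a record, replacing the listed PII fields with pseudonymous tokens."""
    return {
        key: pseudonymize(value, secret_key)
        if key in pii_fields and value is not None
        else value
        for key, value in record.items()
    }


if __name__ == "__main__":
    # Hypothetical key for demonstration only; never hard-code real keys.
    demo_key = b"demo-only-key"
    row = {"email": "Ana@Example.com", "country": "PT", "phone": None}
    print(mask_record(row, {"email", "phone"}, demo_key))
```

Because the same input and key always yield the same token, records from different sources can still be joined on the masked field without exposing the underlying identifier.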

Business Value and ROI of Strategic Data Sourcing

Overcoming these challenges yields significant returns, as strategic data sourcing directly contributes to an enterprise’s bottom line and competitive standing:

- Improved Decision-Making: Access to comprehensive, high-quality data empowers leaders with deeper insights, enabling more informed and proactive strategic decisions.

- Enhanced Competitive Advantage: Enterprises that can acquire and leverage unique or superior datasets gain an edge in market understanding, product development, and customer engagement.

- Operational Efficiency: Real-time data from internal systems helps optimize processes, reduce waste, predict maintenance needs, and streamline supply chains.

- Customer Insights and Personalization: Combining internal behavioral data with external demographic and lifestyle data allows for hyper-personalized marketing campaigns, product recommendations, and customer service.

- New Product and Service Development: Identifying market gaps and emerging trends through external data sourcing can spark innovation and lead to the creation of new revenue streams.

- Risk Mitigation: Better data can improve fraud detection, strengthen cybersecurity, and enhance compliance monitoring, reducing financial and reputational risks.

Comparative Insight: Evolving from Ad-Hoc to Strategic Data Sourcing

Traditionally, data sourcing often occurred in a reactive, ad-hoc manner. Departments or individual projects would acquire data as needed, leading to fragmented datasets, redundant efforts, and significant data quality issues. This approach might involve manual data exports, simple CSV file transfers, or point-to-point integrations that were difficult to scale or govern.

In contrast, modern enterprises are shifting towards a strategic, platform-centric approach to data sourcing. Instead of disparate efforts, this involves building a centralized data acquisition strategy underpinned by robust data integration and governance platforms. This paradigm shift offers several advantages:

- Unified Data Pipelines: Modern platforms (e.g., data integration and governance platforms, cloud data warehouses, data lakes) offer connectors and tools to ingest data from a multitude of sources into a centralized repository. This replaces fragmented, manual processes with automated, scalable pipelines.

- Enhanced Data Governance: Strategic data sourcing integrates data governance from the outset. Metadata management, data lineage tracking, and automated data quality rules engines are built into the ingestion process, ensuring data accuracy, compliance, and discoverability across the organization. This contrasts sharply with traditional methods where governance was often an afterthought or manually enforced.

- Scalability and Flexibility: Cloud-native data platforms provide elastic scalability, allowing enterprises to ingest and process vast volumes and varieties of data without being limited by on-premise infrastructure. This flexibility is crucial for handling the increasing velocity and volume of both internal and external data streams.

- Reduced Redundancy and Cost: By centralizing data acquisition and making data assets discoverable, organizations can reduce redundant data purchases or duplicate efforts in data extraction and transformation.

- Real-time Capabilities: Modern architectures, often leveraging data streaming technologies, enable near real-time data sourcing and processing, which is critical for applications like fraud detection, personalized customer experiences, and operational monitoring. Traditional batch-oriented sourcing often resulted in delayed insights.

- Self-Service and Democratization: With a well-governed and accessible data platform, business users and analysts can leverage self-service tools to discover and access approved datasets, reducing reliance on IT and accelerating time to insight.

While traditional data lakes and data warehouses have historically served as destinations for sourced data, the evolution lies in the *process* of getting data into these platforms. Strategic data sourcing ensures that data arrives not just in bulk, but with metadata, quality assurances, and lineage, making it immediately valuable for downstream analytics and AI applications. It’s the difference between collecting raw materials haphazardly and establishing a sophisticated, quality-controlled supply chain for information.
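A minimal sketch of what "arriving with metadata, quality assurances, and lineage" could look like at the point of ingestion is shown below. The rule names, the `_lineage` field, and the record layout are hypothetical; commercial rules engines and catalogs expose far richer models than this.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

Rule = Callable[[dict], bool]

# Hypothetical quality rules; each returns True when the record passes.
RULES: dict[str, Rule] = {
    "customer_id_present": lambda r: bool(r.get("customer_id")),
    "amount_non_negative": lambda r: isinstance(r.get("amount"), (int, float))
    and r["amount"] >= 0,
}


@dataclass
class IngestResult:
    accepted: list[dict] = field(default_factory=list)
    rejected: list[dict] = field(default_factory=list)


def ingest(records: list[dict], source_name: str, rules: dict[str, Rule] = RULES) -> IngestResult:
    """Validate each record and attach lineage metadata before it lands."""
    ingested_at = datetime.now(timezone.utc).isoformat()
    result = IngestResult()
    for record in records:
        failed = [name for name, rule in rules.items() if not rule(record)]
        # Build a new dict so the caller's input records are left untouched.
        enriched = {
            **record,
            "_lineage": {
                "source": source_name,
                "ingested_at": ingested_at,
                "failed_rules": failed,
            },
        }
        (result.rejected if failed else result.accepted).append(enriched)
    return result


if __name__ == "__main__":
    batch = [
        {"customer_id": "c1", "amount": 10.0},
        {"customer_id": "", "amount": 5},
        {"customer_id": "c3", "amount": -1},
    ]
    outcome = ingest(batch, source_name="crm_export")
    print(len(outcome.accepted), "accepted;", len(outcome.rejected), "rejected")
```

Rejected records are kept alongside the names of the rules they failed rather than silently discarded, so data owners can trace quality problems back to a specific source.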

World2Data Verdict: Prioritizing Intelligent Data Sourcing for Future Readiness

The future success of any enterprise will be inextricably linked to its prowess in data sourcing. World2Data.com asserts that organizations must move beyond reactive data acquisition to adopt a proactive, intelligent, and platform-driven approach. This involves a dual focus: investing in advanced data integration and governance platforms that leverage AI for automated profiling and quality monitoring, and cultivating a data-literate culture that understands the strategic value of quality data. Enterprises should prioritize the establishment of clear data ownership, robust metadata management, and continuous validation processes at the point of ingestion to ensure every piece of data acquired is a valuable asset, not a liability. The ultimate recommendation is to view data sourcing not just as a technical task, but as a core strategic capability, continuously optimized to fuel innovation and maintain a leading position in the rapidly evolving digital economy.