Modern Data Stack: Unlocking Next-Gen Analytics and AI Capabilities

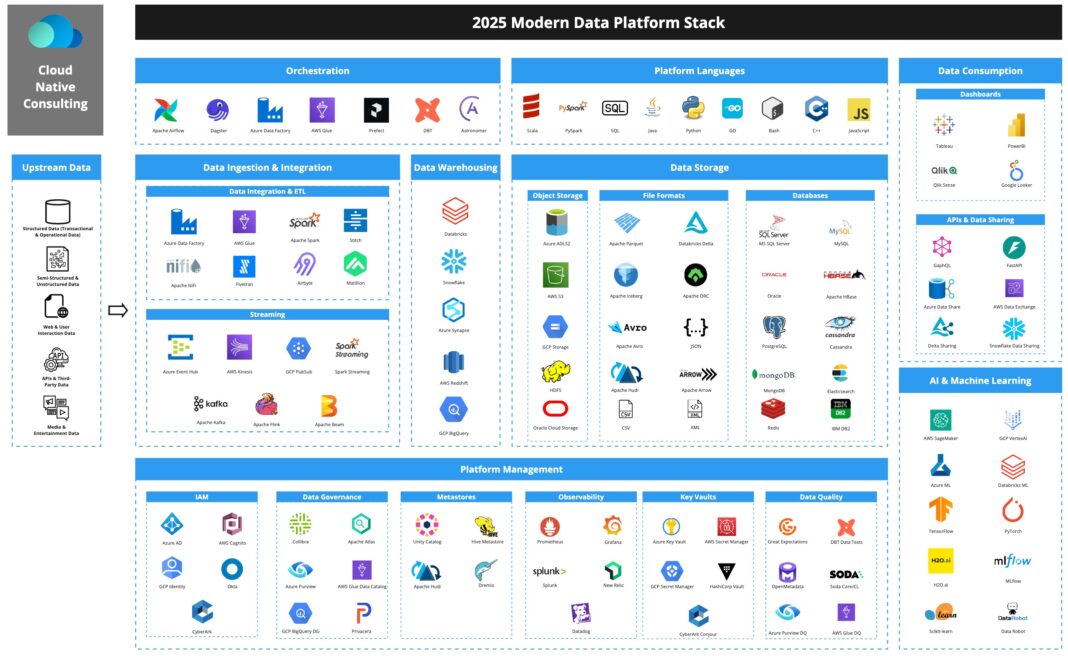

The Modern Data Stack (MDS) is a collection of specialized, cloud-native tools for managing, processing, and deriving insights from data. It encompasses Cloud Data Warehouses/Lakes, ELT/Data Ingestion, Data Transformation, Business Intelligence, Data Observability, Data Catalogs, and Reverse ETL solutions, assembled to give organizations more agility and analytical power than a single monolithic platform.

Its core architecture is predominantly cloud-native, serverless, and SaaS-based. It favors an ELT-first (Extract, Load, Transform) approach within a highly composable framework, and often uses a Data Lakehouse pattern to unify structured and unstructured data.

Key data governance features within the Modern Data Stack include comprehensive Data Catalogs for discovery and metadata management, robust Role-Based Access Control (RBAC) across data assets, automated Data Quality monitoring, and sophisticated compliance management capabilities.

For AI/ML integration, the MDS provides an integrated data foundation for machine learning models, with connectors and APIs for major cloud ML platforms (e.g., Amazon SageMaker, Google Vertex AI). Cloud data warehouses often include built-in ML functions, and the stack supports MLOps workflows that ease model development and deployment.

The primary competitors and alternatives to the Modern Data Stack include traditional on-premises data warehouse systems (e.g., Teradata, Oracle Exadata), legacy ETL tools (e.g., on-premises deployments of Informatica PowerCenter), monolithic enterprise data platforms, and integrated data lakehouse platforms (e.g., Databricks Lakehouse Platform) that aim to consolidate many MDS functions into a single vendor solution.

The Modern Data Stack is changing how organizations use information, moving beyond the limits of traditional systems toward real-time processing, elastic scalability, and advanced analytics. Understanding what a well-built Modern Data Stack can do is important for staying competitive. The shift lets businesses make data-driven decisions faster and gives their artificial intelligence and machine learning efforts a sounder data foundation.

Introduction: The Imperative of a Modern Data Stack in the Analytics Era

In an increasingly data-intensive global economy, organizations are awash in information from disparate sources, ranging from customer interactions and operational logs to IoT sensors and third-party feeds. The ability to effectively capture, process, analyze, and act upon this data is no longer a luxury but a fundamental requirement for survival and growth. Traditional data architectures, often characterized by monolithic systems and rigid batch processing, frequently buckle under the weight of modern data volumes, velocity, and variety. This is where the Modern Data Stack emerges as a revolutionary paradigm. It represents a fundamental shift towards agile, scalable, and cloud-native solutions designed to power next-generation analytics and drive the sophisticated demands of artificial intelligence and machine learning.

This article will delve deep into the components, architecture, benefits, and challenges of the Modern Data Stack. We will explore how this collection of specialized tools addresses the shortcomings of legacy systems, fostering a culture of data democratization and innovation. By dissecting its core elements, understanding its business value, and comparing it to traditional approaches, World2Data aims to provide a comprehensive overview for businesses looking to embark on or optimize their journey towards a truly data-driven future.

Core Breakdown: Dissecting the Architecture of the Modern Data Stack

The Modern Data Stack is not a single product but rather an ecosystem of best-of-breed, interconnected tools, each specializing in a particular phase of the data lifecycle. This composable architecture, typically built on cloud infrastructure, allows organizations to pick and choose components that best fit their specific needs, fostering flexibility and scalability. At its heart, the MDS embodies a philosophy centered on an ELT (Extract, Load, Transform) approach, where raw data is first loaded into a powerful cloud data warehouse or data lake and then transformed within that environment, leveraging its immense processing capabilities.
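
The ELT flow described above can be illustrated with a minimal sketch. It assumes an in-memory SQLite database as a stand-in for a cloud data warehouse; the table names (`raw_orders`, `customer_revenue`) and the sample rows are hypothetical and chosen only for illustration. Raw data is loaded untouched first, and the transformation then runs as SQL inside the database.

```python
import sqlite3

# Hypothetical raw records as they might arrive from a source system.
RAW_ORDERS = [
    ("o-1", "alice", "2024-01-05", "19.99"),
    ("o-2", "bob", "2024-01-05", "5.00"),
    ("o-3", "alice", "2024-01-06", "12.50"),
]


def extract_and_load(conn, rows):
    """E + L: land the rows untouched in a raw table (every column as text)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_orders "
        "(order_id TEXT, customer TEXT, order_date TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", rows)


def transform(conn):
    """T: build a curated table with SQL, inside the 'warehouse' itself."""
    conn.execute("DROP TABLE IF EXISTS customer_revenue")
    conn.execute(
        """
        CREATE TABLE customer_revenue AS
        SELECT customer,
               COUNT(*) AS order_count,
               ROUND(SUM(CAST(amount AS REAL)), 2) AS total_revenue
        FROM raw_orders
        GROUP BY customer
        """
    )


def run_elt():
    """Run load then transform and return the curated rows."""
    conn = sqlite3.connect(":memory:")  # stand-in for a cloud warehouse
    extract_and_load(conn, RAW_ORDERS)
    transform(conn)
    rows = conn.execute(
        "SELECT customer, order_count, total_revenue "
        "FROM customer_revenue ORDER BY customer"
    ).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    for row in run_elt():
        print(row)
```

Because `raw_orders` is kept as loaded, the `transform` step can be rewritten and re-run later without going back to the source system, which is the main practical argument for loading before transforming.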

Key Components and Technologies

- Cloud Data Warehouses/Lakes: These form the central repository for the Modern Data Stack. Tools like Snowflake, Google BigQuery, Amazon Redshift, and Databricks (with its Lakehouse platform) offer petabyte-scale storage and compute, managed or serverless operation, and SQL-first interfaces. They serve as the single source of truth, consolidating data from various operational systems, and are optimized for analytical workloads. Compared with traditional data warehouses, cloud-native platforms scale elastically and are typically billed by usage, which matters for the large datasets behind advanced analytics and AI.

- ELT/Data Ingestion Tools: Unlike legacy ETL tools that transform data before loading, modern ELT tools (e.g., Fivetran, Stitch, Airbyte) focus on efficiently extracting data from diverse sources—databases, SaaS applications, APIs, event streams—and loading it directly into the cloud data warehouse/lake in its raw form. This approach preserves all raw data for future analysis and defers transformation until necessary, making pipelines more robust and flexible.

- Data Transformation: Once data resides in the cloud warehouse/lake, tools like dbt (data build tool) enable data engineers and analysts to transform, clean, and model it using SQL. This “Transform” layer is critical for creating curated datasets, aggregate tables, and semantic layers optimized for downstream analytics and machine learning applications. It democratizes data modeling by empowering analytics engineers to build robust data pipelines, moving logic closer to the business users.

- Business Intelligence (BI) Tools: Solutions such as Tableau, Power BI, Looker, and Metabase connect directly to the transformed data in the cloud data warehouse to provide interactive dashboards, reports, and visualizations. These tools make complex data accessible and understandable to business users, enabling them to explore data and uncover insights without requiring deep technical expertise. Because they query the warehouse directly, BI tools see data as fresh and as complete as the upstream pipelines keep it.

- Data Observability: A relatively new but crucial component, data observability platforms (e.g., Monte Carlo, Soda) continuously monitor the health, quality, and reliability of data across the entire data pipeline. They detect anomalies, identify data drift, track schema changes, and alert teams to potential issues before they impact downstream analytics or machine learning models. This proactive approach to data quality is essential for maintaining trust in data assets and the integrity of the insights built on them.

- Data Catalogs: Essential for data governance and discovery, data catalogs (e.g., Alation, Collibra, Atlan) provide a searchable inventory of all data assets. They capture metadata, lineage, usage statistics, and ownership information, helping users find, understand, and trust data. A comprehensive Data Catalog fosters data democratization, improves collaboration, and supports compliance efforts by providing a single pane of glass for data asset management and access control.

- Reverse ETL: While traditional ETL moves data into a warehouse for analysis, Reverse ETL tools (e.g., Hightouch, Census) push cleaned, transformed, and often enriched data from the data warehouse back into operational SaaS applications (e.g., CRM, marketing automation, support platforms). This ensures that operational teams have access to the latest, most relevant customer insights directly within the tools they use daily, driving personalized customer experiences and automating workflows.
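
To make the data observability item above more concrete, here is a minimal sketch of two checks such a platform might run: a schema comparison and a row-count anomaly test. This is not how Monte Carlo or Soda are implemented; the function names, the three-standard-deviation threshold, and the sample values are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def schema_changes(expected, observed):
    """Compare two {column: type} mappings and report the differences."""
    shared = set(expected) & set(observed)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }


def volume_is_anomalous(history, today, threshold=3.0):
    """Flag today's row count if it lies more than `threshold` sample
    standard deviations away from the mean of past row counts."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today != mu  # any change from a perfectly flat history
    return abs(today - mu) / sigma > threshold


if __name__ == "__main__":
    # Hypothetical table metadata from yesterday and today.
    expected = {"id": "INTEGER", "email": "TEXT", "amount": "REAL"}
    observed = {"id": "INTEGER", "email": "TEXT", "amount": "TEXT",
                "coupon": "TEXT"}
    print(schema_changes(expected, observed))

    # Hypothetical daily row counts for the same table.
    daily_rows = [1000, 1020, 980, 1010, 990]
    print(volume_is_anomalous(daily_rows, today=400))
    print(volume_is_anomalous(daily_rows, today=1005))
```

A real deployment would read the schema and row counts from warehouse metadata and route the results to an alerting channel; the sketch only shows the comparison logic.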

Challenges and Barriers to Adoption

Despite its undeniable advantages, implementing and managing a Modern Data Stack is not without its challenges:

- Tool Proliferation and Integration Complexity: The best-of-breed nature means managing multiple vendors and ensuring seamless integration between diverse tools. Although the stack is composable, running many tools side by side adds operational overhead and requires specialized expertise to stitch everything together effectively.

- Cost Management: Cloud services are highly scalable, but costs can quickly escalate if not properly managed. Optimizing compute and storage usage, especially in complex ELT pipelines and large data warehouses, requires diligent monitoring and cost awareness.

- Data Governance and Security: With data spread across various cloud services and tools, maintaining consistent data governance, privacy, and security policies becomes complex. Ensuring compliance with regulations like GDPR or CCPA across the entire stack requires robust strategies and automated solutions.

- Talent Gap: The specialized skills required to design, implement, and maintain a Modern Data Stack—including expertise in cloud platforms, SQL, data modeling, MLOps, and specific vendor tools—are often in high demand and short supply.

- Data Drift and Quality Assurance: Even with data observability, ensuring consistent data quality and managing data drift (changes in data patterns over time that can degrade model performance) across dynamic pipelines remains a significant ongoing challenge.
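
Data drift, as described in the last item above, can be quantified in several ways. The sketch below uses the Population Stability Index (PSI), one common choice, to compare a baseline sample of a numeric feature with a current sample. The bin count, the `eps` floor for empty bins, and the sample data are assumptions for illustration; production monitoring would typically use more careful binning.

```python
import math


def population_stability_index(baseline, current, bins=4, eps=1e-6):
    """PSI between two non-empty numeric samples.

    Bins are equal-width over the baseline's range; values outside that
    range are clamped into the first or last bin. `eps` replaces empty
    bin shares so the logarithm stays defined.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


if __name__ == "__main__":
    baseline = list(range(100))           # hypothetical training-time values
    shifted = [v + 50 for v in baseline]  # same shape, shifted upward
    print(population_stability_index(baseline, baseline))  # identical: 0.0
    print(population_stability_index(baseline, shifted))   # clearly positive
```

PSI is zero when the binned distributions match and grows as they diverge. A commonly quoted rule of thumb treats values above roughly 0.25 as a significant shift, but any alerting threshold should be tuned to the feature and the model in question.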

Business Value and Return on Investment (ROI)

The strategic investment in a Modern Data Stack yields substantial business value and ROI:

- Faster Insights and Decision Making: By streamlining data pipelines and leveraging powerful cloud analytics, organizations can reduce the time from data ingestion to actionable insight, enabling quicker, more informed strategic decisions and operational improvements. This agility translates directly into competitive advantage.

- Enhanced Data Quality for AI: A well-architected Data Stack provides clean, reliable, and high-quality data, which is the lifeblood of effective AI and machine learning models. Better data quality leads to more accurate predictions, fewer model failures, and more trustworthy AI applications.

- Democratization of Data Access: The composable nature and user-friendly interfaces of MDS tools make data accessible to a broader range of users, from data scientists and analysts to business stakeholders. This fosters a data-aware culture and empowers departmental teams to drive their own analyses.

- Scalability and Flexibility: Cloud-native components offer elastic scalability, allowing businesses to handle fluctuating data volumes and analytical demands without upfront infrastructure investments. This flexibility supports rapid experimentation and adaptation to new business requirements.

- Reduced Operational Overhead (Long-term): While initial setup may require effort, the automation capabilities of ELT tools, serverless architectures, and SaaS solutions significantly reduce the manual effort required for data management, freeing up data teams to focus on higher-value analytical work.

- Robust Support for MLOps: The integrated data foundation facilitates seamless MLOps workflows, from feature engineering and model training to deployment, monitoring, and retraining. This accelerates the deployment of ML models into production and ensures their continuous performance.

Comparative Insight: Modern Data Stack vs. Traditional Data Architectures

To truly appreciate the transformative power of the Modern Data Stack, it’s essential to compare it against its predecessors: traditional on-premise data warehouses and monolithic enterprise data platforms. These legacy systems, while foundational for decades, were designed for a different era of data processing and business needs.

Traditional Data Warehouses & Legacy ETL

Historically, organizations relied on relational database management systems (RDBMS) configured as data warehouses. These systems, such as Teradata, Oracle Exadata, or Microsoft SQL Server, were built for structured data and rigid schema-on-write approaches. Data was typically ingested and transformed using traditional ETL (Extract, Transform, Load) tools like Informatica PowerCenter, which required significant upfront design and processing before data even reached the warehouse. This approach worked well for reporting on historical, aggregated data, but it presented several critical limitations:

- Scalability Bottlenecks: On-premise hardware had finite capacity, making it challenging and costly to scale compute and storage independently to meet growing data volumes or sudden spikes in analytical demand.

- High Upfront Investment: Significant capital expenditure was required for hardware, software licenses, and specialized personnel.

- Limited Data Variety: Primarily designed for structured, tabular data, these systems struggled with semi-structured (JSON, XML) and unstructured data (text, images, video), which are prevalent in today’s data landscape.

- Rigidity and Slow Development: Changes to schemas or transformation logic were often time-consuming and complex, leading to slow development cycles for new reports or analyses.

- Batch-Oriented Processing: Real-time analytics and continuous data ingestion were difficult to achieve, limiting operational responsiveness.

Monolithic Enterprise Data Platforms

Some enterprise solutions attempted to consolidate many data management functions into a single, often proprietary, platform. While offering a “one-stop shop,” these platforms frequently suffered from vendor lock-in, limited flexibility in adopting best-of-breed tools, and often struggled to keep pace with the rapid innovation seen in specialized cloud services. Their integrated nature could also lead to a single point of failure and make it harder to swap out underperforming components.

The Modern Data Stack Difference

The Modern Data Stack fundamentally diverges from these traditional models by embracing:

- Cloud-Native Architecture: The MDS builds on the elastic scalability, usage-based pricing, and managed services of cloud providers (AWS, Azure, GCP). This removes most of the work of managing underlying infrastructure.

- ELT-First Paradigm: Rather than transforming data before loading, ELT loads raw data directly into powerful cloud data warehouses/lakes. Transformation then occurs within the warehouse, harnessing its massive compute power. This allows for schema-on-read flexibility and preserves raw data for multiple analytical use cases.

- Composability and Best-of-Breed: Instead of a single vendor solution, the MDS advocates for combining specialized tools, each excelling in its niche. This creates a flexible, adaptable ecosystem where organizations can choose the best data ingestion, transformation, BI, data catalog, or reverse ETL tool for their specific needs, reducing the risk of vendor lock-in.

- SaaS and Serverless Models: Many MDS components are offered as SaaS, reducing operational burden, while serverless computing handles scaling automatically, minimizing idle costs and management overhead.

- Data Lakehouse Integration: The Data Lakehouse pattern, exemplified by platforms like Databricks, blurs the line between data lakes and data warehouses. It lets organizations manage structured and unstructured data in a single platform, with ACID transactions, schema enforcement, and other data warehouse features applied directly on data lake storage. This suits both traditional analytics and advanced machine learning workloads.

- Focus on Data Democratization and MLOps: The MDS is built to empower a wider range of users, from data engineers and analysts to business stakeholders and machine learning engineers. It provides the robust, high-quality data foundation and integrated toolchain necessary for seamless MLOps workflows, accelerating the journey from raw data to deployed AI models.
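
The schema-on-read idea mentioned under the ELT-first paradigm above can be sketched briefly. The example assumes raw events stored as JSON lines, with two hypothetical schemas (`ACTIVITY_SCHEMA`, `REVENUE_SCHEMA`) applied only when the data is read. All names and records are illustrative, not taken from any particular tool.

```python
import json

# Hypothetical raw events, stored exactly as received. The fields vary by row.
RAW_EVENTS = [
    '{"user": "alice", "event": "login", "ts": "2024-01-05T09:00:00"}',
    '{"user": "bob", "event": "purchase", "ts": "2024-01-05T09:05:00",'
    ' "amount": 19.99}',
    '{"user": "alice", "event": "purchase", "ts": "2024-01-05T09:07:00",'
    ' "amount": 5.0, "coupon": "NEW10"}',
]

# Each schema maps a field name to (cast function, default if missing).
ACTIVITY_SCHEMA = {"user": (str, None), "event": (str, None)}
REVENUE_SCHEMA = {
    "user": (str, None),
    "amount": (float, 0.0),
    "coupon": (str, None),
}


def read_with_schema(raw_lines, schema):
    """Project raw JSON lines onto a schema at read time."""
    rows = []
    for line in raw_lines:
        record = json.loads(line)
        row = {}
        for field, (cast, default) in schema.items():
            row[field] = cast(record[field]) if field in record else default
        rows.append(row)
    return rows


if __name__ == "__main__":
    for row in read_with_schema(RAW_EVENTS, ACTIVITY_SCHEMA):
        print(row)
    for row in read_with_schema(RAW_EVENTS, REVENUE_SCHEMA):
        print(row)
```

The same raw events serve two different analytical views without being reloaded. Under schema-on-write, the `coupon` field would have needed a table change before the third event could be stored at all.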

In essence, the Modern Data Stack represents an agile, scalable, and cost-efficient evolution of data architecture. It is well suited to real-time processing, diverse data types, and the analytical requirements of the AI era, which makes it a strong choice for next-gen analytics.

World2Data Verdict: The Indispensable Backbone for AI-Driven Enterprises

At World2Data, our analysis firmly positions the Modern Data Stack not merely as an architectural upgrade but as an indispensable strategic imperative for any organization aspiring to be data-driven and AI-first. The days of monolithic, rigid data systems are definitively over. The sheer volume, velocity, and variety of data generated today, coupled with the escalating demand for real-time insights and the complex data requirements of machine learning, necessitate an agile, composable, and cloud-native foundation.

Our recommendation is clear: enterprises must embrace and strategically invest in building and continually optimizing their Modern Data Stack. This involves not just adopting cloud data warehouses and ELT tools, but also integrating advanced capabilities like data observability for proactive quality management, comprehensive data catalogs for robust governance, and reverse ETL for actionable operational insights. The future success of AI initiatives hinges on the quality and accessibility of data, and the MDS provides the bedrock for this. Organizations that fail to modernize their data infrastructure risk being outmaneuvered by competitors who can leverage data faster, more intelligently, and at scale.

Looking ahead, we predict the Modern Data Stack will continue to evolve, with increasing consolidation of capabilities within platforms adopting the Data Lakehouse pattern, further enhancing the synergy between analytics and AI workloads. Automation, powered by AI itself, will streamline data pipeline management, making the stack even more efficient and accessible. The emphasis will shift from mere data storage to pervasive data activation, where insights are not just discovered but seamlessly integrated into every operational process. The future belongs to those who build a robust, intelligent, and adaptable Data Stack, unlocking unprecedented analytical depth and fueling the next generation of AI-powered innovation.