Forecast Modeling: How Companies Leverage Data to Predict the Future with Precision

Forecast Modeling is no longer a futuristic concept but an everyday practice for organizations trying to stay competitive in a fast-moving global marketplace. At its core, this analytical discipline lets businesses turn large volumes of raw, historical data into actionable, forward-looking insights. By systematically anticipating market shifts, consumer behavior, and operational demand, companies can adjust their strategies in advance, allocate resources more efficiently, and reduce their exposure to risk. Used well, this approach gives organizations a structured way to navigate uncertainty and find new avenues for growth and efficiency.

Unlocking Tomorrow’s Insights Today: An Introduction to Forecast Modeling

In an increasingly data-driven world, the ability to predict future events is paramount for sustained success. Forecast Modeling stands at the forefront of this capability, offering a systematic and rigorous method for peering into what lies ahead. It involves the application of various statistical algorithms, machine learning techniques, and mathematical models to historical data, with the objective of making informed predictions about future trends, outcomes, and behaviors.

The core objective of forecast modeling is to reduce uncertainty and enable proactive decision-making. Whether predicting sales volumes for the next quarter, anticipating supply chain disruptions, or estimating customer churn rates, robust forecast models provide the intelligence needed to move from reactive responses to strategic foresight. The accuracy and reliability of these predictions depend heavily on the quality and completeness of the data fed into the models. High-quality, relevant data is the foundation that allows algorithms to uncover the patterns and correlations (and, with careful study design, the causal relationships) that drive future outcomes, turning data from a mere record of the past into a useful predictor of the future.
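To make the link between data and accuracy concrete, the minimal sketch that follows holds out the most recent periods, forecasts them from the earlier history, and scores the result. It assumes a naive “repeat the last value” forecast as the baseline and uses two common error metrics, mean absolute error (MAE) and mean absolute percentage error (MAPE). The sales figures are invented for illustration, and the function names are hypothetical rather than taken from any particular library.

```python
from typing import List, Sequence


def naive_forecast(history: Sequence[float], horizon: int) -> List[float]:
    """Baseline forecast: repeat the last observed value for every future period."""
    if not history:
        raise ValueError("history must not be empty")
    return [history[-1]] * horizon


def mae(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute error, in the same units as the data."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("actual and predicted must be non-empty and equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def mape(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute percentage error; undefined if any actual value is zero."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("actual and predicted must be non-empty and equal length")
    return 100 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical monthly sales figures, for illustration only.
sales = [120, 132, 128, 141, 150, 147, 158, 163]
train, test = sales[:-3], sales[-3:]          # hold out the last three months
forecast = naive_forecast(train, len(test))   # [150, 150, 150]
print(f"MAE:  {mae(test, forecast):.2f}")
print(f"MAPE: {mape(test, forecast):.2f}%")
```

On this toy holdout the naive baseline misses by 8 units on average (MAE). Any more sophisticated model should beat a simple baseline like this on the same holdout before its forecasts are trusted.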

The Architecture and Mechanics of Modern Forecast Modeling

Modern Forecast Modeling is a complex interplay of sophisticated platforms, advanced technologies, and robust data governance. It’s not just a single tool but rather an integrated capability built into various enterprise systems, designed to extract maximum predictive value from organizational data assets.

Integrating Forecast Modeling Across Data Platforms

Effective forecast modeling thrives when integrated seamlessly into existing data ecosystems:

- Analytics Platforms: These provide user-friendly interfaces and visualization tools, making forecast insights accessible to business users. They often incorporate pre-built forecasting modules, allowing for rapid deployment and scenario analysis.

- Data Warehouses: Serving as the centralized repository for clean, structured historical data, data warehouses are fundamental to forecast modeling. They ensure data consistency and quality, which are critical inputs for accurate predictions.

- Machine Learning Platforms: For more advanced and custom forecasting needs, ML platforms (such as Databricks, Amazon SageMaker, or Google Cloud Vertex AI, the successor to Google AI Platform) offer the computational power and algorithmic flexibility to build, train, and deploy complex models, including deep learning architectures designed for time-series data.

Core Technologies and Architectural Foundations

The technological backbone of forecast modeling is diverse and powerful:

- Cloud-based Analytics: The elastic scalability and immense processing power of cloud environments (AWS, Azure, Google Cloud) are crucial for handling large datasets and complex model training. Cloud platforms also facilitate collaboration and offer a wide array of managed analytical services.

- Big Data Processing: Frameworks such as Apache Hadoop (distributed storage and batch processing) and Apache Spark (large-scale batch processing and, through Structured Streaming, near-real-time processing) are commonly used to handle the volume, velocity, and variety of data that comprehensive forecasting can require.

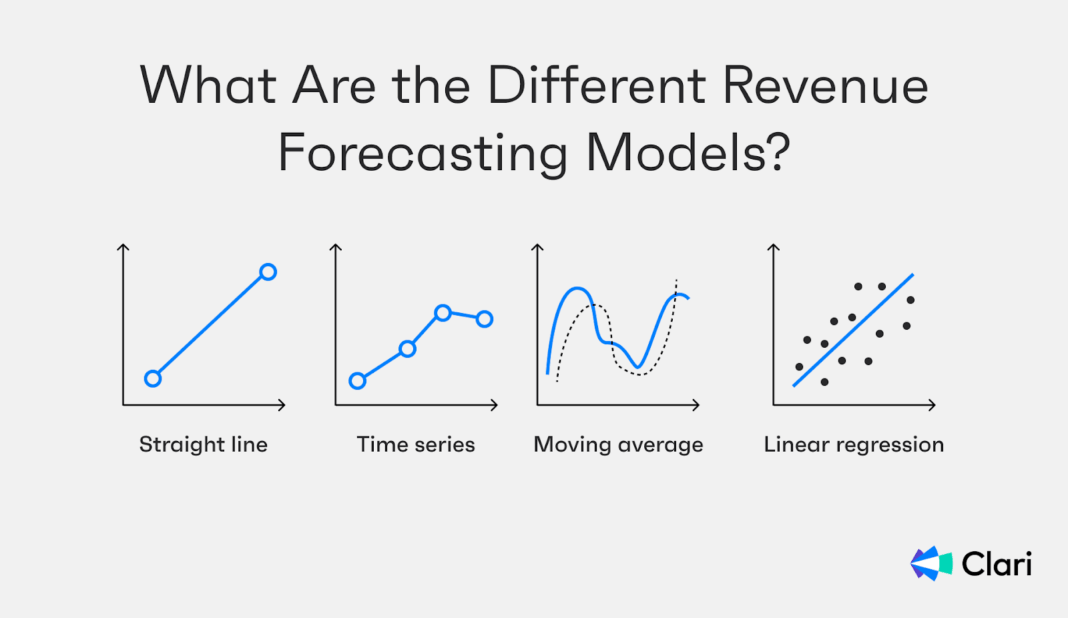

- Time-Series Analysis: This specialized field focuses on data points collected at successive points in time. Algorithms such as ARIMA (AutoRegressive Integrated Moving Average) and its seasonal variant SARIMA, Prophet (an open-source forecasting library developed by Facebook), and Exponential Smoothing are designed to capture the trends, seasonality, and cyclic patterns characteristic of time-series data.

- Statistical Modeling: Beyond time-series specific methods, classical statistical models like linear regression, logistic regression, and generalized additive models provide foundational predictive capabilities, especially when relationships are less dependent on temporal order.
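As an illustration of the Exponential Smoothing family mentioned above, here is a minimal sketch of simple exponential smoothing in plain Python. Each new level blends the latest observation with the previous level, weighted by a smoothing parameter alpha. This simplest variant tracks only the level of a series; extensions such as Holt's and Holt-Winters methods add trend and seasonality. The alpha value and demand figures are assumptions chosen for readability. In practice alpha is fitted to minimize forecast error, and libraries such as statsmodels provide fitted implementations.

```python
from typing import List, Sequence


def simple_exponential_smoothing(series: Sequence[float], alpha: float) -> List[float]:
    """Return the smoothed level after each observation.

    level_t = alpha * y_t + (1 - alpha) * level_{t-1}, initialised with y_0.
    The final level is the (flat) forecast for every future period.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    if not series:
        raise ValueError("series must not be empty")
    levels = [float(series[0])]
    for y in series[1:]:
        levels.append(alpha * y + (1 - alpha) * levels[-1])
    return levels


demand = [100, 104, 98, 110, 107]   # hypothetical weekly demand
levels = simple_exponential_smoothing(demand, alpha=0.5)
next_week = levels[-1]              # same value is forecast for all future weeks
```

With alpha = 0.5 the final level is 106, which serves as the flat forecast for every future week. A larger alpha reacts faster to recent changes; a smaller one smooths out more noise.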

Key Data Governance Features for Robust Forecasting

The reliability of forecast models depends heavily on the quality of their input data, which makes strong data governance essential:

- Data Quality Management: Ensuring data is accurate, complete, consistent, and timely is paramount. This involves data profiling, cleansing, validation rules, and establishing clear data ownership. “Garbage in, garbage out” remains a cardinal rule in forecasting.

- Metadata Management: Knowing where data came from and how it was transformed (data lineage), along with what each field means, is crucial for the interpretability and trustworthiness of forecast models. Metadata management makes it possible to trace how historical data becomes predictive insight.

- Access Control: Given that forecast models often rely on sensitive historical data (e.g., sales figures, customer demographics) and generate strategic future predictions, robust access control mechanisms are vital to ensure security and compliance.
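The validation rules described under Data Quality Management can start as simple, explicit checks run before every training job. The sketch that follows is a hypothetical example: it assumes daily sales rows shaped like `{"date": ..., "units": ...}` and flags missing values, duplicate dates, negative quantities, and gaps in the calendar. The function name and row format are illustrative assumptions; real pipelines would typically add domain-specific rules and route failures to the responsible data owner.

```python
from datetime import date
from typing import Any, Dict, List


def validate_sales_history(rows: List[Dict[str, Any]]) -> List[str]:
    """Return human-readable data quality issues; an empty list means all checks passed.

    Each row is assumed to look like {"date": datetime.date, "units": int or None}.
    """
    issues: List[str] = []
    seen = set()
    for i, row in enumerate(rows):
        day, units = row.get("date"), row.get("units")
        if day is None:                                  # completeness
            issues.append(f"row {i}: missing date")
            continue
        if day in seen:                                  # uniqueness
            issues.append(f"row {i}: duplicate date {day.isoformat()}")
        seen.add(day)
        if units is None:                                # completeness
            issues.append(f"row {i}: missing units")
        elif units < 0:                                  # validity
            issues.append(f"row {i}: negative units ({units})")
    if seen:                                             # calendar gaps
        expected_days = (max(seen) - min(seen)).days + 1
        if expected_days != len(seen):
            issues.append(f"{expected_days - len(seen)} day(s) missing "
                          f"between {min(seen)} and {max(seen)}")
    return issues


rows = [
    {"date": date(2024, 1, 1), "units": 12},
    {"date": date(2024, 1, 2), "units": None},
    {"date": date(2024, 1, 2), "units": 9},
    {"date": date(2024, 1, 4), "units": -3},
]
issues = validate_sales_history(rows)
for issue in issues:
    print(issue)
```

Returning a list of issues, rather than raising on the first problem, lets a pipeline report everything wrong with a batch at once and decide whether to block training or only warn.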

Primary AI/ML Integration: Elevating Predictive Power

The integration of Artificial Intelligence and Machine Learning has revolutionized forecast modeling:

- Predictive Analytics: This broad category encompasses all techniques that use historical data to predict future events. ML models amplify this by identifying non-linear patterns and complex interactions that traditional statistical methods might miss.

- Time-Series Forecasting Algorithms: While statistical methods like ARIMA are effective, ML algorithms such as gradient boosting machines (e.g., XGBoost, LightGBM) and deep learning architectures (e.g., recurrent neural networks such as LSTMs, as well as Transformers) can capture intricate temporal dependencies and handle multivariate time series. On large, complex datasets they often improve accuracy, although they do not always outperform well-tuned statistical baselines.

- Machine Learning Models: Beyond time-series, general ML models such as Random Forests, Support Vector Machines, and various regression models are employed to predict discrete events or continuous values based on a diverse set of input features.
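Gradient boosting machines and other general-purpose regressors do not model time directly, so a common approach is to reframe a series as a table of lagged values and train on that table. The sketch that follows shows the reframing and then, to stay dependency-free, fits the simplest possible model on it: a one-lag autoregression estimated by ordinary least squares. This is a stand-in for XGBoost or LightGBM, not a substitute for them; in practice the `X` and `y` produced here would be passed to a scikit-learn-style regressor's `fit(X, y)`. The series and function names are hypothetical.

```python
from typing import List, Sequence, Tuple


def make_lag_features(series: Sequence[float], n_lags: int) -> Tuple[List[List[float]], List[float]]:
    """Reframe a univariate series as a supervised-learning table.

    Each row of X holds the n_lags values preceding time t; y holds the value at t.
    """
    if n_lags < 1:
        raise ValueError("n_lags must be at least 1")
    X: List[List[float]] = []
    y: List[float] = []
    for t in range(n_lags, len(series)):
        X.append(list(series[t - n_lags:t]))
        y.append(series[t])
    return X, y


def fit_ar1(series: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y_t = a + b * y_{t-1} on one lag feature."""
    X, y = make_lag_features(series, n_lags=1)
    x = [row[0] for row in X]
    n = len(x)
    if n < 2:
        raise ValueError("need at least three observations")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("lagged values are constant; slope is undefined")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


series = [10, 12, 14, 16, 18]      # hypothetical, steadily rising series
a, b = fit_ar1(series)             # a = 2.0, b = 1.0 for this series
next_value = a + b * series[-1]    # one-step-ahead forecast: 20.0
```

Because the example series rises by exactly 2 each period, the fit recovers a = 2 and b = 1, and the one-step forecast is 20. With more lags and extra columns (calendar flags, prices, promotions), the same table becomes the input a gradient boosting model would learn from.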

Challenges and Barriers to Adoption

Despite its immense benefits, implementing and sustaining effective forecast modeling presents several challenges:

- Data Quality and Availability: Poor data quality, missing values, or insufficient historical data can severely hamper model accuracy and reliability. Data silos also make comprehensive forecasting difficult.

- Model Complexity and Interpretability: Advanced ML models, particularly deep learning, can be “black boxes,” making it difficult for stakeholders to understand how predictions are derived, leading to skepticism and reduced trust.

- Data Drift and Concept Drift: Real-world phenomena are dynamic. Models trained on past data can become stale and inaccurate when the distribution of the input data changes over time (data drift) or when the relationship between the inputs and the quantity being predicted changes (concept drift). Continuous monitoring and retraining are essential.

- MLOps Complexity: Moving a forecast model from development to production, monitoring its performance, scaling it, and retraining it requires a robust MLOps (Machine Learning Operations) framework, which can be challenging to build and maintain.

- Skill Gap: There’s a persistent shortage of data scientists, machine learning engineers, and domain experts who possess the necessary blend of statistical knowledge, programming skills, and business acumen to effectively design, implement, and interpret forecast models.
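Data drift, described in the challenges above, can be monitored feature by feature. One widely used measure is the Population Stability Index (PSI), which compares how a feature's values are distributed across fixed bins in the training data versus recent data. The sketch that follows is a minimal version; the bin edges and data are hypothetical, and the small `eps` floor that avoids taking the log of zero for empty bins is a common convention rather than a standard. PSI only detects shifts in the inputs; concept drift usually shows up instead as rising forecast error against actual outcomes.

```python
import bisect
import math
from typing import List, Sequence


def _bin_shares(values: Sequence[float], edges: Sequence[float], eps: float) -> List[float]:
    """Share of values in each bin; out-of-range values are assigned to the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        i = min(max(i, 0), len(counts) - 1)   # clamp into [0, n_bins - 1]
        counts[i] += 1
    # eps avoids log(0) and division by zero for empty bins
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(expected: Sequence[float], actual: Sequence[float],
                               edges: Sequence[float], eps: float = 1e-6) -> float:
    """PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i)."""
    e = _bin_shares(expected, edges, eps)
    a = _bin_shares(actual, edges, eps)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


edges = [0, 50, 100, 150, 200]                               # bins fixed at training time
train = [25] * 25 + [75] * 25 + [125] * 25 + [175] * 25     # evenly spread across bins
recent = [25] * 10 + [75] * 20 + [125] * 30 + [175] * 40    # shifted toward higher values
psi = population_stability_index(train, recent, edges)
print(f"PSI = {psi:.3f}")
```

For this example PSI is about 0.23. A commonly quoted rule of thumb treats values below 0.1 as stable, 0.1 to 0.25 as moderate drift, and above 0.25 as significant, but these thresholds are conventions that teams usually tune for their own data.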

Business Value and Return on Investment (ROI)

Investment in sophisticated forecast modeling capabilities can yield substantial ROI across various business functions:

- Faster Model Deployment: Streamlined pipelines and cloud resources enable quicker development and deployment of forecasting models, allowing businesses to react faster to market changes.

- Enhanced Decision Making: Accurate predictions empower proactive, data-driven decisions, reducing reliance on intuition and improving strategic outcomes across all departments.

- Optimized Resource Allocation: By predicting demand more accurately, companies can optimize inventory levels, workforce scheduling, manufacturing capacity, and capital expenditure, leading to cost savings and increased profitability.

- Improved Data Quality for AI: The iterative nature of forecasting often highlights data quality issues, prompting improvements that benefit all other AI/ML initiatives within the organization.

- Competitive Advantage: Organizations with superior forecasting capabilities can anticipate market trends, identify opportunities, and mitigate risks faster and more effectively than competitors, creating a significant edge.
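The Optimized Resource Allocation point can be illustrated with a textbook inventory calculation: a reorder point equal to forecast demand over the supplier lead time plus a safety-stock buffer sized from the forecast error. The sketch that follows assumes independent, normally distributed daily forecast errors and a fixed lead time, and all figures are hypothetical. It uses the Python standard library's `statistics.NormalDist` to obtain the z-value for a target service level.

```python
import math
from statistics import NormalDist


def reorder_point(daily_demand_forecast: float, daily_error_sd: float,
                  lead_time_days: float, service_level: float) -> float:
    """Expected demand over the lead time plus a safety-stock buffer.

    Safety stock = z * sigma * sqrt(L), where z is the standard normal quantile
    for the target service level. Assumes independent, normally distributed
    daily forecast errors and a fixed lead time.
    """
    if not 0 < service_level < 1:
        raise ValueError("service_level must be between 0 and 1")
    z = NormalDist().inv_cdf(service_level)
    safety_stock = z * daily_error_sd * math.sqrt(lead_time_days)
    return daily_demand_forecast * lead_time_days + safety_stock


# Hypothetical SKU: 40 units/day forecast, 9-day lead time, 95% cycle service level.
rop = reorder_point(40, daily_error_sd=8, lead_time_days=9, service_level=0.95)
rop_better = reorder_point(40, daily_error_sd=4, lead_time_days=9, service_level=0.95)
print(f"Reorder point with sd=8: {rop:.1f} units")
print(f"Reorder point with sd=4: {rop_better:.1f} units")
```

Because safety stock scales linearly with the standard deviation of the forecast error, halving that error in this example cuts the buffer from about 39.5 to about 19.7 units, which is one concrete way better forecasts translate into lower inventory cost.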

The diagram shown earlier, alongside the core technologies, provides a simplified look at how different inputs feed into various models to generate forecasts. Understanding this architecture is key to appreciating both the power and the complexity of modern forecast modeling, in which data flows from collection to prediction.

Forecast Modeling vs. Traditional Data Architectures: A Paradigm Shift

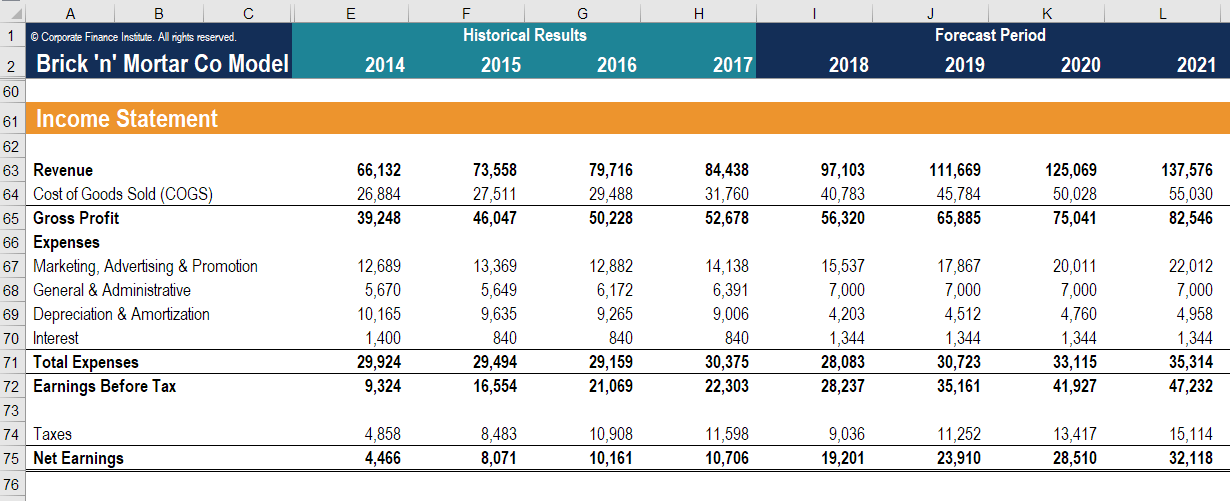

The advent of sophisticated Forecast Modeling marks a significant evolution beyond traditional data architectures such as data lakes and data warehouses. While these foundational systems remain indispensable, their primary strength lies in descriptive and diagnostic analytics: answering “what happened?” and “why did it happen?” They serve as robust repositories for historical data, enabling comprehensive reporting, business intelligence dashboards, and retrospective analysis.

Forecast modeling goes further by focusing on predictive analytics, addressing “what will happen?”, and by supplying the projections that prescriptive analytics relies on to answer “what should we do?”. It builds on the clean, accessible data in data warehouses and the raw, diverse datasets often stored in data lakes, but adds a crucial layer of intelligence: instead of merely aggregating past events, it processes this data through statistical and machine learning algorithms to project future states. This shift is not about replacing traditional architectures but about augmenting them, turning static historical records into forward-looking insights. Modern forecast platforms integrate directly with these sources, pulling in operational data, external market indicators, and real-time streams to produce timely, actionable predictions, so that businesses can move from understanding the past to actively shaping their future.

The financial statement analysis depicted here is a prime example of how historical financial data, typically residing in traditional data warehouses, is utilized as a fundamental input for robust financial forecast modeling. By analyzing past income, expenses, and asset movements, businesses can project future financial performance, cash flows, and overall economic health.
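One classic way to turn historical financial statements into a projection is the percent-of-sales method: estimate a revenue growth rate from history, grow revenue forward, and hold each expense line at its historical share of revenue. The minimal sketch that follows assumes a constant compound annual growth rate (CAGR) and fixed expense ratios; the figures, line items, and function names are hypothetical. Real financial models usually layer scenario assumptions, balance-sheet items, and cash-flow logic on top of this.

```python
from typing import Dict, List, Sequence


def cagr(values: Sequence[float]) -> float:
    """Compound annual growth rate between the first and last observation."""
    periods = len(values) - 1
    if periods < 1 or values[0] <= 0:
        raise ValueError("need at least two periods and a positive starting value")
    return (values[-1] / values[0]) ** (1 / periods) - 1


def project_financials(last_revenue: float, growth_rate: float,
                       expense_ratios: Dict[str, float], years: int) -> List[Dict[str, float]]:
    """Percent-of-sales projection: grow revenue at a constant rate and keep
    each expense line at a fixed share of revenue."""
    rows: List[Dict[str, float]] = []
    revenue = last_revenue
    for year in range(1, years + 1):
        revenue *= 1 + growth_rate
        expenses = {name: revenue * ratio for name, ratio in expense_ratios.items()}
        rows.append({"year": year, "revenue": revenue, **expenses,
                     "operating_income": revenue - sum(expenses.values())})
    return rows


# Hypothetical three years of historical revenue and assumed expense shares.
revenue_history = [800.0, 880.0, 968.0]
growth = cagr(revenue_history)                       # about 10% per year
ratios = {"cogs": 0.60, "operating_expenses": 0.25}  # assumed historical averages
plan = project_financials(revenue_history[-1], growth, ratios, years=3)
for row in plan:
    print(f"Year {row['year']}: revenue {row['revenue']:.1f}, "
          f"operating income {row['operating_income']:.1f}")
```

Here the history implies roughly 10% annual growth, so first-year revenue comes out near 1,064.8 and operating income near 159.7 (15% of revenue, since the assumed expense ratios sum to 85%).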

World2Data Verdict: Embracing a Predictive Future

The landscape of data-driven decision-making is irreversibly shifting towards foresight. Forecast Modeling is no longer a luxury but a strategic imperative for any organization aiming to thrive in an increasingly volatile and competitive world. From enterprise-grade solutions offered by key vendors such as Anaplan, SAP Analytics Cloud, IBM Cognos Analytics, and SAS, to the flexible, open-source power of Python and R and their rich libraries (scikit-learn, TensorFlow, Prophet), to specialized ML platforms such as Databricks, Amazon SageMaker, and Google Cloud Vertex AI (formerly AI Platform), the available tools and technologies are more capable than ever.

The World2Data verdict is clear: companies should invest strategically in mature forecast modeling capabilities. This requires a multi-pronged approach: establishing a robust data governance framework to ensure data quality and accessibility; adopting a hybrid technology strategy that combines commercial platforms for ease of use with specialized ML environments for custom, high-accuracy models; and, critically, fostering a culture of continuous learning and MLOps so that models remain relevant and effective. The future belongs to those who can not only collect and analyze data but also extrapolate from it skillfully, turning data into a compass that guides business strategy and operational efficiency. Organizations that master Forecast Modeling are well positioned to outmaneuver their peers and secure a sustainable competitive advantage through proactive, intelligent decision-making.