Unlocking Enterprise Potential: A Deep Dive into Modern Big Data Architecture

Platform Category: Data Lakehouse

Core Technology/Architecture: Decoupled Storage and Compute, Open Table Formats (e.g., Delta Lake, Apache Iceberg), Cloud-Native

Key Data Governance Feature: Unified Data Catalog, Fine-Grained Access Control

Primary AI/ML Integration: Direct data access for ML frameworks, Integration with major ML Clouds

Main Competitors/Alternatives: Data Mesh, Traditional Data Warehouse, Two-tier Lambda Architecture

A Modern Big Data Architecture for the enterprise is no longer an optional luxury but a strategic imperative, because it underpins the decisions and products that depend on data. The goal is to turn raw, disparate data into actionable intelligence at scale and with low latency, moving beyond traditional silos to a unified, governed data ecosystem.

Introduction: Why Modern Big Data Architecture Matters in the Enterprise

In today’s hyper-competitive and data-saturated landscape, enterprises are grappling with an ever-increasing volume, velocity, and variety of data. From customer interactions and sensor readings to financial transactions and operational logs, the sheer scale of information can be overwhelming without a sophisticated framework to manage it. This is precisely why a well-conceived Modern Big Data Architecture has become indispensable. These architectures empower organizations to navigate the complexities of today’s data explosion, moving from retrospective analysis to proactive, predictive insights. They are designed to be agile, scalable, and cost-efficient, providing the foundation for everything from advanced analytics to cutting-edge artificial intelligence and machine learning applications.

The objective of this deep dive is to explore the fundamental principles, core components, and strategic advantages of implementing a Modern Big Data Architecture. We will examine how these architectures scale with growing data volumes, support real-time insights that enable swift responses to market changes, and control cost through optimized resource utilization and cloud adoption. Ultimately, understanding and deploying a robust Modern Big Data Architecture is crucial for any enterprise aiming to maintain a competitive edge and foster a data-driven culture.

Core Breakdown: Dissecting the Modern Big Data Architecture

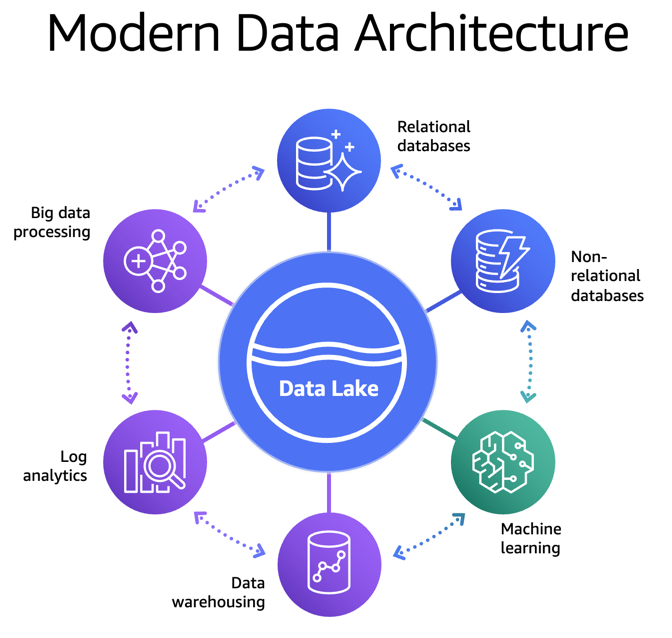

A well-designed Modern Big Data Architecture integrates several critical elements for comprehensive, end-to-end data handling, forming what is often referred to as a “Data Lakehouse” paradigm. This approach effectively marries the flexibility and low-cost storage of a data lake with the structure and management capabilities of a data warehouse.

The Data Lakehouse Foundation: Bridging Flexibility and Structure

At its heart, the Modern Big Data Architecture leverages a Data Lakehouse approach. This begins with a data lake acting as a central repository, capable of storing diverse data types (structured, semi-structured, and unstructured) in their native format. Unlike traditional data warehouses, which demand a predefined schema at write time, data lakes offer schema-on-read flexibility, making them ideal for raw, incoming data streams. However, pure data lakes often lack ACID (Atomicity, Consistency, Isolation, Durability) transactions and tend to suffer from data quality issues and poor governance. The “lakehouse” concept addresses these shortcomings by adding a transactional metadata layer on top of the data lake, typically via open table formats.
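To make schema-on-read concrete, here is a minimal sketch in plain Python. The raw records, the `READ_SCHEMA` mapping, and the `read_with_schema` helper are hypothetical names invented for illustration; real lakehouse engines apply schemas in their own ways. The point is only that the writer stores records as-is and the reader decides how to interpret them.

```python
import json

# Raw events stored exactly as they arrived; nothing was validated at write time.
RAW_EVENTS = [
    '{"user": "u1", "amount": "19.90", "ts": "2024-01-05"}',
    '{"user": "u2", "amount": 5}',
    '{"user": "u3", "amount": "n/a", "note": "free text"}',
]

# Schema chosen by the reader, not the writer: column name -> cast function.
READ_SCHEMA = {"user": str, "amount": float}


def read_with_schema(lines, schema):
    """Parse raw JSON lines and project them onto the reader's schema."""
    rows = []
    for line in lines:
        record = json.loads(line)
        row = {}
        for column, cast in schema.items():
            try:
                row[column] = cast(record[column])
            except (KeyError, TypeError, ValueError):
                row[column] = None  # missing or malformed values become nulls
        rows.append(row)
    return rows


rows = read_with_schema(RAW_EVENTS, READ_SCHEMA)
```

The third record shows the flip side of this flexibility: a malformed `amount` is only discovered at read time, which is the kind of data quality gap a transactional, schema-enforcing layer is meant to close.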

Decoupled Storage and Compute with Open Table Formats

A hallmark of modern architectures is the clear separation of storage and compute. This architectural decision brings immense benefits in terms of scalability, cost-efficiency, and flexibility. Data can reside in cost-effective object storage (e.g., S3, ADLS Gen2, GCS) without being tied to specific compute resources. This allows compute engines to scale independently based on workload demands, whether for batch processing, real-time analytics, or machine learning model training.
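The cost argument can be sketched as simple arithmetic. Every number below (node count, unit prices, usage hours) is a made-up input for illustration, not a vendor price, and the two functions are hypothetical helpers rather than any billing API.

```python
HOURS_PER_MONTH = 730  # average hours in a month


def coupled_monthly_cost(peak_nodes, node_hour_price):
    """Cluster sized for peak load and left running all month."""
    return peak_nodes * node_hour_price * HOURS_PER_MONTH


def decoupled_monthly_cost(stored_tb, tb_month_price, node_hours, node_hour_price):
    """Object storage billed by volume, compute billed by node-hours used."""
    return stored_tb * tb_month_price + node_hours * node_hour_price


# Illustrative inputs only: 20 nodes at 1.0 per node-hour, 100 TB at 20.0 per TB-month.
coupled = coupled_monthly_cost(peak_nodes=20, node_hour_price=1.0)

# Compute runs 8 hours a day on 22 working days.
decoupled_bursty = decoupled_monthly_cost(
    stored_tb=100, tb_month_price=20.0,
    node_hours=20 * 8 * 22, node_hour_price=1.0,
)

# Compute runs around the clock: the advantage disappears.
decoupled_always_on = decoupled_monthly_cost(
    stored_tb=100, tb_month_price=20.0,
    node_hours=20 * HOURS_PER_MONTH, node_hour_price=1.0,
)
```

Under these assumed inputs the bursty workload costs well under half of the always-on cluster, while the around-the-clock case costs more. The saving comes from idle time, not from decoupling as such.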

This decoupled model is further enhanced by the adoption of open table formats like Delta Lake, Apache Iceberg, and Apache Hudi. These formats provide critical data management features directly on top of object storage, including:

- ACID Transactions: Ensuring data reliability and consistency, even with concurrent reads and writes.

- Schema Evolution: Allowing schemas to change over time without breaking existing applications.

- Time Travel: The ability to query a table as it existed at an earlier version or timestamp, which is useful for audits and reproducible ML experiments.

- Data Versioning and Rollbacks: Every commit produces a new table version, so a table can be restored to a known-good state after a bad write.

- Unified Batch and Streaming: Simplifying data pipelines by letting the same table serve both batch jobs and streaming reads and writes.
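The features listed above can be illustrated with a toy model. The sketch below is not how Delta Lake, Iceberg, or Hudi are implemented; `ToyTable` and its methods are hypothetical names, and the "log" is just an in-memory list. It shows the underlying idea: each commit publishes one complete new snapshot, so readers never see a half-written change and can still read older versions.

```python
from dataclasses import dataclass, field


@dataclass
class ToyTable:
    """Toy commit log: every successful commit appends one full snapshot."""
    snapshots: list = field(default_factory=lambda: [()])  # version 0 is empty

    def commit(self, new_rows):
        """Publish new_rows atomically: all become visible, or none do."""
        staged = []
        for row in new_rows:
            if "id" not in row:  # validate everything before publishing
                raise ValueError("row is missing required column 'id'")
            staged.append(dict(row))
        # Publishing is a single append of a complete snapshot.
        self.snapshots.append(self.snapshots[-1] + tuple(staged))
        return len(self.snapshots) - 1  # the new version number

    def read(self, version=None):
        """Read the latest snapshot, or an older one ("time travel")."""
        snapshot = self.snapshots[-1 if version is None else version]
        return [dict(row) for row in snapshot]


table = ToyTable()
v1 = table.commit([{"id": 1, "amount": 10.0}])
v2 = table.commit([{"id": 2, "amount": 5.0, "currency": "EUR"}])  # adds a column

try:
    table.commit([{"id": 3}, {"amount": 7.0}])  # second row is invalid
except ValueError:
    pass  # the failed commit left no partial data behind
```

Reading `table.read(version=v1)` returns the table as it was before the second commit, and the rejected third commit never produces a version at all. Real table formats achieve the same effect with metadata files on object storage rather than an in-memory list.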

Data Processing Engines: Powering Insights

Advanced processing engines are the workhorses of a Modern Big Data Architecture, enabling high-speed data manipulation, transformation, and analysis.

- Apache Spark: Remains a dominant force for large-scale data processing, offering in-memory computation, support for various languages (Scala, Python, Java, R), and modules for SQL, streaming, and machine learning. Its ability to handle both batch and stream processing makes it incredibly versatile.

- Apache Flink: Excels in real-time stream processing, offering true event-at-a-time processing with low latency, stateful computations, and fault tolerance. It’s ideal for use cases requiring immediate insights, such as fraud detection or personalized recommendations.

- Presto/Trino: These distributed SQL query engines are designed for interactive analytical queries across vast datasets, and can federate a single query across lakehouse tables and other data sources.
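As a rough illustration of event-at-a-time, stateful processing of the kind described for fraud detection, the sketch below uses plain Python rather than any engine's API. The window length, the threshold, and the `detect_bursts` function are assumptions made up for this example, and it assumes events arrive in timestamp order, which real streams do not guarantee.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 60  # hypothetical sliding-window length
MAX_EVENTS = 3       # hypothetical per-card threshold within the window


def detect_bursts(events):
    """Process (timestamp, card_id) events one at a time with per-key state.

    Yields an alert as soon as a card exceeds MAX_EVENTS inside the window.
    """
    recent = defaultdict(deque)  # keyed state: card_id -> recent timestamps
    for timestamp, card_id in events:
        window = recent[card_id]
        window.append(timestamp)
        # Evict timestamps that have fallen out of the sliding window.
        while window[0] <= timestamp - WINDOW_SECONDS:
            window.popleft()
        if len(window) > MAX_EVENTS:
            yield (timestamp, card_id, len(window))


stream = [
    (0, "card-A"), (10, "card-B"), (15, "card-A"),
    (20, "card-A"), (25, "card-A"), (90, "card-A"),
]
alerts = list(detect_bursts(stream))
```

The alert fires on the fourth `card-A` event rather than at the end of a batch, which is the latency benefit of processing each event as it arrives. A production engine adds what this sketch leaves out: durable state, handling of out-of-order events, and recovery after failure.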

Data Governance and Security: The Pillars of Trust

Ensuring the integrity, quality, and protection of data is paramount within any Modern Big Data Architecture. This goes beyond simple access control and encompasses a holistic approach to data lifecycle management.

- Unified Data Catalog: A central metadata repository that provides a comprehensive view of all data assets across the enterprise. It helps data consumers discover, understand, and trust data by providing context, lineage, and usage policies. Tools like Apache Atlas or commercial offerings play a crucial role here.

- Fine-Grained Access Control: Beyond role-based access, modern architectures demand granular control, allowing permissions to be set at the column, row, or even cell level. This is essential for compliance (e.g., masking PII) and ensuring only authorized users or applications can access sensitive data segments.

- Data Quality and Observability: Robust data pipelines and validation processes are integrated at every stage to maintain high standards of data accuracy, completeness, and consistency. Data observability platforms monitor data health, proactively identifying anomalies and potential drift.

- Compliance and Privacy: Adherence to evolving data regulations like GDPR, CCPA, HIPAA, and industry-specific mandates is built directly into the architectural framework. This includes data masking, encryption at rest and in transit, auditing capabilities, and data retention policies.
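Row-level filtering and column-level masking, as described above, can be sketched in a few lines. The `POLICIES` table, role names, and helper functions below are hypothetical; real catalogs and query engines express such policies in their own syntax. The unsalted hash is for illustration only and would not count as adequate pseudonymization on its own.

```python
import hashlib

# Hypothetical policies: which rows a role may see and which columns are masked.
POLICIES = {
    "analyst_eu": {
        "row_filter": lambda row: row["region"] == "EU",
        "masked_columns": {"email"},
    },
    "auditor": {
        "row_filter": lambda row: True,
        "masked_columns": set(),
    },
}


def mask(value):
    """Replace a sensitive value with a short, stable token (illustrative only)."""
    return "masked:" + hashlib.sha256(value.encode("utf-8")).hexdigest()[:8]


def read_with_policy(rows, role):
    """Apply row-level filtering, then column-level masking, for one role."""
    policy = POLICIES[role]
    visible = []
    for row in rows:
        if not policy["row_filter"](row):
            continue
        visible.append({
            column: mask(value) if column in policy["masked_columns"] else value
            for column, value in row.items()
        })
    return visible


customers = [
    {"id": 1, "region": "EU", "email": "ana@example.com"},
    {"id": 2, "region": "US", "email": "bob@example.com"},
]
eu_view = read_with_policy(customers, "analyst_eu")
audit_view = read_with_policy(customers, "auditor")
```

The key design choice is that the policy is applied at read time by the platform, so the same stored table yields different views per role without maintaining separate redacted copies.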

Leveraging Cloud for Agility and Scalability

Cloud platforms are foundational to the agility, elasticity, and efficiency of Modern Big Data Architecture implementations. They provide the underlying infrastructure and a rich ecosystem of managed services.

- Infrastructure as a Service (IaaS): Provides foundational compute (VMs), storage (object storage, block storage), and networking resources on demand, offering maximum control.

- Platform as a Service (PaaS): Offers managed services for data processing (e.g., AWS EMR, Azure Databricks, Google Dataproc), analytics (e.g., Snowflake, BigQuery), and machine learning (e.g., SageMaker, Azure ML, Vertex AI). These abstract away infrastructure management, allowing teams to focus on data and applications.

- Serverless Computing: Further abstracts infrastructure, allowing focus purely on code execution and data pipelines without provisioning or managing servers (e.g., AWS Lambda, Azure Functions, Google Cloud Functions for event-driven processing).

Challenges and Barriers to Adoption

While the benefits of a Modern Big Data Architecture are compelling, enterprises often face significant hurdles during adoption and implementation:

- Complexity and Skill Gaps: The integration of diverse technologies (data lakes, open table formats, various processing engines, cloud services) can be complex. There’s a persistent shortage of skilled data engineers, architects, and MLOps practitioners who can design, build, and maintain these sophisticated systems.

- Data Quality and Governance: Ensuring consistent data quality across vast, disparate datasets is an ongoing challenge. Implementing robust data governance, including data cataloging, lineage tracking, and fine-grained access control, requires meticulous planning and continuous effort.

- Cost Management: While the cloud offers elasticity, costs in a dynamic big data environment are hard to predict. Without proper optimization and FinOps practices, cloud bills can quickly escalate, especially with inefficient resource utilization or runaway compute jobs.

- Data Silos and Integration: Despite efforts to unify data, legacy systems and departmental silos can persist, hindering the creation of a truly holistic view of enterprise data. Integrating these disparate sources cleanly and efficiently is a major engineering effort.

- MLOps Complexity and Data Drift: For AI-driven organizations, operationalizing machine learning models (MLOps) within this architecture adds another layer of complexity. Challenges include managing model versions, deploying models reliably, monitoring performance, and addressing data drift, where the statistical distribution of the input features changes over time. A related problem is concept drift, where the relationship between the input variables and the target variable changes. Both can degrade model accuracy.
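One common way to monitor for a shift in an input feature's distribution is the Population Stability Index (PSI). The sketch below is a minimal plain-Python version; the sample values and bin edges are invented for illustration, and the 0.25 threshold mentioned afterwards is a commonly quoted rule of thumb rather than a universal standard.

```python
import math


def population_stability_index(baseline, current, bin_edges):
    """PSI between two samples of one numeric feature, over shared bins."""
    def proportions(values):
        counts = [0] * (len(bin_edges) + 1)
        for value in values:
            index = sum(value >= edge for edge in bin_edges)  # pick the bin
            counts[index] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Invented samples of an "age" feature at training time and at serving time.
training_ages = [22, 25, 31, 38, 41, 45, 52, 58, 63, 67]
serving_same = list(training_ages)
serving_older = [48, 52, 55, 59, 61, 64, 66, 70, 72, 75]
edges = [30, 40, 50, 60]  # hypothetical bin boundaries

psi_same = population_stability_index(training_ages, serving_same, edges)
psi_shifted = population_stability_index(training_ages, serving_older, edges)
```

An unchanged distribution gives a PSI of zero, while the older serving population produces a value far above 0.25, a level often treated as a signal to investigate or retrain. PSI only looks at inputs, so it will not catch a change in how inputs relate to the target.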

Business Value and Return on Investment (ROI)

Overcoming these challenges yields substantial business value and a significant ROI. A robust Modern Big Data Architecture provides:

- Faster Time to Insight and Market: By streamlining data ingestion, processing, and analysis, businesses can derive insights much quicker. This enables faster decision-making, quicker responses to market changes, and accelerated deployment of new products and services.

- Enhanced Data Quality for AI: The structured and governed nature of the Data Lakehouse helps ensure that AI/ML models are trained on high-quality, reliable data, which supports more accurate predictions and better model performance. Direct data access for ML frameworks and integration with major ML clouds simplify the MLOps lifecycle.

- Significant Cost Efficiencies: The decoupled storage and compute model, combined with the elasticity of cloud resources, allows organizations to pay only for the resources they consume. This reduces the need for expensive, fixed-capacity on-premises hardware and can lower operational expenditures.

- Improved Decision-Making and Innovation: Democratizing access to high-quality, comprehensive data across the organization empowers every department to make data-informed decisions. This fosters a culture of innovation, enabling the development of new data products, services, and business models.

- Scalability and Future-Proofing: The architecture is designed to scale to petabytes of data and thousands of concurrent users. Its open formats and cloud-native design make it easier to adopt new engines and methodologies as they emerge, which helps future-proof the enterprise’s data strategy.

Comparative Insight: Modern Big Data Architecture vs. Traditional Approaches

To fully appreciate the paradigm shift brought by Modern Big Data Architecture, particularly the Data Lakehouse model, it’s crucial to compare it against its predecessors: the Traditional Data Warehouse and the basic Data Lake.

Traditional Data Warehouse

The traditional data warehouse, epitomized by relational platforms such as Teradata or Oracle Exadata, has served enterprises for decades.

- Strengths: Excellent for structured data, strong ACID compliance, mature tools for reporting and BI, robust governance for known data.

- Weaknesses: Rigid schema-on-write model, expensive scalability (especially where compute and storage must be scaled together), poor handling of unstructured/semi-structured data, and limited support for real-time processing and for advanced analytics such as machine learning directly on raw data. It’s often slow to adapt to new data sources or business requirements.

Traditional Data Lake

Emerging to address the limitations of data warehouses, the data lake offered a low-cost repository for all data types.

- Strengths: Stores raw data in native formats, schema-on-read flexibility, cost-effective storage (object storage), scalable for vast data volumes, excellent for exploratory data science and machine learning on raw data.

- Weaknesses: Without governance, data lakes often degrade into “data swamps”. Poor data quality and the absence of ACID transactions make it difficult to ensure consistency and reliability, which makes lakes a poor fit for mission-critical BI and reporting. Security and access control can also be more complex to manage uniformly.

The Modern Big Data Architecture (Data Lakehouse) Advantage

The Modern Big Data Architecture, centered around the Data Lakehouse concept, seeks to combine the best aspects of both.

- Unified Platform: It provides a single platform that can handle diverse data types, from raw unstructured data to highly curated structured datasets. This eliminates the need for separate, often redundant, data copies in a data lake for raw data and a data warehouse for curated data.

- ACID Transactions & Data Quality: By layering open table formats (Delta Lake, Iceberg) over object storage, the lakehouse brings the reliability and data integrity of data warehouses to the flexible, scalable environment of a data lake. This ensures data consistency and quality for both analytics and ML workloads.

- Flexibility & Governance: It supports both schema-on-read for exploration and schema enforcement for critical reporting, coupled with robust data governance features like unified data catalogs and fine-grained access control.

- Real-time & Batch Processing: Designed to support a wide array of workloads, from low-latency streaming analytics to large-scale batch transformations, all on the same underlying data.

- AI/ML Native: Directly integrates with AI/ML frameworks, allowing data scientists to access high-quality, versioned data directly from the lakehouse without complex ETL processes into separate feature stores or ML platforms.

While alternatives like the Data Mesh propose a decentralized approach to data ownership and architecture, and the Two-tier Lambda Architecture aims to combine batch and speed layers, the Data Lakehouse stands out for its practical unification of data storage and processing capabilities under a single, governed paradigm. It streamlines the data journey, reduces complexity, and accelerates the path from raw data to actionable insights for enterprise-wide applications.

World2Data Verdict: Embracing the Evolving Data Landscape

The era of siloed data systems and rigid architectures is rapidly drawing to a close. World2Data.com asserts that for enterprises aiming to truly thrive in the data-driven economy, embracing a sophisticated Modern Big Data Architecture is not merely an upgrade, but a fundamental strategic repositioning. The Data Lakehouse paradigm, with its decoupled storage and compute, reliance on open table formats, and cloud-native DNA, represents the optimal blend of flexibility, scalability, and governance required for today’s diverse data needs. We recommend that organizations prioritize investments in comprehensive data governance tooling, particularly unified data catalogs and fine-grained access controls, to unlock the full potential of their data assets while maintaining compliance and trust. Furthermore, fostering a culture of continuous learning and upskilling in key areas such as MLOps and cloud data engineering will be crucial for successful implementation and sustained innovation. The future of enterprise data management lies in these intelligent, integrated, and adaptable architectures, empowering businesses not just to analyze the past but to confidently shape the future.