Processing Engine: Running Large-Scale Data Computations – World2Data Deep Dive

The digital age is characterized by an unprecedented deluge of data, making efficient and scalable data processing not just an advantage, but a fundamental requirement for innovation and competitive edge. A robust Processing Engine stands as the architectural backbone for tackling this challenge, enabling organizations to transform raw, massive datasets into actionable intelligence at speed. This deep dive by World2Data explores the critical role of processing engines in modern data landscapes, dissecting their core components, challenges, and profound impact on business intelligence and advanced analytics.

Introduction: The Imperative for Powerful Data Processing

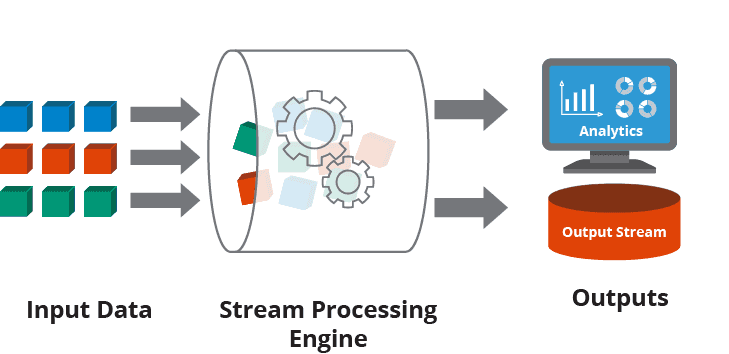

In today’s data-driven world, the ability to process and analyze vast quantities of information is paramount for any organization aiming to stay competitive. From real-time financial transactions to complex scientific simulations and personalized customer experiences, the need for a highly efficient Processing Engine is universal. These engines are sophisticated frameworks designed to execute complex computations across distributed datasets, overcoming the limitations of traditional, single-machine processing. They are the workhorses that power big data analytics, machine learning pipelines, and artificial intelligence initiatives by providing the necessary computational horsepower to handle data at scale. Understanding the architecture, capabilities, and strategic importance of these engines is crucial for any enterprise navigating the complexities of large-scale data computations.

As a foundational element within the Big Data Processing Engine/Framework category, these systems are not merely about speed; they are about resilience, scalability, and the ability to extract value from data that would otherwise remain untapped. Our objective in this article is to provide a comprehensive analysis of what makes a world-class processing engine, exploring its technical underpinnings, its role in modern data stacks, and its transformative potential for businesses across all sectors.

Core Breakdown: Architecture and Capabilities of a Modern Processing Engine

A modern Processing Engine is a distributed system built to process datasets that can reach petabyte scale, efficiently and reliably. It does so by combining several technologies and architectural patterns, described below.

Underlying Technologies and Architecture

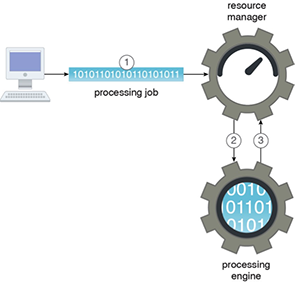

- Distributed Computing: The fundamental principle is to break down large computational tasks into smaller parts and distribute them across a cluster of machines. This parallelization significantly reduces processing time, making it feasible to analyze datasets that would overwhelm a single server. Frameworks like Apache Hadoop MapReduce, Apache Spark, and Apache Flink exemplify this paradigm, orchestrating thousands of nodes to work in concert.

- In-memory Processing: A major performance gain came with in-memory processing. Instead of writing intermediate results to slower disk storage between every step, these engines keep frequently accessed data in RAM where it fits, which substantially speeds up iterative algorithms and interactive analytics. This is a key differentiator for engines like Apache Spark compared with disk-based Hadoop MapReduce, particularly for tasks such as machine learning model training and interactive queries.

- Fault Tolerance: Given the complexity and scale of distributed systems, hardware and software failures are inevitable, so a robust processing engine builds in recovery mechanisms. The mechanism differs by engine: Hadoop relies on replicated data blocks in HDFS and re-runs failed tasks, Spark recomputes lost partitions from their recorded lineage, and Flink restores operator state from periodic checkpoints. If one node fails, the system can recover automatically and continue processing, usually with some delay but without losing data. This supports the high availability and data integrity that critical business operations require.

- Often Open Source: Many of the leading processing engines, such as Apache Spark, Apache Flink, and Apache Hadoop, are open-source projects. This fosters a vibrant community of developers, leading to continuous innovation, extensive documentation, and a rich ecosystem of connectors and libraries. The open-source nature also provides flexibility and cost-effectiveness for organizations, allowing them to customize and integrate these engines into their existing infrastructure.
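
To make the first and third ideas above concrete, here is a minimal, single-machine sketch in plain Python. It is not how Spark, Flink, or Hadoop are implemented: worker threads stand in for cluster nodes, and the function names (`split_into_partitions`, `run_with_retry`, `distributed_word_count`) are hypothetical. It only illustrates the pattern of splitting work into partitions, processing them in parallel, merging partial results, and re-running a failed task from its input.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(records, num_partitions):
    """Deal records round-robin into num_partitions lists."""
    return [records[i::num_partitions] for i in range(num_partitions)]


def count_words(partition):
    """Map step: count the words found in one partition."""
    counts = Counter()
    for line in partition:
        counts.update(line.lower().split())
    return counts


def run_with_retry(task, partition, max_attempts=3):
    """Re-run a failed task from its input partition (recomputation-style recovery)."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task(partition)
        except RuntimeError:
            if attempt == max_attempts:
                raise  # give up after the last attempt


def distributed_word_count(records, num_partitions=4, task=count_words):
    """Process partitions in parallel, then merge the partial counts (reduce step)."""
    partitions = split_into_partitions(records, num_partitions)
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partials = list(pool.map(lambda p: run_with_retry(task, p), partitions))
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total


lines = ["spark flink spark", "hadoop spark", "flink hadoop hadoop"]
print(distributed_word_count(lines, num_partitions=2))
```

Because each partition is processed independently and the merge step is a simple sum, a failed partition can be recomputed without touching the others. That independence is the property real engines rely on when they reschedule work after a node failure.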

Key Components and Features

- Scalable Data Ingestion: Before processing, data must be ingested efficiently. Modern engines integrate seamlessly with various data sources, including streaming platforms (e.g., Apache Kafka), batch storage (e.g., HDFS, S3), and relational databases.

- Transformation and ETL Capabilities: These engines provide powerful APIs and query languages (e.g., Spark SQL and the PySpark DataFrame API) for complex data transformations, cleaning, aggregation, and integration – essential steps in any Extract, Transform, Load (ETL) or Extract, Load, Transform (ELT) pipeline.

- Programmatic Access Control Mechanisms: Data governance is critical. Processing engines, or more often the platforms and catalogs they are deployed with, provide granular, programmatic controls over who can access, process, and transform data. This includes role-based access control (RBAC), attribute-based access control (ABAC), and integration with enterprise identity management systems.

- Data Masking Capabilities via Transformations: For sensitive data, engines can apply transformation-based data masking, anonymization, or pseudonymization techniques during processing. This ensures compliance with privacy regulations (e.g., GDPR, CCPA) without hindering analytical capabilities.

- Integration with External Governance Tools: Beyond built-in features, robust processing engines are designed to integrate with external data governance platforms, metadata management tools, and data catalogs, providing a unified view and control plane for data assets.
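
As an illustration of the access-control and masking points above, the following plain-Python sketch pseudonymizes every column a role is not cleared to read. The role names, the `CLEAR_TEXT_COLUMNS` policy table, and the function names are hypothetical; in a real deployment the same logic would typically run as a column transformation inside the engine, with the policy supplied by a governance tool.

```python
import hashlib
import hmac

# Hypothetical policy: the columns each role may see in clear text.
CLEAR_TEXT_COLUMNS = {
    "analyst": {"country", "amount"},
    "compliance": {"email", "country", "amount"},
}


def pseudonymize(value, secret_key):
    """Replace a value with a keyed hash; equal inputs map to equal tokens."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # shortened token, for readability only


def mask_record(record, role, secret_key):
    """Return a copy of the record with non-permitted columns pseudonymized."""
    allowed = CLEAR_TEXT_COLUMNS.get(role, set())  # unknown roles see nothing in clear text
    return {
        column: value if column in allowed else pseudonymize(str(value), secret_key)
        for column, value in record.items()
    }


record = {"email": "jane@example.com", "country": "DE", "amount": 42.5}
print(mask_record(record, role="analyst", secret_key=b"demo-key"))
```

A keyed hash (HMAC) is used rather than a plain hash so that tokens cannot be reproduced without the key, while equal inputs still produce equal tokens and remain usable for joins and group-bys. Whether this level of pseudonymization satisfies a given regulation is a legal question the sketch does not settle.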

Primary AI/ML Integration

The synergy between a powerful Processing Engine and AI/ML is profound. These engines are not just for processing raw data; they are foundational for building, training, and deploying machine learning models at scale.

- Built-in Libraries for Scalable Machine Learning Algorithms: Many processing engines, particularly Apache Spark with its MLlib library, offer optimized, distributed implementations of common machine learning algorithms. This allows data scientists to train models like linear regression, logistic regression, decision trees, and clustering algorithms on massive datasets without needing to re-engineer them for distributed execution.

- Distributed Feature Engineering Support: Feature engineering – the process of transforming raw data into features suitable for machine learning models – is often the most time-consuming part of an ML project. Processing engines provide robust capabilities for distributed feature engineering, allowing the creation of complex features across vast datasets efficiently and scalably.

- API Integration with External ML Frameworks: While some engines have built-in ML capabilities, they can also be integrated with popular external ML frameworks such as TensorFlow, PyTorch, and scikit-learn. This lets data scientists keep their preferred tools while still benefiting from the processing engine’s distributed computation power for data preparation and model inference.
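
The distributed feature engineering point can be sketched with a common pattern: each partition computes a small summary, the summaries are combined into global statistics, and every partition then applies the same transformation. The example below standardizes a numeric feature this way in plain Python. The function names are hypothetical, and partitions are ordinary lists rather than cluster-resident data.

```python
import math


def partial_stats(partition):
    """Per-partition summary: count, sum, and sum of squares."""
    count = len(partition)
    total = sum(partition)
    total_sq = sum(x * x for x in partition)
    return count, total, total_sq


def combine_stats(partials):
    """Merge partition summaries into a global mean and population standard deviation."""
    count = sum(p[0] for p in partials)
    total = sum(p[1] for p in partials)
    total_sq = sum(p[2] for p in partials)
    mean = total / count
    variance = max(total_sq / count - mean * mean, 0.0)  # clamp tiny negative rounding errors
    return mean, math.sqrt(variance)


def standardize(partitions):
    """Scale every value to zero mean and unit variance using the global statistics."""
    mean, std = combine_stats([partial_stats(p) for p in partitions])
    scale = std if std > 0 else 1.0  # avoid dividing by zero for constant features
    return [[(x - mean) / scale for x in p] for p in partitions]


partitions = [[1.0, 2.0], [3.0, 4.0, 5.0]]
print(standardize(partitions))
```

Only three numbers per partition cross the network in this scheme, regardless of partition size. The sum-of-squares formula is used here for brevity; it can lose precision on large values, so production code would more likely use a numerically stable merge.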

Challenges and Barriers to Adoption

Despite their immense power, implementing and managing large-scale Processing Engines comes with its own set of challenges:

- Complexity of Deployment and Management: Setting up, configuring, and maintaining a distributed processing cluster requires significant expertise. Issues like resource allocation, performance tuning, and troubleshooting failures across hundreds or thousands of nodes can be daunting for organizations without specialized IT teams.

- Cost and Resource Intensiveness: While open-source options reduce software licensing costs, the infrastructure required to run these engines can be substantial. Large clusters demand significant compute, memory, and storage resources, leading to considerable operational expenses, especially in cloud environments if not managed efficiently.

- Talent Gap: There’s a persistent shortage of skilled professionals proficient in big data technologies, distributed systems, and specific processing engine frameworks like Spark or Flink. This talent gap can hinder adoption and effective utilization.

- Data Governance and Security: Ensuring robust data governance, access control, and security across a distributed data landscape is complex. Implementing consistent policies for data lineage, quality, privacy, and compliance across various data sources and processing stages requires careful planning and specialized tools.

- Evolving Ecosystem: The big data ecosystem is constantly evolving, with new frameworks, tools, and best practices emerging rapidly. Keeping up with these changes and integrating them into existing pipelines can be a continuous challenge.

Business Value and ROI

The investment in a powerful Processing Engine yields significant returns for businesses across various sectors:

- Faster Insights and Decision-Making: By enabling rapid processing of large datasets, these engines drastically reduce the time from data ingestion to actionable insights. This agility allows businesses to respond quickly to market changes, customer demands, and emerging threats, fostering a proactive rather than reactive strategy.

- Enhanced Operational Efficiency: Automation of complex ETL processes, real-time anomaly detection, and predictive maintenance capabilities powered by these engines lead to optimized operations, reduced downtime, and lower operational costs.

- Competitive Advantage through Data Monetization: Organizations can uncover hidden patterns, customer behaviors, and market trends from their vast data reserves. This leads to the development of new data products, personalized services, and innovative business models, creating a distinct competitive edge.

- Improved Data Quality for AI/ML: By efficiently processing, cleaning, and transforming data at scale, processing engines provide the high-quality, structured datasets essential for training robust and accurate AI and machine learning models. This directly translates to more reliable predictions and better automated decision-making.

- Scalability and Flexibility: Businesses can scale their data processing capabilities up or down based on demand, avoiding costly over-provisioning and ensuring that their infrastructure can grow alongside their data volumes and analytical needs. This flexibility is particularly beneficial in dynamic cloud environments.

Comparative Insight: Processing Engine vs. Traditional Data Lake/Data Warehouse

Understanding the role of a modern Processing Engine requires drawing a clear distinction from traditional data storage and analytical paradigms like Data Lakes and Data Warehouses. While these technologies often coexist within a modern data architecture, their primary functions and capabilities differ significantly.

A **Traditional Data Warehouse** is primarily optimized for structured data storage and OLAP (Online Analytical Processing) queries. Data is typically cleaned, transformed, and loaded into a predefined schema before being stored. This structured approach ensures high data quality and fast analytical query performance for known business questions, but it struggles with diverse, unstructured data and real-time processing needs. Its schema-on-write approach offers strong governance but limits flexibility and agility, making it less suitable for exploratory data science or raw data processing at scale.

A **Data Lake**, conversely, is designed to store vast amounts of raw, un-transformed data in its native format, regardless of its structure. It employs a “schema-on-read” approach, offering immense flexibility for storing everything from relational databases to images, videos, and IoT sensor data. While a Data Lake provides a cost-effective storage solution for big data, it typically lacks the inherent processing capabilities to transform and analyze this raw data efficiently on its own. It serves as a vast repository, but without a powerful processing engine, extracting value from this raw data can be computationally intensive and slow.
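
The difference between schema-on-write and schema-on-read can be shown in a few lines of plain Python. This is a simplified sketch with a hypothetical two-field `SCHEMA`. In the first function a record is validated before it is stored, as a warehouse load would do; in the second, raw lines are kept as-is and the schema is applied only when the data is read.

```python
import json

# Hypothetical schema: field name -> expected Python type.
SCHEMA = {"user_id": int, "amount": float}


def write_validated(table, record):
    """Schema-on-write: reject a record at load time unless it matches the schema."""
    if set(record) != set(SCHEMA):
        raise ValueError(f"unexpected fields: {sorted(record)}")
    for field, expected_type in SCHEMA.items():
        if not isinstance(record[field], expected_type):
            raise ValueError(f"{field} must be {expected_type.__name__}")
    table.append(record)


def read_with_schema(raw_lines):
    """Schema-on-read: interpret raw JSON lines under the schema at query time."""
    for line in raw_lines:
        try:
            raw = json.loads(line)
            yield {field: cast(raw[field]) for field, cast in SCHEMA.items()}
        except (ValueError, KeyError, TypeError):
            continue  # skip lines that cannot be read under this schema


warehouse_table = []
write_validated(warehouse_table, {"user_id": 1, "amount": 9.99})

lake_lines = ['{"user_id": "7", "amount": "3.5", "source": "app"}', "not json at all"]
print(list(read_with_schema(lake_lines)))
```

The lake accepted both lines without complaint, and the cost of interpreting them was deferred to read time. That deferred work is what a processing engine performs at scale.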

This is where the Processing Engine steps in. It is not primarily a storage solution like a Data Warehouse or Data Lake, but rather the computational layer that acts upon the data stored within them. A processing engine leverages the raw data in a Data Lake for exploratory analysis, feature engineering, and real-time streams, or it can efficiently transform and cleanse data before it’s loaded into a Data Warehouse. It provides the “compute” for big data, enabling organizations to:

- Process Unstructured and Semi-structured Data: While Data Lakes store it, processing engines effectively analyze it, converting raw logs, social media feeds, or sensor data into usable formats.

- Execute Complex Transformations: Beyond simple SQL queries, processing engines handle highly complex, iterative transformations and aggregations across massive datasets that would be inefficient or impossible in a traditional Data Warehouse.

- Enable Real-time Analytics: Unlike the batch-oriented nature of many Data Warehouses, modern processing engines like Apache Flink or Spark Structured Streaming (the successor to the original Spark Streaming API) are built for continuous, low-latency processing of data streams, crucial for real-time dashboards, fraud detection, and immediate customer responses.

- Power Machine Learning and AI: As highlighted, the distributed computing capabilities of engines like Spark are indispensable for training and deploying machine learning models on large datasets, a function far beyond the scope of a traditional Data Warehouse or the raw storage of a Data Lake.
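
To illustrate the real-time analytics point, here is a minimal sketch of a tumbling-window count, a basic building block of stream processing. It is plain Python with a hypothetical function name, and it assumes events arrive in timestamp order with integer timestamps in seconds. Engines such as Flink also handle out-of-order events, which this sketch does not attempt.

```python
def tumbling_window_counts(events, window_seconds):
    """Yield (window_start, counts_per_key) each time a fixed-size window closes.

    events: iterable of (timestamp_seconds, key) pairs, assumed ordered by timestamp.
    """
    current_start = None
    counts = {}
    for timestamp, key in events:
        window_start = timestamp - (timestamp % window_seconds)
        if current_start is not None and window_start != current_start:
            yield current_start, counts  # the previous window is complete
            counts = {}
        current_start = window_start
        counts[key] = counts.get(key, 0) + 1
    if current_start is not None:
        yield current_start, counts  # flush the last open window


events = [(0, "login"), (3, "purchase"), (9, "login"), (10, "login"), (25, "purchase")]
for window_start, counts in tumbling_window_counts(events, window_seconds=10):
    print(window_start, counts)
```

Results are emitted as soon as a window closes, not after the whole dataset has been read. That is the essential difference from a batch job, and it is why the function is written as a generator.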

In essence, a Data Lake provides the reservoir of data, a Data Warehouse provides a structured view for business intelligence, but a Processing Engine provides the industrial-strength machinery to refine, analyze, and extract intelligence from these vast data stores. They are complementary components, with the processing engine acting as the crucial bridge that turns raw data potential into tangible business outcomes.

World2Data Verdict: The Unstoppable Momentum of Processing Engines

The trajectory of data processing technology is unequivocally towards more intelligent, efficient, and scalable engines. At World2Data, we believe that the modern Processing Engine is not merely an infrastructure component; it is the central nervous system of any data-driven enterprise. Its capability to handle massive, diverse datasets at unprecedented speeds is critical for fueling innovation, from personalized customer experiences to groundbreaking scientific discoveries. Looking ahead, we predict a deeper convergence of processing engines with serverless computing paradigms and even more sophisticated AI/ML integration, making them virtually invisible yet immensely powerful tools for developers and data scientists. Organizations must strategically invest in these technologies, focusing not just on deployment, but on fostering the talent and governance frameworks necessary to fully harness their transformative power. The future of competitive advantage lies squarely in the hands of those who can master large-scale data computations, making the processing engine an indispensable asset in the digital economy.