Compute Layer: Scaling Data Workloads for Peak Efficiency and Performance

The Compute Layer is the engine of modern data platforms, orchestrating the processing power required to transform raw data into actionable insights. Its ability to scale efficiently is paramount for handling ever-increasing data volumes and diverse analytical demands. This article delves into the architecture and strategies that enable robust compute layers, highlighting their crucial interplay with the underlying Storage Layer to achieve unparalleled performance and cost-effectiveness in distributed data processing infrastructure.

Introduction: Understanding the Compute Layer Paradigm

In today’s data-driven world, organizations face the relentless challenge of processing colossal volumes of information with increasing speed and complexity. At the heart of this capability lies the Compute Layer, the dynamic processing infrastructure that executes data workloads, from simple queries to intricate machine learning model training. World2Data.com identifies the efficient scaling of this layer as a core principle in modern IT, deeply influenced by and dependent on a robust Storage Layer.

This deep dive explores how modern data platforms achieve this scalability, focusing on architectural innovations and best practices. We will examine the core technologies, the inherent challenges, and the significant business value derived from an optimized compute layer, ultimately comparing its advanced capabilities against traditional data architectures. Our objective is to provide a comprehensive understanding of how data workloads scale efficiently, ensuring that resources are perfectly matched to demand, thereby driving faster insights and significant ROI.

Core Breakdown: The Architecture of Scalable Compute Layers

The Paradigm Shift: Decoupling Compute and Storage

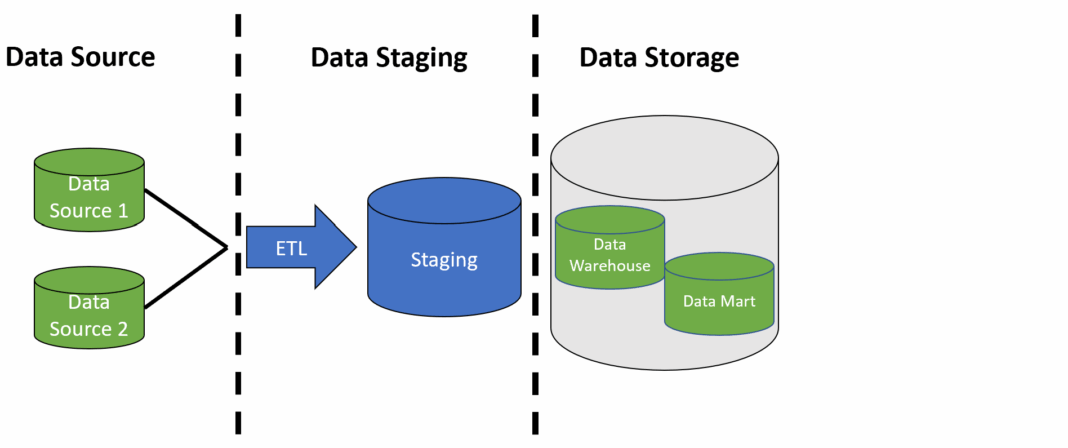

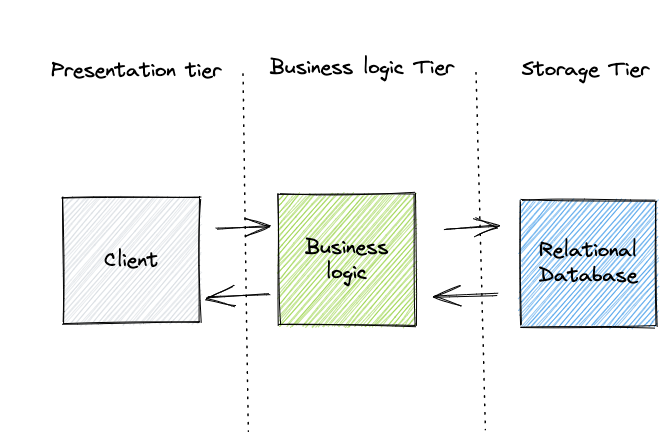

The foundation of modern, scalable data processing lies in the architectural principle of separating compute from storage. This decoupling is a fundamental shift from monolithic systems where these components were tightly coupled, leading to resource contention and inflexible scaling. In a decoupled architecture, the Compute Layer can be dynamically provisioned and scaled independently based on workload needs, without impacting data persistence or accessibility. This agility allows organizations to right-size their processing power for any given task, from ad-hoc analytics to continuous ETL pipelines, ensuring efficiency without the overhead of oversized infrastructure. The Storage Layer, often comprising distributed object storage like Amazon S3, Google Cloud Storage, or Azure Blob Storage, then serves as a highly available, durable, and performant repository for all data. This setup ensures data is consistently available and accessible, with high-throughput and low-latency access directly translating to efficient processing within the compute layer, especially for data-intensive applications.

Key Components and Technologies

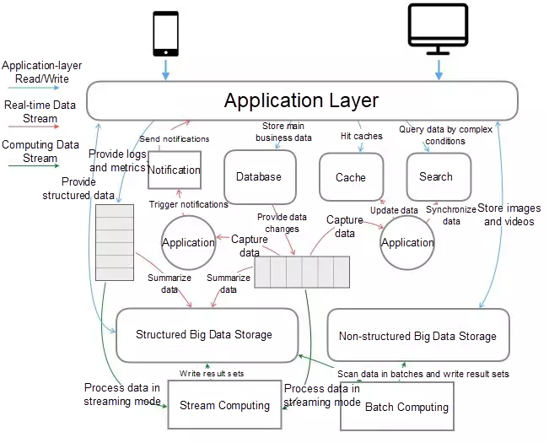

To achieve efficient data workload scaling, the Compute Layer leverages an array of advanced technologies and architectural patterns:

- Distributed Processing Engines: Platforms like Apache Spark, Presto/Trino, and Apache Flink form the backbone of distributed data processing. They enable massively parallel processing (MPP) by breaking down large tasks into smaller sub-tasks that can be executed concurrently across multiple nodes. These engines are designed to interact seamlessly with distributed Storage Layer solutions, fetching and processing data in parallel.

- Resource Orchestration: Technologies such as Kubernetes and Apache YARN play a critical role in managing and allocating compute resources. They provide the frameworks for deploying, scaling, and managing containerized applications or distributed jobs, ensuring optimal resource utilization and isolation across diverse workloads.

- Serverless and Elastic Compute: Cloud-native solutions like Snowflake’s virtual warehouses, Google BigQuery, AWS Athena, and Databricks’ auto-scaling clusters exemplify elastic and serverless compute. These services automatically scale resources up or down based on real-time demand, often offering pay-as-you-go pricing models. This elasticity minimizes idle capacity and optimizes operational costs.

- Specialized Compute Units: For specific, highly demanding workloads, specialized compute units are integrated into the layer. Graphics Processing Units (GPUs) are essential for accelerating machine learning model training and inference, while Field-Programmable Gate Arrays (FPGAs) can be used for high-performance, custom data processing tasks. The ability of the Compute Layer to integrate these specialized resources flexibly further enhances its efficiency for complex AI/ML integrations.

Challenges and Barriers to Efficient Scaling

While modern compute layers offer immense advantages, several challenges must be addressed to unlock their full potential:

- Workload Contention: Managing diverse, concurrent workloads (e.g., real-time analytics, batch processing, ML training) on shared compute resources can lead to contention and performance degradation if not properly isolated and prioritized. Resource isolation and workload management features are crucial for maintaining consistent performance.

- Data Transfer Bottlenecks: Despite the decoupling of compute and storage, significant latency and throughput issues can arise if data transfer between the Compute Layer and the Storage Layer is not optimized, especially over network boundaries. Strategies like data locality awareness and intelligent caching are essential.

- Cost Management and Optimization: The elasticity of cloud compute can lead to runaway costs if not carefully monitored and managed. Proper cost management and attribution, coupled with intelligent auto-scaling policies, are vital to preventing budget overruns.

- Operational Complexity: Managing sophisticated distributed systems and integrating them with MLOps platforms for scalable ML workflows can introduce significant operational complexity, requiring specialized skills and robust automation.

- Data Locality vs. Separation: While separation offers flexibility, achieving optimal performance often requires bringing computation closer to the data. Advanced caching strategies and intelligent query planners are used to minimize data movement and leverage data locality where beneficial.

Business Value and ROI of an Optimized Compute Layer

Investing in a well-architected and efficiently scaled Compute Layer yields substantial business value and a strong return on investment:

- Faster Time to Insight: By accelerating data processing and analysis, organizations can derive insights more rapidly, enabling quicker decision-making and a competitive edge. This directly contributes to business agility.

- Cost Efficiency: Elastic, serverless compute models ensure that organizations pay only for the resources they consume. Optimized resource utilization and reduced idle capacity lead to significant cost savings compared to traditional fixed-capacity infrastructure.

- Enhanced Agility and Innovation: A scalable Compute Layer supports rapid experimentation and the agile development of new data products and services. It provides the flexible infrastructure needed for diverse workloads, including intensive ML model training and inference, facilitating innovation across the enterprise.

- Improved Data Governance and Security: Modern compute layers offer features like role-based access control (RBAC) for compute resources, resource isolation, and audit trails, enhancing data governance and security postures for processing sensitive information.

Comparative Insight: Modern Compute Layers vs. Traditional Data Architectures

The evolution of the Compute Layer highlights a stark contrast with traditional data architectures, which often struggled with rigidity and scalability bottlenecks. Understanding this comparison is key to appreciating the transformative impact of modern approaches.

Traditional Monolithic Systems

Historically, enterprise data processing relied heavily on tightly coupled systems, primarily exemplified by:

- Traditional Data Warehouses: Systems like Teradata or early versions of Oracle Exadata featured integrated compute and storage. Scaling compute often meant scaling storage simultaneously, leading to inefficient resource utilization and high costs. Performance could degrade significantly with increasing data volumes or concurrent user queries, requiring expensive hardware upgrades.

- Early Hadoop Architectures: While Hadoop introduced distributed processing, its initial versions (like MapReduce with HDFS) tightly coupled compute with storage. Data processing jobs ran on nodes that also stored data, which was great for data locality but made independent scaling challenging and resource sharing difficult across diverse workloads.

These architectures were characterized by high upfront capital expenditure, complex capacity planning, limited flexibility for diverse workloads, and significant operational overhead. They were often not well-suited for the dynamic, unpredictable nature of modern data workloads, especially those involving AI and machine learning, where compute demands can fluctuate wildly.

Modern Decoupled Architectures

In contrast, modern data platforms, particularly those in the cloud, have championed the decoupled approach, where the Compute Layer and Storage Layer operate independently but in concert. Examples include:

- Cloud Data Warehouses (e.g., Snowflake, Google BigQuery, AWS Redshift): These platforms epitomize the separation of compute and storage. Snowflake, for instance, allows users to provision “virtual warehouses” (compute clusters) of various sizes that can scale up and down independently, reading data from a central, shared Storage Layer. This enables concurrent workloads to run without contention and offers unparalleled elasticity.

- Cloud Data Lakes/Lakehouses (e.g., Databricks, AWS EMR, Google Dataproc): These solutions combine the flexibility of data lakes (using object storage for raw data) with the analytical capabilities of data warehouses. They provide highly elastic compute engines (often based on Apache Spark) that can dynamically scale to process data stored in the lake. Databricks’ Lakehouse platform, for example, offers serverless compute options that abstract away infrastructure management entirely, allowing users to focus solely on their data workloads.

The advantages are clear: unprecedented elasticity, granular cost control (pay-as-you-go), support for a wide array of data types and workloads (batch, streaming, AI/ML), and significantly reduced operational burden. The modern Compute Layer, powered by these innovative architectures and backed by highly available object storage, has democratized access to scalable data processing, making it accessible and efficient for organizations of all sizes.

World2Data Verdict: The Future of Dynamic Data Processing

The ability of the Compute Layer to scale efficiently is no longer just a technical advantage; it is a fundamental prerequisite for competitive success in the data economy. As our deep dive has shown, the synergy between a flexible Compute Layer and a resilient Storage Layer forms the bedrock of modern, high-performance data platforms. World2Data.com asserts that organizations must prioritize adopting architectures that embrace separation of compute and storage, leveraging elastic auto-scaling and serverless capabilities to optimize both performance and cost. The future will see an even deeper integration of AI-driven resource management, where systems proactively anticipate demand and orchestrate compute and storage resources with minimal human intervention.

Our recommendation is clear: future-proof your data infrastructure by investing in cloud-native solutions that offer robust resource isolation, intelligent workload management, and comprehensive cost attribution. Embrace platforms that provide scalable infrastructure for ML model training and inference, with native support for distributed ML frameworks. This strategic shift will not only ensure your data workloads scale efficiently but also empower your teams to innovate faster, derive insights quicker, and maintain a decisive edge in an increasingly data-intensive landscape.

In essence, mastering the Compute Layer and its symbiotic relationship with the Storage Layer is critical for any enterprise aiming to build a truly agile, cost-effective, and powerful distributed data processing infrastructure. It’s about designing systems where processing power is a fluid resource, always available, always optimized, and always ready to extract maximum value from your data.