Scalable Data Architecture: Growing Your Business with Trusted Data

Platform Category: Data Lake, Data Warehouse, Data Streaming Platforms, Data Lakehouse

Core Technology/Architecture: Cloud-native, Serverless, Distributed Computing, Data Mesh, Microservices

Key Data Governance Feature: Data Catalog, Role-Based Access Control, Metadata Management, Data Lineage

Primary AI/ML Integration: MLOps Platforms, Feature Stores, Integration with major ML Clouds, Data preparation for ML

Main Competitors/Alternatives: AWS Data Services, Azure Data Services, Google Cloud Data Services

In today’s data-driven world, a robust Scalable Data Architecture is not merely an IT buzzword; it’s the strategic backbone that lets enterprises grow and adapt quickly. At its core, successful scaling hinges on the availability of Trusted Data: information that is accurate, consistent, and readily accessible for decision-making across all business functions. This deep dive explores how organizations can build and leverage such architectures to ensure sustained growth and competitive advantage.

Introduction: The Imperative for Scalable Data Architecture

The relentless explosion of data volume, velocity, and variety presents both immense opportunities and significant challenges for businesses worldwide. From customer interactions and sensor readings to financial transactions and operational logs, data streams inundate organizations, demanding sophisticated infrastructure capable of ingestion, processing, storage, and analysis at scale. A truly Scalable Data Architecture transcends simply adding more servers; it’s about designing a resilient, flexible, and cost-effective system that can effortlessly expand or contract to meet fluctuating demands without compromising performance or integrity. Crucially, at the heart of any effective scalable architecture lies the principle of Trusted Data – data that stakeholders can rely on for critical insights and strategic decisions. Without trust, even the most advanced data systems are rendered ineffective, leading to flawed analysis, misguided strategies, and erosion of confidence.

This article will delve into the technical and architectural components that define a modern scalable data architecture, emphasizing the critical role of Trusted Data. We will explore the latest technological enablers, discuss common challenges in adoption, and highlight the tangible business value and return on investment. Furthermore, we will draw a clear comparison between these modern paradigms and traditional data management approaches, culminating in World2Data’s expert verdict on navigating this evolving landscape. The objective is to provide a comprehensive understanding for businesses looking to future-proof their data strategies and harness their data assets for maximum impact.

Core Breakdown: Building the Backbone of Future-Proof Data Systems

Designing a Scalable Data Architecture requires foresight, envisioning not just current needs but future expansion and unforeseen data demands. It involves building robust frameworks that can effortlessly handle increasing data volumes and user demands without performance degradation, all while ensuring the underlying data remains Trusted Data. This proactive approach ensures your infrastructure supports, rather than hinders, business momentum, particularly as companies increasingly rely on AI and Machine Learning.

The Foundational Pillars of Scalable Data Architecture

Modern scalable architectures are often composite, leveraging several interconnected platforms to manage the data lifecycle effectively:

- Data Lakes: Providing flexible, schema-on-read storage for raw, structured, semi-structured, and unstructured data at massive scale, often leveraging cloud object storage. They are crucial for exploratory analytics, machine learning, and retaining historical data.

- Data Warehouses: Optimized for structured, historical data analysis, reporting, and business intelligence (BI). Modern cloud data warehouses offer elasticity and separate compute from storage, vastly improving scalability.

- Data Streaming Platforms: Technologies like Apache Kafka enable real-time ingestion, processing, and delivery of data. This is critical for applications requiring immediate insights, fraud detection, real-time personalization, and operational monitoring.

- Data Lakehouse: A hybrid approach combining the flexibility and cost-effectiveness of data lakes with the ACID transactions and schema enforcement capabilities of data warehouses. This architecture aims to provide the best of both worlds, simplifying data management and ensuring Trusted Data quality for diverse workloads, including advanced analytics and AI.
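
The difference between warehouse-style schema-on-write and lake-style schema-on-read can be illustrated with a minimal sketch. Everything here is hypothetical (the `RAW_EVENTS` sample, the `ORDER_SCHEMA` mapping, and the helper names), and plain Python lists stand in for real storage engines:

```python
import json

# Hypothetical raw events as they might land in a data lake (one JSON document per line).
RAW_EVENTS = [
    '{"order_id": 1, "amount": "19.99", "currency": "EUR"}',
    '{"order_id": 2, "amount": "5.00"}',
    '{"order_id": "3", "amount": "oops", "channel": "mobile"}',
]

# Illustrative schema: column name -> callable used to cast the value.
ORDER_SCHEMA = {"order_id": int, "amount": float}


def conform(record, schema):
    """Cast a record to the schema; raise ValueError if it does not fit."""
    try:
        return {name: cast(record[name]) for name, cast in schema.items()}
    except (KeyError, TypeError, ValueError) as exc:
        raise ValueError(f"record does not match schema: {record}") from exc


def write_with_schema(table, raw_line, schema):
    """Schema-on-write: validate before storing, so bad rows are rejected at load time."""
    table.append(conform(json.loads(raw_line), schema))


def read_with_schema(raw_lines, schema):
    """Schema-on-read: keep everything raw and apply the schema only when querying."""
    for line in raw_lines:
        try:
            yield conform(json.loads(line), schema)
        except ValueError:
            continue  # skipped for this query; the raw line stays in storage


warehouse_table = []
rejected = 0
for line in RAW_EVENTS:
    try:
        write_with_schema(warehouse_table, line, ORDER_SCHEMA)
    except ValueError:
        rejected += 1

lake_rows = list(read_with_schema(RAW_EVENTS, ORDER_SCHEMA))
```

Both paths produce the same two clean rows for this query, but only the schema-on-read path still holds the third raw event, which a later query with a different schema could use. A lakehouse, as described above, aims to offer both behaviors over the same storage.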

Technological Enablers for Scalability

The underlying technologies are paramount for achieving true scalability and maintaining Trusted Data:

- Cloud-native: Leveraging services and deployment models intrinsic to cloud platforms (AWS, Azure, GCP). This provides elasticity, high availability, and reduced operational overhead.

- Serverless: Abstracting server management, allowing developers to focus on code. Services like AWS Lambda or Azure Functions enable automatic scaling and pay-per-execution models.

- Distributed Computing: Frameworks like Apache Spark process vast datasets across clusters of machines, ensuring high throughput and resilience.

- Data Mesh: An organizational and architectural paradigm that decentralizes data ownership and empowers domain teams to treat data as a product. This enhances agility, promotes data quality, and helps establish Trusted Data within specific contexts.

- Microservices: Breaking down monolithic applications into smaller, independently deployable services. This allows for individual scaling of components and fosters agility.
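
The core idea behind distributed computing frameworks, splitting data into partitions, aggregating each partition independently, and merging the partial results, can be sketched on a single machine. This is only an illustration under stated assumptions: a thread pool stands in for cluster nodes, the `events` data is made up, and a real engine such as Apache Spark adds scheduling, shuffles, and fault tolerance that this sketch omits.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def partition(records, num_partitions):
    """Split records into roughly equal partitions (round-robin by position)."""
    return [records[i::num_partitions] for i in range(num_partitions)]


def count_by_key(records):
    """Map step: each worker aggregates its own partition independently."""
    return Counter(key for key, _ in records)


def merge(partials):
    """Reduce step: combine the per-partition counts into one total."""
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total


# Hypothetical (event_type, payload) pairs.
events = [("click", 1), ("view", 2), ("click", 3),
          ("purchase", 4), ("view", 5), ("click", 6)]

# Three workers, one partition each; threads stand in for cluster nodes.
with ThreadPoolExecutor(max_workers=3) as pool:
    partials = list(pool.map(count_by_key, partition(events, 3)))

totals = merge(partials)
```

Because each partition is processed without reference to the others, adding workers (or machines) increases throughput without changing the logic, which is the property that makes this pattern scale.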

Ensuring Trusted Data Through Robust Data Governance

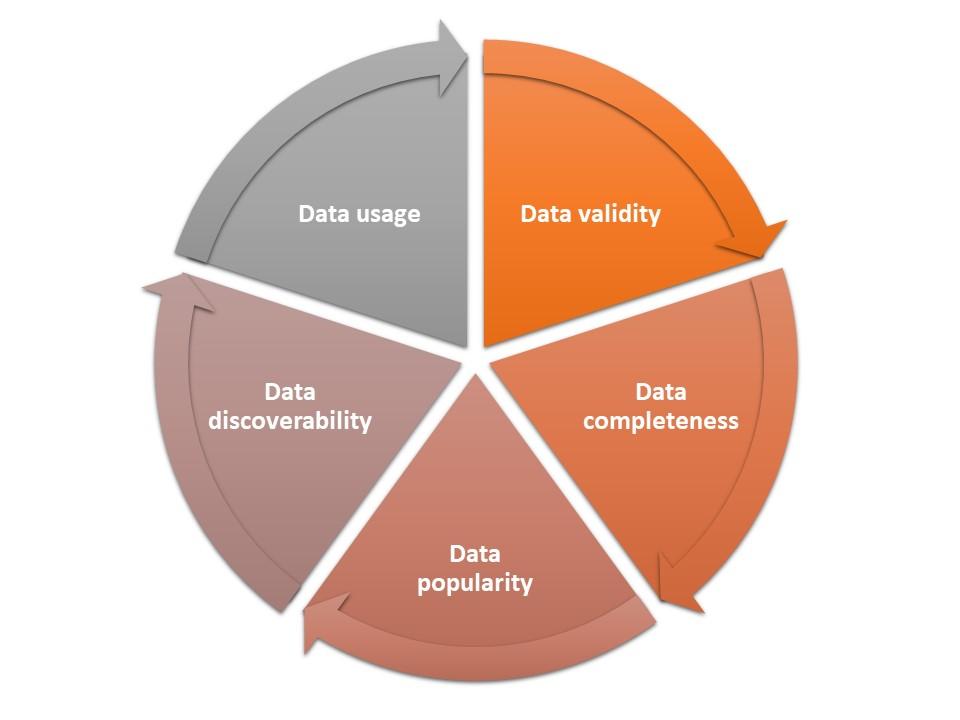

Trusted Data is paramount for reliable insights, especially in a scalable, distributed environment. Implementing strong data governance policies and rigorous validation processes is essential to maintain accuracy across all sources. Key features include:

- Data Catalog: A comprehensive inventory of all data assets, providing metadata, data definitions, and ownership information, making data discoverable and understandable.

- Role-Based Access Control (RBAC): Ensures that only authorized users and systems can access specific data, upholding security and compliance.

- Metadata Management: Capturing and managing technical, business, and operational metadata is crucial for understanding data context, quality, and usage patterns.

- Data Lineage: Tracing data’s journey from source to consumption, including all transformations. This is vital for auditing, debugging, and confirming the trustworthiness of insights.
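
To make these governance features concrete, here is a minimal in-memory sketch of a catalog that stores metadata, checks role-based access, and walks lineage. The `CatalogEntry` and `DataCatalog` classes, the dataset names, and the roles are all hypothetical; production catalogs persist this information and integrate with identity providers.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Catalog record for one dataset: metadata, access policy, and lineage."""
    name: str
    owner: str
    description: str
    allowed_roles: set = field(default_factory=set)  # RBAC: roles that may read
    upstream: list = field(default_factory=list)     # lineage: direct sources


class DataCatalog:
    def __init__(self):
        self._entries = {}

    def register(self, entry):
        self._entries[entry.name] = entry

    def can_read(self, user_roles, dataset):
        """RBAC check: access is granted if the user holds any allowed role."""
        return bool(set(user_roles) & self._entries[dataset].allowed_roles)

    def lineage(self, dataset):
        """Return every upstream dataset, nearest first (breadth-first walk)."""
        seen, queue = [], list(self._entries[dataset].upstream)
        while queue:
            current = queue.pop(0)
            if current in seen:
                continue
            seen.append(current)
            if current in self._entries:
                queue.extend(self._entries[current].upstream)
        return seen


catalog = DataCatalog()
catalog.register(CatalogEntry("raw_orders", "sales-eng", "Orders as ingested",
                              {"engineer"}))
catalog.register(CatalogEntry("clean_orders", "sales-eng", "Validated orders",
                              {"engineer", "analyst"}, ["raw_orders"]))
catalog.register(CatalogEntry("revenue_report", "finance", "Daily revenue",
                              {"analyst", "executive"}, ["clean_orders"]))
```

With these entries, an analyst can read `revenue_report` but not `raw_orders`, and `catalog.lineage("revenue_report")` traces the report back through `clean_orders` to `raw_orders`, which is the kind of answer an auditor or a debugging engineer needs.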

AI/ML Integration: Powering Intelligent Growth

A truly scalable data architecture is inherently designed to support advanced analytics and AI/ML workloads. The ability to prepare, process, and serve Trusted Data efficiently is foundational for successful machine learning initiatives.

- MLOps Platforms: Streamlining the entire machine learning lifecycle, from data preparation and model training to deployment, monitoring, and governance. A scalable architecture provides the underlying infrastructure for MLOps to function effectively.

- Feature Stores: Centralized repositories for pre-computed and re-usable features for ML models. This ensures consistency, reduces data duplication, and accelerates model development and deployment, directly contributing to the reliability and trustworthiness of AI outputs.

- Integration with Major ML Clouds: Seamless connectivity with platforms like Amazon SageMaker, Azure Machine Learning, and Google Cloud Vertex AI (the successor to AI Platform), allowing data scientists to use powerful tools where the data already lives rather than copying large datasets between systems.

- Data Preparation for ML: Providing scalable tools and services for data cleaning, transformation, and feature engineering, ensuring models are trained on high-quality, Trusted Data.
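
The consistency benefit of a feature store comes largely from serving training and inference through the same lookup. The sketch below is a simplified, in-memory illustration, not the API of any particular product; the `FeatureStore` class, the feature names, and the integer timestamps are assumptions made for the example.

```python
from bisect import bisect_right
from collections import defaultdict


class FeatureStore:
    """In-memory sketch: timestamped feature values keyed by (entity, feature)."""

    def __init__(self):
        self._timestamps = defaultdict(list)
        self._values = defaultdict(list)

    def write(self, entity_id, feature, timestamp, value):
        """Insert a value, keeping each series sorted by timestamp."""
        key = (entity_id, feature)
        pos = bisect_right(self._timestamps[key], timestamp)
        self._timestamps[key].insert(pos, timestamp)
        self._values[key].insert(pos, value)

    def read(self, entity_id, feature, as_of):
        """Return the latest value at or before `as_of`, or None if there is none."""
        key = (entity_id, feature)
        pos = bisect_right(self._timestamps.get(key, []), as_of)
        return self._values[key][pos - 1] if pos else None

    def feature_vector(self, entity_id, features, as_of):
        """One lookup path for both training and serving keeps them consistent."""
        return [self.read(entity_id, name, as_of) for name in features]


store = FeatureStore()
store.write("customer_42", "orders_30d", 100, 3)
store.write("customer_42", "orders_30d", 200, 5)
store.write("customer_42", "avg_basket", 150, 27.5)

features = ["orders_30d", "avg_basket"]
training_row = store.feature_vector("customer_42", features, as_of=120)
serving_row = store.feature_vector("customer_42", features, as_of=250)
```

The `as_of` argument is the important design choice: a training row built for time 120 sees only values known at that time, so later values such as `avg_basket` cannot leak into historical training data.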

Challenges and Barriers to Adoption

While the benefits of a Scalable Data Architecture are clear, several hurdles can impede successful adoption:

- Data Silos and Integration Complexity: Merging data from disparate legacy systems and applications into a cohesive, centralized or distributed architecture can be technically challenging and time-consuming. Ensuring consistency across these integrated sources is critical for Trusted Data.

- Ensuring Data Quality and Trusted Data at Scale: As data volumes and the number of sources grow, maintaining high data quality becomes markedly harder. Data drift, schema evolution, and inconsistent input sources can quickly erode trust in the data.

- Skill Gaps and Organizational Change: Implementing and managing modern data platforms requires specialized skills in cloud engineering, distributed systems, data governance, and MLOps. Organizations often face a shortage of such talent, and cultural resistance to new ways of working can slow progress.

- Cost Management and ROI Justification: While cloud-native solutions offer flexibility, controlling costs in a dynamically scaling environment can be complex. Proving the tangible ROI to stakeholders requires clear metrics and strategic planning.

- Governance and Compliance in a Distributed Landscape: Managing data privacy, security, and regulatory compliance (e.g., GDPR, CCPA) across a complex, distributed data landscape like a data mesh requires robust and automated governance frameworks.
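
The data quality challenge is usually addressed with automated checks that run on every batch before it is published. The following is a minimal sketch, assuming a hypothetical `orders` dataset, an illustrative `EXPECTED_SCHEMA`, and an arbitrary 95% validity threshold; real pipelines typically rely on dedicated validation tooling and richer rules.

```python
# Expected schema for a hypothetical "orders" dataset: column -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "country": str}


def detect_schema_drift(expected, batch):
    """Compare the columns seen in a batch against the expected schema."""
    observed = set()
    for row in batch:
        observed.update(row)
    return {
        "missing": sorted(set(expected) - observed),
        "unexpected": sorted(observed - set(expected)),
    }


def quality_report(expected, batch):
    """Count rows that have every expected column with the expected type."""
    valid = 0
    for row in batch:
        if all(isinstance(row.get(col), typ) for col, typ in expected.items()):
            valid += 1
    ratio = valid / len(batch) if batch else 1.0
    return {"rows": len(batch), "valid": valid, "valid_ratio": ratio}


def gate(report, drift, min_valid_ratio=0.95):
    """Publish only if quality is high enough and no required column vanished."""
    return report["valid_ratio"] >= min_valid_ratio and not drift["missing"]


batch = [
    {"order_id": 1, "amount": 19.99, "country": "DE"},
    {"order_id": 2, "amount": None, "country": "FR"},                      # null amount
    {"order_id": 3, "amount": 7.5, "country": "US", "coupon": "SPRING"},  # new column
    {"order_id": 4, "amount": 12.0, "country": "US"},
]

drift = detect_schema_drift(EXPECTED_SCHEMA, batch)
report = quality_report(EXPECTED_SCHEMA, batch)
publish = gate(report, drift)
```

In this example the new `coupon` column is reported as drift but tolerated, while the null `amount` pulls the valid ratio to 0.75 and the gate blocks publication. Whether unexpected columns should also block a batch is a policy decision each team has to make.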

Business Value and ROI of a Scalable Data Architecture

Ultimately, investing in a Scalable Data Architecture that prioritizes Trusted Data is an investment in your company’s future. It establishes a resilient ecosystem where information flows freely and reliably, empowering every department to achieve more, adapt faster, and unlock unprecedented levels of success in a dynamic marketplace. The returns are multifaceted:

- Accelerated Decision Making and Innovation: Rapid access to high-quality, Trusted Data enables faster and more informed decisions, shortening the cycle from insight to action. This fuels innovation by allowing businesses to experiment with new products and services based on real-time data.

- Enhanced Operational Efficiency and Cost Savings: Automation of data pipelines, optimized resource utilization through cloud elasticity, and reduced manual data preparation efforts lead to significant operational efficiencies and cost reductions over time.

- Superior Customer Experience and Personalization: With a unified view of customer data, organizations can deliver highly personalized experiences, tailored product recommendations, and proactive support, fostering stronger customer loyalty.

- Competitive Advantage Through Data-Driven Insights: Companies that can effectively leverage their Trusted Data to uncover market trends, predict customer behavior, and optimize internal processes gain a significant edge over competitors.

- Future-Proofing for Trusted Data Growth and Evolving Business Needs: A truly scalable architecture embraces flexibility. It allows for swift adaptation to new business models, market shifts, and emerging data types (e.g., IoT, streaming data) without extensive re-engineering, ensuring the data infrastructure remains a valuable asset, continually serving the company’s strategic objectives and maintaining data trust.

Comparative Insight: Modern Scalable Architecture vs. Traditional Approaches

To truly appreciate the value of a modern Scalable Data Architecture, it’s essential to understand how it contrasts with traditional data management paradigms. Historically, data was often managed in siloed databases, purpose-built for specific applications, or aggregated into monolithic data warehouses with rigid schemas and limited scalability.

Traditional data approaches, typically characterized by on-premise infrastructure, manual provisioning, and highly structured relational databases, often suffer from several limitations when faced with today’s data demands. They struggle with:

- Limited Scalability: Scaling up usually involves significant hardware upgrades and complex reconfiguration, leading to high capital expenditure and downtime.

- Data Silos: Data is locked in application-specific databases, making cross-functional analysis difficult and hindering the creation of a unified view of Trusted Data.

- Rigidity: Schema-on-write approaches make it challenging to incorporate new data types or adapt to evolving business requirements without extensive re-engineering.

- Cost Inefficiency: Capacity must be over-provisioned to handle peak loads, and maintenance and upgrades carry significant operational costs.

- Lack of Real-time Capabilities: Batch processing dominates, making real-time analytics or streaming insights difficult, if not impossible.

- Poor Support for Diverse Data Types: Primarily designed for structured data, struggling with semi-structured or unstructured data common in modern applications.

In stark contrast, a modern Scalable Data Architecture, particularly one that leverages cloud-native, distributed, and serverless technologies, offers:

- Elasticity and Agility: Resources can scale up or down automatically based on demand, ensuring optimal performance and cost-efficiency. Rapid deployment and iteration cycles are standard.

- Data Democratization: Concepts like Data Mesh foster domain-driven data ownership, making Trusted Data products accessible and usable across the organization.

- Flexibility and Schema Agnosticism: Data lakes and lakehouses support schema-on-read, allowing raw data to be ingested without prior transformation and a schema to be applied later as analysis requires. Lakehouses can additionally enforce schemas on curated tables.

- Cost Optimization: Pay-as-you-go models, serverless computing, and separation of compute and storage significantly reduce capital expenditure and offer better cost control.

- Real-time Processing: Integrated streaming platforms enable immediate data processing and analytics, driving real-time decision-making and operational intelligence.

- Support for All Data Types: Handles structured, semi-structured, and unstructured data natively, unlocking insights from a broader range of sources.

- Enhanced Data Governance: Modern tools for data cataloging, lineage, and access control are built into the architecture, facilitating the creation and maintenance of Trusted Data across diverse environments.
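
The cost argument behind elasticity can be made concrete with simple arithmetic. The figures below are entirely hypothetical (the demand curve, the flat price per unit-hour, and the two spare units of headroom), and real cloud pricing adds factors such as minimum charges, storage, data transfer, and scaling lag that this sketch ignores.

```python
# Hypothetical demand over one day: compute units needed in each of 24 hours.
HOURLY_DEMAND = [2] * 8 + [6] * 8 + [10] * 2 + [4] * 6   # peak = 10 units
PRICE_PER_UNIT_HOUR = 0.50                               # assumed flat price
SPARE_UNITS = 2                                          # assumed safety headroom


def fixed_capacity_cost(demand, price, spare_units):
    """Traditional model: provision for peak plus headroom, pay for it every hour."""
    capacity = max(demand) + spare_units
    return capacity * len(demand) * price


def elastic_cost(demand, price):
    """Pay-as-you-go model: pay only for the units needed in each hour."""
    return sum(demand) * price


fixed = fixed_capacity_cost(HOURLY_DEMAND, PRICE_PER_UNIT_HOUR, SPARE_UNITS)
elastic = elastic_cost(HOURLY_DEMAND, PRICE_PER_UNIT_HOUR)

# Share of the fixed capacity that is actually used over the day.
utilization = sum(HOURLY_DEMAND) / ((max(HOURLY_DEMAND) + SPARE_UNITS) * len(HOURLY_DEMAND))
```

Under these assumptions the fixed deployment costs 144.0 per day at 37.5% utilization, while the elastic one costs 54.0. The gap narrows as demand becomes flatter, so the savings depend heavily on how spiky the actual workload is.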

The shift from traditional to scalable architectures is more than a technological upgrade; it’s a fundamental change in how organizations perceive, manage, and derive value from their data. It’s a move from data as an operational byproduct to data as a strategic asset, with Trusted Data as its most precious commodity.

World2Data Verdict: Embracing the Future of Trusted Data Growth

Building a truly Scalable Data Architecture is not a one-time project but a continuous evolution. For businesses looking to grow, innovate, and maintain a competitive edge, the adoption of modern data platforms and architectural patterns is no longer optional; it is a strategic imperative. World2Data.com recommends that organizations prioritize the development of cloud-native, flexible data ecosystems that champion data democratization while rigorously upholding the principles of Trusted Data. The future demands architectures that are not only robust and elastic but also deeply integrated with AI/ML capabilities, allowing for rapid experimentation and deployment of intelligent solutions. Focusing on data governance from the outset, investing in skilled talent, and fostering a data-driven culture will be paramount. Those who commit to building a resilient, scalable foundation powered by Trusted Data will be best positioned to navigate the complexities of tomorrow’s digital landscape and unlock lasting business success.