Data Reliability: How to Build Trustworthy Data Systems for Confident Decision-Making

In today’s data-driven world, Data Reliability is not merely a technical concern but a strategic imperative. It forms the bedrock of sound decision-making, operational excellence, and sustained competitive advantage. Organizations are prioritizing initiatives to ensure their data systems reliably deliver accurate, consistent, and complete information. High Data Reliability lets businesses act on their data with confidence, turning raw data into actionable intelligence.

This deep dive by World2Data explores what it takes to build trustworthy data systems. We’ll cover the core principles, architectural components, and supporting technology, including Data Observability Platforms and Data Quality Tools, that organizations need to establish trust in their data. By understanding and implementing robust strategies for Data Reliability, organizations can unlock their data’s full potential.

The Imperative of Data Reliability in the Modern Enterprise

At its core, Data Reliability encompasses the accuracy, completeness, and consistency of datasets across an organization’s entire data landscape. In an era where data underpins everything from customer experience and supply chain management to financial reporting and AI model training, the consequences of unreliable data are severe. Poor strategic decisions, significant financial losses, damaged reputation, and compliance breaches are just a few of the potential repercussions. Every piece of data utilized for analysis, reporting, or automated processes must genuinely reflect the actual conditions it purports to represent.

The journey towards achieving and maintaining high Data Reliability is complex, requiring a holistic approach that integrates technology, process, and people. It means moving beyond reactive fixes to proactive strategies, leveraging modern data stacks to anticipate and prevent data quality issues before they impact business operations. This includes embracing solutions from categories such as Data Observability Platforms and Data Quality Tools, which provide the necessary infrastructure for vigilance and immediate remediation.

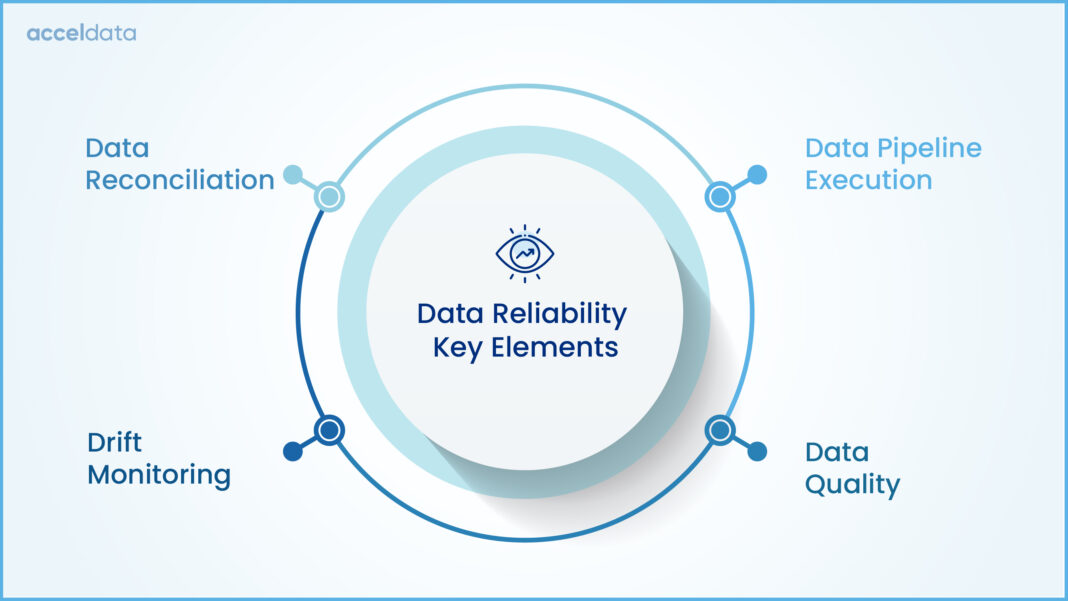

Core Breakdown: Architecting for Enduring Data Trust

Building trustworthy data systems demands a multi-pronged architectural and process-oriented approach. It begins with foundational practices and extends into continuous monitoring and sophisticated technological leverage. The goal is to establish an ecosystem where data quality is baked in, not bolted on.

Essential Pillars for Data Trustworthiness

- Rigorous Data Validation and Cleaning Processes: The first line of defense against unreliable data occurs at the point of ingestion. Implementing strong schema enforcement, type validation, range checks, and referential integrity rules prevents erroneous data from entering the system. Automated data cleaning routines, including deduplication, standardization, and imputation for missing values, are crucial for refining raw data into a usable state.

- Designing Resilient Data Pipelines: Data pipelines are the arteries of any data system. Their design must prioritize resilience, ensuring smooth, uncorrupted, and timely data flow. This involves implementing robust error handling, retry logic, idempotent writes (so that a retried step does not duplicate data), and mechanisms for handling schema evolution. Modern ETL/ELT frameworks often incorporate features that support these requirements, minimizing data loss or corruption during transit and transformation.

- Effective Metadata Management and Data Cataloging: Metadata Management provides the crucial documentation and context for all data assets. This includes technical metadata (schema, data types, lineage), business metadata (definitions, ownership, usage), and operational metadata (ingestion times, quality metrics). A comprehensive Data Cataloging solution makes this metadata discoverable and understandable, fostering better data literacy and trust across the organization. It clarifies data origins, transformations, and intended uses.
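
To make the ingestion-time checks from the first pillar more concrete, here is a minimal sketch in plain Python. The `ORDER_SCHEMA` fields, the `validate_orders` function, and the `max_amount` limit are hypothetical names chosen for illustration; a production system would more likely express these rules in a dedicated data quality tool.

```python
from dataclasses import dataclass, field
from datetime import date

# Hypothetical schema for illustration: field name -> expected Python type.
ORDER_SCHEMA = {"order_id": int, "customer_id": int, "amount": float, "order_date": date}


@dataclass
class ValidationResult:
    valid: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (record, reasons) pairs


def validate_orders(records, known_customer_ids, max_amount=100_000.0):
    """Apply schema, type, range, and referential checks, then deduplicate."""
    result = ValidationResult()
    seen_order_ids = set()
    for record in records:
        reasons = []
        # Schema enforcement: every expected field must be present.
        missing = [name for name in ORDER_SCHEMA if name not in record]
        if missing:
            reasons.append(f"missing fields: {missing}")
        # Type validation: check only the fields that are present.
        for name, expected in ORDER_SCHEMA.items():
            if name in record and not isinstance(record[name], expected):
                reasons.append(f"{name} is not {expected.__name__}")
        # Range check: amounts must be positive and below the assumed limit.
        amount = record.get("amount")
        if isinstance(amount, float) and not (0 < amount <= max_amount):
            reasons.append("amount out of range")
        # Referential integrity: the customer must already exist.
        if "customer_id" in record and record["customer_id"] not in known_customer_ids:
            reasons.append("unknown customer_id")
        # Deduplication: keep only the first clean record per order_id.
        if not reasons and record["order_id"] in seen_order_ids:
            reasons.append("duplicate order_id")
        if reasons:
            result.rejected.append((record, reasons))
        else:
            seen_order_ids.add(record["order_id"])
            result.valid.append(record)
    return result
```

Rejected records are returned together with their reasons rather than silently dropped, so they can be routed to a quarantine table and reviewed.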

Proactive Strategies for Maintaining Quality

- Automated Data Monitoring and Proactive Alerting: Maintaining Data Reliability demands continuous vigilance. Implementing Automated Data Monitoring systems allows for real-time detection of anomalies, deviations from expected patterns, and quality issues. These systems often leverage baselining and statistical methods to identify data drift, schema changes, or sudden drops in data volume. Proactive Alerting ensures that data teams are immediately notified of potential issues, enabling rapid investigation and remediation before downstream impacts become significant.
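
As a rough illustration of baselining, the sketch below flags a sudden change in daily row counts using a z-score against recent history. The function name `volume_alert` and the default threshold of 3 standard deviations are assumptions made for this example, not settings taken from any particular platform.

```python
from statistics import mean, stdev


def volume_alert(history, todays_count, z_threshold=3.0):
    """Return an alert string if today's row count deviates from the baseline, else None."""
    if len(history) < 2:
        return None  # Not enough history to estimate a baseline.
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly flat history: any change at all counts as a deviation.
        deviates = todays_count != baseline
    else:
        z_score = (todays_count - baseline) / spread
        deviates = abs(z_score) >= z_threshold
    if not deviates:
        return None
    direction = "drop" if todays_count < baseline else "spike"
    return f"row-count {direction}: observed {todays_count}, baseline about {baseline:.0f}"
```

A scheduler could call `volume_alert` after each load and forward any non-`None` result to the team's alerting channel. Real monitoring systems typically also account for seasonality, such as weekday versus weekend volumes, which this sketch ignores.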

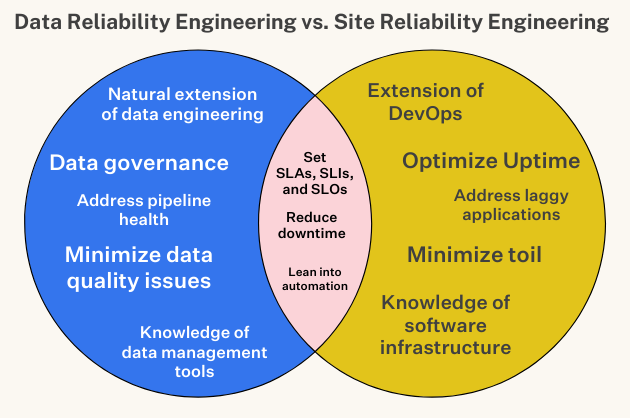

- Strong Data Governance Frameworks: Effective data governance defines the roles, responsibilities, standards, and policies for managing data throughout its lifecycle. This includes establishing Data Quality Rule Enforcement, defining data ownership, implementing Access Controls, and setting clear data retention policies. A well-defined governance framework ensures accountability and promotes a culture of data quality.

- Regular Audits and Data Quality Metrics: Continuous improvement in Data Reliability is achieved through regular audits of data quality metrics. Key Performance Indicators (KPIs) like completeness, accuracy rate, consistency score, and timeliness should be tracked via dashboards. These metrics provide objective insights into data health, highlight areas for improvement, and demonstrate the ROI of data quality initiatives.
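
Two of the KPIs mentioned above, completeness and timeliness, reduce to simple ratios. The sketch below shows one possible way to compute them; the function names and the definition of "populated" (neither `None` nor an empty string) are assumptions for illustration. Accuracy rate is left out because it requires a trusted reference dataset to compare against.

```python
from datetime import datetime, timedelta


def completeness(rows, required_fields):
    """Share of required cells that are populated (not None and not an empty string)."""
    total_cells = len(rows) * len(required_fields)
    if total_cells == 0:
        return 1.0  # Nothing to check, so nothing is missing.
    filled = sum(
        1
        for row in rows
        for name in required_fields
        if row.get(name) not in (None, "")
    )
    return filled / total_cells


def timeliness(rows, timestamp_field, now, max_age):
    """Share of rows whose timestamp is no older than max_age relative to now."""
    if not rows:
        return 1.0
    fresh = sum(
        1
        for row in rows
        if row.get(timestamp_field) is not None
        and now - row[timestamp_field] <= max_age
    )
    return fresh / len(rows)
```

Tracking these ratios per table and per load gives a dashboard a trend line rather than a single snapshot, which makes gradual degradation easier to spot.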

Leveraging Technology for Enhanced Reliability

Modern technology offers powerful tools to augment human effort in ensuring data trust:

- Advanced Data Quality Tools: Beyond basic validation, these tools offer Automated Data Profiling, rule-based validation, and machine learning-driven anomaly detection to identify subtle data quality issues that might escape manual checks. They streamline the process of defining, monitoring, and enforcing data quality rules at scale.

- Data Lineage and Traceability Solutions: Data Lineage Tracking provides end-to-end transparency, clarifying the origin, transformations, and destinations of data. This is invaluable for root cause analysis when issues arise, for impact analysis of proposed changes, and for demonstrating compliance. Understanding the full journey of data builds profound trust.

- Scalable Infrastructure and Data Observability Platforms: Cloud-native, distributed infrastructure supports growing data volumes while maintaining consistent quality and performance. More importantly, comprehensive Data Observability Platforms integrate monitoring, lineage, and quality checks into a unified view. These platforms provide Predictive Pipeline Health insights, often leveraging AI to anticipate failures and proactively manage data integrity across complex data stacks. Vendors in this space include Monte Carlo, Datafold, Acceldata, and Soda.
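
The root cause and impact analysis that lineage enables can be pictured as graph traversal. The sketch below is a simplified illustration: the `LINEAGE` mapping and its dataset names are invented for the example, and real lineage tools usually derive such a graph from query logs or pipeline metadata rather than from a hand-written dictionary.

```python
from collections import deque

# Hypothetical lineage edges: each key feeds the datasets listed in its value.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "raw.customers": ["staging.customers"],
    "staging.orders": ["marts.revenue"],
    "staging.customers": ["marts.revenue", "marts.churn"],
    "marts.revenue": ["dashboard.finance"],
}


def downstream(graph, start):
    """Impact analysis: every dataset reachable from start (breadth-first)."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def upstream(graph, target):
    """Root-cause candidates: every dataset that directly or indirectly feeds target."""
    reversed_graph = {}
    for parent, children in graph.items():
        for child in children:
            reversed_graph.setdefault(child, []).append(parent)
    return downstream(reversed_graph, target)
```

Calling `downstream(LINEAGE, "raw.customers")` answers "what breaks if this source changes?", while `upstream(LINEAGE, "dashboard.finance")` lists the datasets worth inspecting when that dashboard shows a suspicious number.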

Challenges and Barriers to Adoption in Building Reliable Data Systems

Despite the clear benefits, organizations often face significant hurdles in establishing and maintaining high Data Reliability:

- Data Volume, Velocity, and Variety (The 3 Vs): The sheer scale and complexity of modern data make manual quality checks impractical. Data arrives at high velocity, and the diversity of formats and sources makes it difficult to ensure consistency and quality across the board.

- Data Drift and Schema Evolution: Data schemas and underlying data distributions are rarely static. As source systems evolve, so does the data, leading to subtle or drastic changes (data drift) that can break pipelines and invalidate models. Detecting and adapting to these changes automatically is a non-trivial problem.

- Siloed Data Teams and Ownership: Often, data ownership and responsibility are fragmented across different departments. This lack of centralized accountability can lead to inconsistent data quality standards and a ‘not my problem’ attitude when issues arise.

- Legacy Systems and Technical Debt: Older systems frequently lack the APIs, metadata, and inherent quality mechanisms needed to integrate seamlessly into a modern, reliable data architecture. Overcoming technical debt requires significant investment and strategic planning.

- Lack of Data Literacy and Culture: Without a strong organizational culture that values data quality, even the best tools and processes can fall short. Data users may not understand the implications of poor data or how to contribute to its reliability.
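
For the schema evolution challenge listed above, a first step toward automatic detection is simply comparing the schema a pipeline expects with the one it observes. The following is a minimal sketch; `diff_schema` and `is_breaking` are hypothetical helpers, and the policy of treating new columns as non-breaking is an assumption that will not suit every consumer.

```python
def diff_schema(expected, observed):
    """Compare two {column: type_name} mappings and report what changed."""
    added = sorted(set(observed) - set(expected))
    removed = sorted(set(expected) - set(observed))
    retyped = sorted(
        (column, expected[column], observed[column])
        for column in set(expected) & set(observed)
        if expected[column] != observed[column]
    )
    return {"added": added, "removed": removed, "retyped": retyped}


def is_breaking(diff):
    """Assumed policy: removed or retyped columns break consumers; new columns do not."""
    return bool(diff["removed"] or diff["retyped"])
```

A pipeline could run this comparison before each load, halt on a breaking diff, and log additive changes for later review. Detecting drift in the data's distribution, as opposed to its schema, needs statistical checks that this sketch does not attempt.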

Business Value and ROI of High Data Reliability

Investing in Data Reliability yields substantial returns across the enterprise:

- Improved Decision-Making: Leaders can trust their data insights, leading to more informed, strategic, and successful business decisions. This reduces risk and increases agility.

- Enhanced Operational Efficiency: Reliable data minimizes the time spent on data debugging, reconciliation, and firefighting. Automated processes run smoothly, freeing up data engineers and analysts to focus on innovation rather than remediation.

- Regulatory Compliance and Risk Mitigation: Regulations such as GDPR, CCPA, and HIPAA, along with financial reporting rules, impose accuracy and accountability obligations that are far easier to meet when data quality and lineage can be demonstrated. High Data Reliability simplifies compliance audits and reduces the risk of penalties and reputational damage.

- Superior Customer Experiences: Accurate and consistent customer data enables highly personalized services, targeted marketing, and proactive support, fostering stronger customer loyalty and satisfaction.

- Optimized AI/ML Model Performance: AI and Machine Learning models are notoriously sensitive to data quality. Reliable, clean data leads to more accurate, robust, and fair models, improving predictions and automation capabilities. AI-driven Anomaly Detection for Data Quality also plays a pivotal role in maintaining the integrity of data used for training and inference.

Comparative Insight: Data Reliability in the Modern Stack vs. Traditional Approaches

The concept of data quality isn’t new, but the approach to achieving Data Reliability has dramatically evolved. Traditionally, data quality efforts were often reactive, manual, and siloed:

- Traditional Data Lakes/Warehouses: Often focused on merely *storing* large volumes of data (data lakes) or structuring it for reporting (data warehouses), with data quality checks applied at later stages or, often, not at all. This frequently led to “data swamps”: repositories of untrustworthy, uncataloged, and unusable data. Quality issues were typically discovered by end-users, causing delays and eroding trust. Data Lineage Tracking was rudimentary, making root cause analysis arduous.

- Manual Processes: Data validation and cleaning were heavily reliant on manual SQL scripts, spreadsheet checks, and human oversight. This was inherently slow, error-prone, and unsustainable given the growth of data.

- Limited Monitoring: Basic monitoring focused on infrastructure uptime rather than data integrity, meaning data issues could persist undetected for extended periods.

The modern data stack, powered by Data Observability Platforms and advanced Data Quality Tools, represents a paradigm shift:

- Proactive and Automated: Modern approaches emphasize embedding quality checks and Automated Data Monitoring throughout the data pipeline, from ingestion to consumption. Tools leverage AI-driven Anomaly Detection for Data Quality and Automated Data Profiling to identify issues proactively, often before they even impact downstream systems.

- Unified Observability: Platforms like those offered by Monte Carlo, Datafold, Acceldata, and Soda integrate metadata, lineage, quality, and performance monitoring into a single pane of glass. This provides comprehensive visibility and Predictive Pipeline Health, allowing data teams to address issues with speed and precision.

- Collaborative and Governed: Modern solutions facilitate better collaboration among data engineers, analysts, and business users through shared data catalogs and clear Data Quality Rule Enforcement. Data Cataloging is no longer an afterthought but a central component for discoverability and trust.

- Scalable and Adaptable: Designed for cloud-native, big data environments, these solutions are built to scale with data growth and to adapt to schema changes and evolving business requirements.

World2Data Verdict: The Future is Proactive, Integrated Data Trust

The pursuit of Data Reliability has evolved from a reactive chore to a proactive, strategic imperative, driven by sophisticated Data Observability Platforms and intelligent Data Quality Tools. World2Data’s recommendation is clear: organizations must move beyond piecemeal solutions and embrace an integrated strategy that converges data governance, data quality, and data observability. Focus on building an architecture that inherently promotes trust, with Automated Data Monitoring, comprehensive Data Lineage Tracking, and Metadata Management as core tenets. Prioritize solutions that offer AI-driven Anomaly Detection for Data Quality and Predictive Pipeline Health to anticipate and mitigate issues before they impact the business. Robust Data Quality Rule Enforcement and a pervasive culture of data accountability are equally critical. The future of data success hinges on a sustained commitment to Data Reliability, so that every dataset becomes a source the business can trust.