Event-Driven Architecture for Enterprises: Unlocking Real-time Responsiveness and Agility

Event-Driven Architecture (EDA) is fundamentally transforming how modern businesses operate, shifting from rigid, monolithic systems to dynamic, responsive ecosystems. By embracing a design centered around asynchronous events, enterprises can achieve unparalleled agility and resilience in today’s fast-paced digital landscape. This architectural paradigm has become a cornerstone for innovation, enabling real-time data integration, scalable microservices, and enhanced operational efficiency across diverse business domains.

Introduction: Responding to the Pulse of Modern Business

In an increasingly interconnected and data-rich world, enterprises are under immense pressure to react instantly to market shifts, customer behaviors, and internal system changes. Traditional architectures, often characterized by tightly coupled components and synchronous request-response patterns, frequently struggle to meet these demands for speed, scalability, and resilience. This is where Event-Driven Architecture emerges as a pivotal solution. As the foundation of real-time data integration and streaming platforms, EDA redefines how applications communicate and collaborate.

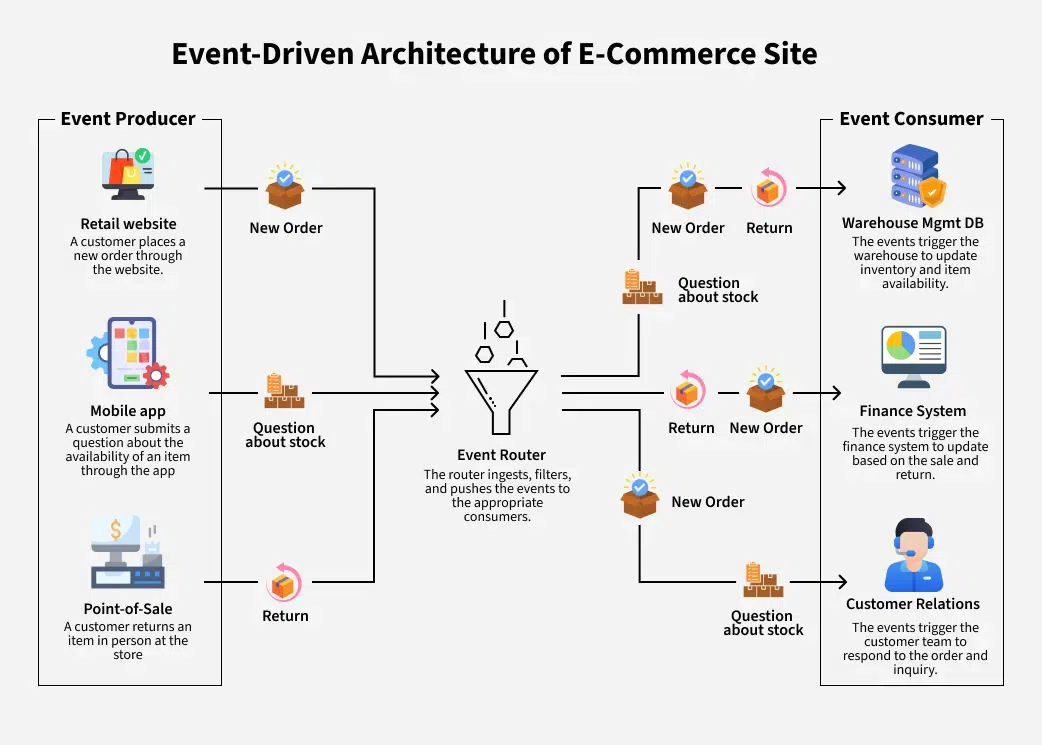

At its core, Event-Driven Architecture is a design pattern where microservices and other components communicate by producing, detecting, consuming, and reacting to events. These events represent significant occurrences within a system, such as a customer placing an order, a sensor detecting a temperature change, or a stock price update. By decoupling producers from consumers through mechanisms like Pub/Sub messaging and robust Message Brokers, EDA facilitates a highly distributed, responsive, and scalable environment. This article delves deep into the technical intricacies, business advantages, and implementation considerations of Event-Driven Architecture, providing a comprehensive understanding for enterprises looking to harness its transformative power.

Core Breakdown: Dissecting the Event-Driven Architecture Paradigm

Understanding Event-Driven Architecture requires a thorough examination of its constituent parts and underlying principles. Unlike traditional monolithic applications or synchronous request-response patterns, EDA thrives on asynchronous communication and loose coupling, making systems inherently more resilient and scalable.

The Anatomy of Event-Driven Systems

- Events: The fundamental building blocks of EDA. An event is a record of something that happened, an immutable fact. It typically contains data about the event itself, such as a timestamp, event type, and relevant payload data. For instance, a “ProductAddedToCart” event might contain the customer ID, product ID, and quantity.

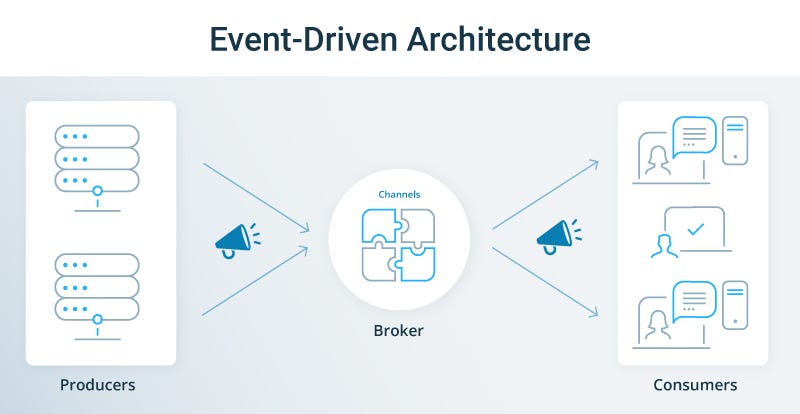

- Event Producers: These are the components or services that generate and publish events when a significant state change occurs within their domain. Producers do not know or care which consumers will receive their events, promoting significant decoupling.

- Event Consumers: These are services or applications that subscribe to specific event types and react to them. A single event can be consumed by multiple, independent consumers, each performing a different action. For example, a “ProductOrdered” event could trigger one consumer to update inventory, another to send a confirmation email, and a third to log analytics data.

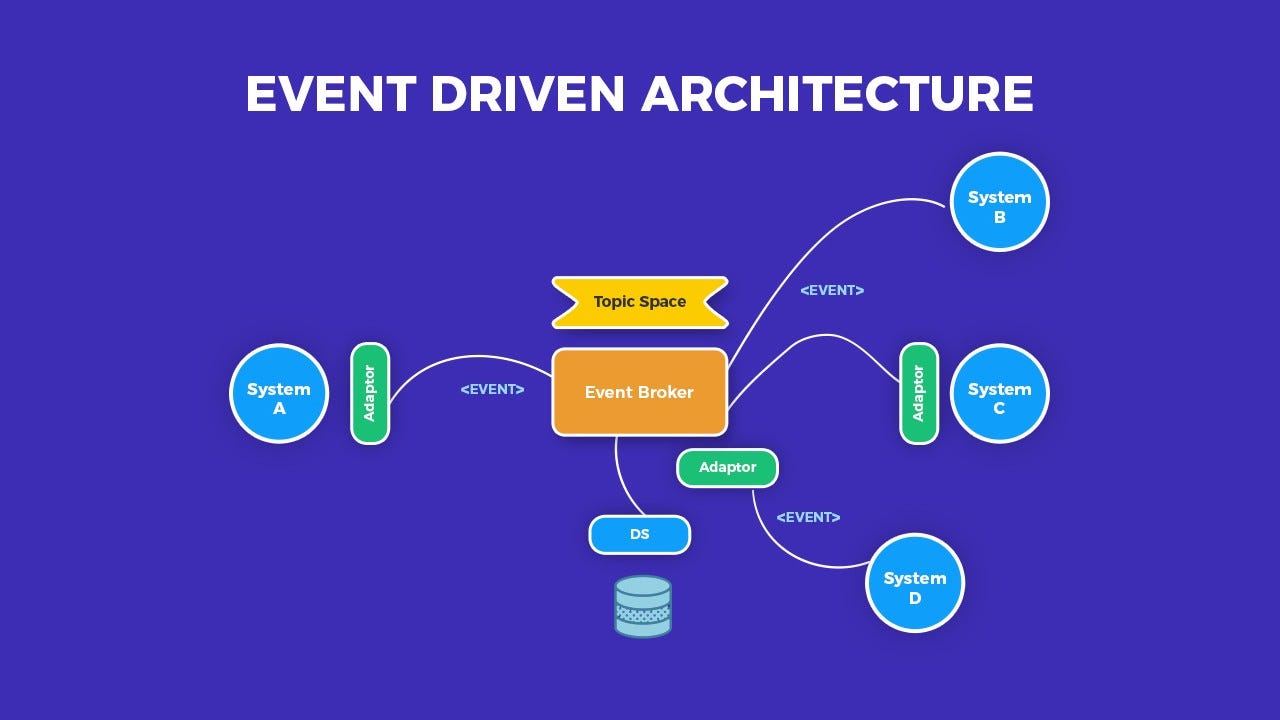

- Event Brokers (Message Brokers/Streaming Platforms): At the heart of EDA lies the event broker, which acts as an intermediary responsible for receiving events from producers and delivering them to interested consumers. Technologies like Apache Kafka, RabbitMQ, and Apache Pulsar serve this purpose, providing features such as durable storage, configurable delivery guarantees, and high throughput. These brokers buffer events so that they are transmitted reliably and consumers can process them at their own pace.

- Event Streams: Events are often organized into ordered, immutable sequences called event streams. These streams provide a historical record of all significant occurrences within a system, enabling powerful capabilities like event sourcing and stream processing for real-time analytics.
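
The components listed above can be sketched in a few lines of Python. This is a minimal in-memory illustration, not how Kafka, RabbitMQ, or Pulsar work internally: the names `Event`, `InMemoryBroker`, `update_inventory`, and `send_confirmation` are hypothetical, and handlers are called synchronously here, whereas a real broker delivers events asynchronously and persists the stream.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Event:
    """An immutable record of something that happened."""
    event_type: str
    payload: dict
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class InMemoryBroker:
    """Toy stand-in for a broker: keeps an ordered stream and fans out to subscribers."""

    def __init__(self) -> None:
        self.stream: list[Event] = []  # ordered, append-only event stream
        self._subscribers: dict[str, list[Callable[[Event], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[Event], None]) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event: Event) -> None:
        # The producer only talks to the broker; it never sees the consumers.
        self.stream.append(event)
        for handler in self._subscribers[event.event_type]:
            handler(event)


# Two independent consumers react to the same event type.
inventory = {"sku-1": 10}
confirmations: list[str] = []


def update_inventory(event: Event) -> None:
    inventory[event.payload["product_id"]] -= event.payload["quantity"]


def send_confirmation(event: Event) -> None:
    confirmations.append(event.payload["customer_id"])


broker = InMemoryBroker()
broker.subscribe("ProductOrdered", update_inventory)
broker.subscribe("ProductOrdered", send_confirmation)

# The producer publishes one fact; the broker handles fan-out.
broker.publish(Event("ProductOrdered",
                     {"customer_id": "c-42", "product_id": "sku-1", "quantity": 2}))
```

Adding a third consumer, for example one that logs analytics data, would only require another `subscribe` call; the producer code would not change.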

The alignment of EDA with microservices is particularly potent. Each microservice can act as both an event producer and consumer, owning specific business capabilities and communicating primarily through events. This fosters independent development, deployment, and scaling, dramatically reducing inter-service dependencies.

Ensuring Data Quality and Consistency with Schema Registry

One of the critical challenges in distributed systems is maintaining data contract compatibility, especially when multiple services produce and consume the same event types. This is where a Schema Registry plays an indispensable role in event contract enforcement. A Schema Registry acts as a centralized repository for event schemas, defining the structure and data types of events. By checking events and schema changes against these definitions, it helps ensure that producers publish events in a compatible format and that consumers can reliably parse them. This guards against breaking changes, supports controlled schema evolution, and significantly enhances data quality and interoperability across the enterprise.
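
To make the idea of contract enforcement concrete, here is a minimal sketch of a registry that stores schema versions and validates payloads. The `SchemaRegistry` class and its compatibility rule (a new version may add fields but may not remove or retype existing ones) are simplifying assumptions for illustration; production registries define their own schema formats and compatibility modes.

```python
class SchemaRegistry:
    """Toy registry: a schema is a mapping of field name to Python type."""

    def __init__(self) -> None:
        self._schemas: dict[str, list[dict[str, type]]] = {}

    def register(self, event_type: str, schema: dict[str, type]) -> int:
        """Store a new schema version and return its version number."""
        versions = self._schemas.setdefault(event_type, [])
        if versions:
            previous = versions[-1]
            removed = set(previous) - set(schema)
            retyped = {name for name in previous
                       if name in schema and schema[name] is not previous[name]}
            if removed or retyped:
                # Assumed rule: existing fields must survive unchanged.
                raise ValueError(
                    f"incompatible change: removed={sorted(removed)}, "
                    f"retyped={sorted(retyped)}")
        versions.append(dict(schema))
        return len(versions)

    def validate(self, event_type: str, payload: dict) -> None:
        """Raise if the payload does not match the latest registered schema."""
        schema = self._schemas[event_type][-1]
        for name, expected in schema.items():
            if name not in payload:
                raise ValueError(f"missing field: {name}")
            if not isinstance(payload[name], expected):
                raise TypeError(f"field {name} should be {expected.__name__}")


registry = SchemaRegistry()
version = registry.register(
    "ProductAddedToCart",
    {"customer_id": str, "product_id": str, "quantity": int},
)

# A well-formed event passes silently.
registry.validate("ProductAddedToCart",
                  {"customer_id": "c-42", "product_id": "sku-1", "quantity": 2})
```

In this sketch a producer would call `validate` before publishing, so a malformed event is rejected at the source rather than discovered later by a consumer.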

Challenges and Barriers to Adoption in Event-Driven Architectures

While the benefits of Event-Driven Architecture are compelling, its implementation is not without hurdles. Enterprises must carefully consider and plan for these challenges:

- Increased Operational Complexity: Managing a multitude of decoupled microservices and event streams introduces significant operational overhead. Debugging distributed systems, tracing events across multiple services, and ensuring end-to-end data flow can be complex. This parallels the challenges seen in managing sophisticated MLOps pipelines, requiring robust monitoring, logging, and tracing tools.

- Eventual Consistency: Unlike traditional ACID transactions, EDA often relies on eventual consistency. This means that after an event is published, it takes some time for all affected consumers to process it and update their state. While often acceptable and even desirable for scalability, it requires a different mindset and careful design to handle scenarios where immediate consistency is perceived as critical by end-users.

- Data Quality and Data Drift: Even with a Schema Registry, ensuring consistent data quality across an evolving event landscape is crucial. Without vigilant governance, “data drift” can occur, where the meaning or format of data subtly changes over time, leading to misinterpretations or errors in consuming services.

- Observability and Monitoring: Gaining a holistic view of system health and performance becomes challenging in a highly distributed event-driven system. Comprehensive observability solutions, including distributed tracing, centralized logging, and metrics aggregation, are essential to effectively monitor event flows and service interactions.

- Cultural Shift: Adopting EDA requires a significant cultural shift from synchronous, imperative thinking to asynchronous, reactive thinking within development and operations teams. This can be a substantial barrier, requiring training and new organizational practices.
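
Eventual consistency, as described in the list above, is easier to reason about with a small example. The sketch below is a simplified, single-process illustration using a `deque` as a stand-in for the broker; `OrderService`, `RevenueProjection`, and `drain` are hypothetical names. It shows the window during which the write side has accepted orders but the read side has not yet caught up.

```python
from collections import deque


class OrderService:
    """Write side: records the order and emits an event, without waiting for consumers."""

    def __init__(self, queue: deque) -> None:
        self.orders: dict[str, int] = {}
        self.queue = queue

    def place_order(self, order_id: str, amount: int) -> None:
        self.orders[order_id] = amount
        self.queue.append({"type": "OrderPlaced", "order_id": order_id, "amount": amount})


class RevenueProjection:
    """Read side: a derived view that is only updated when events are consumed."""

    def __init__(self) -> None:
        self.total = 0

    def handle(self, event: dict) -> None:
        if event["type"] == "OrderPlaced":
            self.total += event["amount"]


def drain(queue: deque, projection: RevenueProjection) -> None:
    """Consume all pending events in arrival order."""
    while queue:
        projection.handle(queue.popleft())


queue: deque = deque()
orders = OrderService(queue)
revenue = RevenueProjection()

orders.place_order("o-1", 20)
orders.place_order("o-2", 15)

# Before the consumer runs, the projection is stale and the backlog is visible.
stale_total = revenue.total
backlog = len(queue)

drain(queue, revenue)

# After the consumer catches up, both sides agree.
consistent_total = revenue.total
```

The `backlog` value is the kind of signal an observability setup would track: a growing queue means consumers are falling behind and reads are becoming staler.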

Business Value and ROI of Event-Driven Architecture

Despite the challenges, the return on investment (ROI) from implementing Event-Driven Architecture is substantial, translating directly into tangible business advantages:

- Enhanced Agility and Responsiveness: EDA enables businesses to react in real-time to events, whether it’s personalizing customer experiences, detecting fraud, or optimizing supply chains. This responsiveness directly impacts customer satisfaction and operational efficiency, allowing for faster time-to-market for new features.

- Superior Scalability and Resilience: By decoupling services, components can scale independently based on demand. If one service fails, others can continue to operate, significantly increasing system resilience and fault tolerance. This makes EDA ideal for handling unpredictable loads and ensuring high availability.

- Improved Data Flow and Analytics: Event streams provide a continuous, real-time feed of business activity, creating a rich source for analytics and insights. This enables Real-time Model Inference and Online ML, where machine learning models can consume live event data to make predictions or adapt in real-time, providing immediate business value.

- Decoupling and Independent Development: Teams can develop, deploy, and update services independently without affecting other parts of the system. This accelerates development cycles, reduces coordination overhead, and allows for greater technological diversity within the organization.

- Foundation for Future Innovation: EDA provides a robust foundation for integrating emerging technologies like Artificial Intelligence, Machine Learning, and the Internet of Things (IoT), which inherently rely on real-time data streams for optimal performance.

Comparative Insight: Event-Driven Architecture vs. Traditional Models

To fully appreciate the transformative power of Event-Driven Architecture, it’s crucial to compare it with the traditional architectural paradigms that have long dominated enterprise IT landscapes.

The Limitations of Traditional Request-Response Architectures

Most conventional enterprise applications are built on a synchronous Request-Response Architecture. In this model, a client sends a request to a server, waits for the server to process it, and then receives a response. While straightforward for simple interactions, this model presents several limitations in complex, distributed environments:

- Tight Coupling: Services are often tightly coupled, meaning changes in one service can necessitate changes in others, leading to complex dependencies and hindering independent development and deployment.

- Cascading Failures: A failure in a downstream service can block the upstream service, potentially leading to a ripple effect across the entire system.

- Scalability Bottlenecks: Scaling often involves replicating entire application instances, which can be inefficient. High loads can quickly overwhelm a single service, creating performance bottlenecks.

- Synchronous Blocking: The client must wait for a response, introducing latency and reducing overall system throughput, especially in microservices architectures where multiple calls might be chained.
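
The effect of chaining synchronous calls can be estimated with simple arithmetic. The sketch below uses made-up latency and availability figures and assumes that calls run one after another and that failures are independent, which real systems only approximate. Under those assumptions, latencies add up and availabilities multiply.

```python
import math


def chain_latency_ms(latencies_ms: list[float]) -> float:
    """Sequential blocking calls: the caller waits for the sum of all hops."""
    return sum(latencies_ms)


def chain_availability(availabilities: list[float]) -> float:
    """The request succeeds only if every hop succeeds (independence assumed)."""
    return math.prod(availabilities)


# Illustrative figures for three chained services, not measurements.
latencies = [40, 25, 60]
availabilities = [0.99, 0.99, 0.99]

total_latency = chain_latency_ms(latencies)       # 125 ms end to end
end_to_end = chain_availability(availabilities)   # roughly 0.97, lower than any single hop
```

Each additional synchronous hop makes the request slower and more likely to fail, which is the mechanism behind the cascading failures and blocking described above.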

The Constraints of Batch Processing

Another prevalent model is Batch Processing, where data is collected and processed in large chunks at scheduled intervals. While effective for analytical tasks that don’t require immediate results (like monthly financial reports or overnight data warehousing), it falls short in scenarios demanding real-time responsiveness:

- High Latency: Insights and actions are delayed until the batch job completes, making it unsuitable for real-time decision-making or proactive customer engagement.

- Historical Focus: Batch processing primarily deals with historical data, making it difficult to react to current events as they happen.

- Resource Spikes: Batch jobs often require significant computational resources for short periods, which can lead to inefficient resource utilization or contention.
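
The latency point can be illustrated with a back-of-the-envelope calculation. This sketch assumes a batch job that starts exactly on a fixed interval and ignores the job's own run time; `batch_wait_s` is a hypothetical helper, and the numbers are illustrative.

```python
import math


def batch_wait_s(arrival_s: int, interval_s: int) -> int:
    """Seconds an event waits until the next scheduled batch run starts."""
    next_run = math.ceil(arrival_s / interval_s) * interval_s
    return next_run - arrival_s


HOURLY = 3600

# An event arriving 100 seconds after a run waits almost the full hour.
early_wait = batch_wait_s(100, HOURLY)

# An event arriving just before the next run waits only one second.
late_wait = batch_wait_s(7199, HOURLY)
```

With an hourly schedule, the wait ranges from zero to a full hour depending on arrival time, before any processing begins.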

The Distinct Advantages of Event-Driven Architecture

Event-Driven Architecture directly addresses the shortcomings of these traditional models through the following characteristics:

- Asynchronous and Non-Blocking: Services communicate asynchronously, meaning producers don’t wait for consumers. This significantly improves system throughput, responsiveness, and user experience.

- Loose Coupling: Producers and consumers have no direct knowledge of each other. They share only the event contract and communicate through an intermediary broker. This reduces dependencies, increases resilience, and allows for independent scaling and technology choices.

- High Scalability: Individual services can be scaled independently, and the event broker itself is designed to handle high volumes of events. This makes EDA highly adaptable to varying workloads.

- Real-time Processing: Events are processed as they occur, enabling immediate reactions and insights crucial for modern business operations, fraud detection, real-time recommendations, and dynamic pricing.

- Auditability and Replayability: Event streams provide an immutable log of all system changes, offering inherent audit trails and the ability to “replay” events for debugging, testing, or rebuilding system states.

While EDA offers significant advantages, it’s important to note that it’s not a silver bullet. Many enterprises adopt hybrid architectures, leveraging the strengths of each paradigm where appropriate. For instance, critical synchronous interactions might still use request-response, while analytical tasks might benefit from batch processing, all potentially coordinated or enriched by an underlying event stream.

World2Data Verdict: Charting the Future with Event-Driven Agility

The imperative for enterprises to be agile, responsive, and scalable has never been stronger. World2Data’s analysis unequivocally shows that Event-Driven Architecture is not merely an architectural trend but a strategic necessity for businesses aiming to thrive in the digital economy. Its ability to decouple services, facilitate real-time data flow, and enhance system resilience directly addresses the core challenges of modern distributed systems and the ever-increasing demand for instant insight and action.

Our recommendation for enterprises is to embark on an incremental adoption of Event-Driven Architecture, starting with critical, high-value domains where real-time responsiveness and scalability are paramount. Prioritize foundational elements such as establishing robust messaging platforms, implementing a Schema Registry for stringent event contract enforcement, and investing in comprehensive observability tools. This structured approach mitigates initial complexity and allows teams to gradually build expertise and confidence. Furthermore, fostering a culture that embraces asynchronous thinking and distributed system design is crucial for long-term success.

Looking ahead, the synergy between Event-Driven Architecture and emerging technologies like Artificial Intelligence, Machine Learning, and the Internet of Things will only deepen. As enterprises increasingly rely on real-time data streams for Real-time Model Inference, Online ML, and autonomous decision-making, EDA will serve as the backbone for those workloads. It empowers systems not only to react to events but also to anticipate and shape future outcomes. Event-Driven Architecture will remain a pivotal enabler, keeping businesses adaptable and well positioned for sustained innovation and competitive advantage.