Real-Time ETL Case Study: Cutting Data Latency from Hours to Seconds

This Real-Time ETL Case Study describes how a client moved from scheduled batch processing to continuous streaming, cutting data latency from hours to seconds and turning delayed reports into insights that can be acted on as events occur. The solution is a Stream Processing Platform that uses Change Data Capture (CDC) and an Event-Driven Architecture to redefine how data flows.

The Imperative for Real-Time Data Processing

In today’s hyper-connected, data-driven world, the speed at which an organization can ingest, process, and analyze information strongly influences its success. For our client, batch Extract, Transform, Load (ETL) processes that ran on schedules of hours or even days had become a serious bottleneck. The resulting latency opened a gap between business events and actionable intelligence, leading to delayed customer responses, missed revenue opportunities, and reduced operational efficiency. Real-time data was not merely an enhancement; it was necessary to stay competitive and operationally agile.

The core business problem was stale data. Decisions that require immediate action, such as fraud detection, personalized customer experiences, and dynamic inventory management, were being made on outdated information, which cost the client both efficiency and revenue. Demand for fresh data, from real-time dashboards to machine learning models that make immediate predictions, made the transformation urgent. This Real-Time ETL Case Study describes how that challenge was addressed.

Core Breakdown: Dissecting the Architecture of Speed

Our Real-Time ETL solution is built around a Stream Processing Platform. The design departs sharply from conventional batch processing and adopts an Event-Driven Architecture that reacts to data as it is generated. This shift allows data to flow continuously, minimizing the time between its creation and its availability for consumption.

Leveraging Change Data Capture (CDC)

At the heart of our low-latency extraction strategy was Change Data Capture (CDC). Instead of periodically dumping entire datasets or large deltas, CDC captures row-level changes (inserts, updates, deletes) from source databases as they occur. Tools such as Debezium (which runs as a set of Kafka Connect source connectors and reads the database transaction log) or other Kafka Connect CDC connectors played a pivotal role in streaming these changes directly into our processing pipeline. Compared with bulk extraction queries, this approach places far less load on source systems and eliminates the large, resource-intensive windows associated with batch extraction, so a change is typically ready for the next stage within milliseconds of being committed. CDC is a fundamental component of any successful Real-Time ETL implementation.
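To make the idea of row-level change events concrete, here is a minimal sketch of a consumer applying them to a keyed replica. The envelope fields (`op`, `before`, `after`) are modeled on the Debezium change event format; the `id` and `status` columns, the sample events, and the in-memory dictionary standing in for a target table are assumptions for illustration, not details from the project.

```python
from typing import Any, Dict, Optional

Row = Dict[str, Any]


def apply_change_event(replica: Dict[Any, Row], event: Dict[str, Any], key: str = "id") -> None:
    """Apply one row-level change event to an in-memory replica keyed by primary key."""
    op = event["op"]
    before: Optional[Row] = event.get("before")
    after: Optional[Row] = event.get("after")

    if op in ("c", "r", "u"):
        # create, snapshot read, or update: upsert the new row image
        if after is None:
            raise ValueError(f"op {op!r} requires an 'after' row image")
        replica[after[key]] = after
    elif op == "d":
        # delete: remove by the key of the old row image (a no-op if already gone)
        if before is None:
            raise ValueError("op 'd' requires a 'before' row image")
        replica.pop(before[key], None)
    else:
        raise ValueError(f"unknown op {op!r}")


# Hypothetical sample events for an 'orders' table.
events = [
    {"op": "c", "before": None, "after": {"id": 1, "status": "new"}},
    {"op": "c", "before": None, "after": {"id": 2, "status": "new"}},
    {"op": "u", "before": {"id": 1, "status": "new"}, "after": {"id": 1, "status": "paid"}},
    {"op": "d", "before": {"id": 2, "status": "new"}, "after": None},
]

replica: Dict[Any, Row] = {}
for change in events:
    apply_change_event(replica, change)

print(replica)  # {1: {'id': 1, 'status': 'paid'}}
```

Because upserts and deletes keyed by primary key are idempotent, replaying the same event after a failure leaves the replica unchanged, which is one reason this event shape suits at-least-once delivery.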

The Power of Event-Driven Architecture and Stream Processing

Once captured by CDC, data changes are published as events to a central messaging system, typically Apache Kafka. Kafka, a distributed streaming platform, serves as the backbone of the Event-Driven Architecture, providing durable, fault-tolerant, high-throughput messaging. From Kafka, dedicated stream processing engines such as Apache Flink or Apache Spark Structured Streaming consume these events. These engines process data continuously (Spark, by default, in small micro-batches), performing transformations, aggregations, and enrichments on streams as they arrive rather than waiting for hours of data to accumulate. This “data in motion” processing paradigm is what enabled the shift from hours to seconds in our Real-Time ETL Case Study.
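The kind of continuous aggregation described above can be illustrated with a tumbling (fixed-size, non-overlapping) window. The following is a plain-Python sketch, not Flink or Spark code: it assumes events arrive in event-time order, whereas real engines handle out-of-order data with watermarks and persist window state for fault tolerance. The event tuples and the 5-second window are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple

Event = Tuple[float, str, float]  # (event_time_seconds, key, amount)


def tumbling_window_sums(
    events: Iterable[Event], window_s: float
) -> Iterator[Tuple[float, Dict[str, float]]]:
    """Yield (window_start, per-key sums) each time a window closes.

    Assumes events arrive in event-time order; windows with no events are skipped.
    """
    current_start = None
    sums: Dict[str, float] = defaultdict(float)
    for ts, key, amount in events:
        start = (ts // window_s) * window_s  # start of the window this event falls in
        if current_start is None:
            current_start = start
        if start != current_start:
            # The event belongs to a later window, so emit the finished one.
            yield current_start, dict(sums)
            sums = defaultdict(float)
            current_start = start
        sums[key] += amount
    if current_start is not None:
        yield current_start, dict(sums)  # flush the last open window


stream = [(0.5, "a", 10.0), (3.0, "b", 5.0), (4.9, "a", 2.5), (5.1, "a", 1.0), (12.0, "b", 4.0)]
results = list(tumbling_window_sums(stream, window_s=5.0))
for window_start, totals in results:
    print(window_start, totals)
# 0.0 {'a': 12.5, 'b': 5.0}
# 5.0 {'a': 1.0}
# 10.0 {'b': 4.0}
```

Each result is emitted as soon as its window closes, so downstream consumers see totals a few seconds after the underlying events instead of after the next scheduled batch.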

Ensuring Data Quality and Governance with Schema Registry

In a real-time environment, maintaining data consistency and quality is paramount. This is where a Schema Registry, a key data governance feature, comes into play by providing schema enforcement. As data flows through the stages of the pipeline, its structure (schema) must stay consistent and be validated. A Schema Registry, often integrated with Kafka, stores and versions the schemas for each topic and rejects schema changes that violate the configured compatibility rules, which keeps incompatible data out of the system. Downstream applications, including those performing real-time inference on streaming data for immediate predictions, can then reliably consume and interpret the data without being broken by unexpected schema changes, avoiding data quality pitfalls that plague less robust systems.
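As an illustration of what schema enforcement checks, the sketch below tests whether a new schema version is backward compatible, meaning a consumer using the new schema can still read data written with the old one. It is a deliberately simplified model, assuming flat records and ignoring type promotions and nested types; it does not call any real Schema Registry API, and the field names are hypothetical.

```python
from typing import Any, Dict, List

Schema = Dict[str, Dict[str, Any]]  # field name -> {"type": ..., optional "default": ...}


def backward_incompatibilities(old: Schema, new: Schema) -> List[str]:
    """List the reasons why `new` cannot read records written with `old`.

    Simplified rules: an added field needs a default, and a field present in
    both versions must keep its type. Removing a field is allowed.
    """
    problems: List[str] = []
    for name, spec in new.items():
        if name not in old:
            if "default" not in spec:
                problems.append(f"added field '{name}' has no default")
        elif old[name]["type"] != spec["type"]:
            problems.append(
                f"field '{name}' changed type {old[name]['type']} -> {spec['type']}"
            )
    return problems


v1: Schema = {"id": {"type": "long"}, "amount": {"type": "double"}}
v2_ok: Schema = {**v1, "currency": {"type": "string", "default": "USD"}}
v2_bad: Schema = {
    "id": {"type": "long"},
    "amount": {"type": "string"},
    "currency": {"type": "string"},
}

print(backward_incompatibilities(v1, v2_ok))   # []
print(backward_incompatibilities(v1, v2_bad))
# ["field 'amount' changed type double -> string", "added field 'currency' has no default"]
```

A registry configured for backward compatibility would accept a change like `v2_ok` and reject one like `v2_bad` at registration time, before any producer can publish data in the new shape.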

Scalable Cloud Infrastructure

The entire solution was deployed on a scalable cloud infrastructure, leveraging services from providers like AWS, Azure, or GCP. This allowed for elastic scaling of compute and storage resources based on data volume and processing demands, ensuring high availability and fault tolerance. Containerization (e.g., Docker) and orchestration (e.g., Kubernetes) played a crucial role in managing the complex ecosystem of microservices and stream processing applications, simplifying deployment and management.

Challenges and Barriers to Adoption in Real-Time ETL

While the benefits of Real-Time ETL are compelling, its adoption comes with its own set of challenges:

- Complexity of Distributed Systems: Building and maintaining real-time pipelines means mastering complex distributed technologies such as Kafka, Flink, and CDC tooling, which requires specialized skill sets.

- Data Consistency and Exactly-Once Processing: Ensuring that each data event is processed exactly once, even in the face of failures, is a significant technical hurdle requiring careful design and implementation of fault-tolerant mechanisms.

- Monitoring and Observability: Troubleshooting issues in a continuous stream of data demands sophisticated monitoring tools and strategies to quickly identify and resolve anomalies or data quality issues. This complexity extends to MLOps, where real-time model monitoring is crucial.

- Data Quality and Validation: Validating data in real-time requires robust rules and mechanisms to catch and handle erroneous data before it propagates downstream, potentially impacting critical operations or AI models.

- Data Drift and Schema Evolution: Real-time systems can surface data drift quickly, but evolving data schemas without breaking downstream consumers remains a constant challenge. Schema enforcement through a Schema Registry helps mitigate it.

- Integration with Legacy Systems: Connecting modern real-time architectures with older, batch-oriented source systems can be complex, often requiring custom connectors and careful data harmonization.
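The exactly-once challenge listed above is often approached by combining at-least-once delivery with an idempotent consumer, so that a replayed event has no additional effect. The following is a minimal sketch of that idea, assuming Kafka-style offsets that only increase within a partition; the class name, topic name, and account data are hypothetical. In a real system the state update and the stored offset would have to be committed atomically, for example in the same database transaction.

```python
from typing import Dict, Tuple


class IdempotentBalanceSink:
    """Applies each (topic, partition, offset) at most once, even if it is redelivered."""

    def __init__(self) -> None:
        self.balances: Dict[str, float] = {}
        # Highest offset already applied for each (topic, partition).
        self._last_offset: Dict[Tuple[str, int], int] = {}

    def apply(self, topic: str, partition: int, offset: int, account: str, delta: float) -> bool:
        """Apply the event and return True, or return False if it was already applied."""
        tp = (topic, partition)
        if offset <= self._last_offset.get(tp, -1):
            return False  # duplicate delivery after a retry or restart
        self.balances[account] = self.balances.get(account, 0.0) + delta
        self._last_offset[tp] = offset
        return True


sink = IdempotentBalanceSink()
deliveries = [
    ("payments", 0, 0, "acct-1", 50.0),
    ("payments", 0, 1, "acct-1", -20.0),
    ("payments", 0, 1, "acct-1", -20.0),  # same offset redelivered after a failure
    ("payments", 0, 2, "acct-2", 5.0),
]
applied = [sink.apply(*d) for d in deliveries]

print(applied)        # [True, True, False, True]
print(sink.balances)  # {'acct-1': 30.0, 'acct-2': 5.0}
```

Tracking one high-water offset per partition keeps the deduplication state small, at the cost of relying on in-order delivery within each partition.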

Business Value and ROI of Real-Time Data

The operational impact of this Real-Time ETL Case Study was dramatic and immediate. Data that previously took hours to become available was now available within seconds. This directly enhanced business agility, allowing new services to be deployed rapidly and significantly improving the user experience across all platforms. The ROI manifested in multiple ways:

- Immediate Decision-Making: Business users and automated systems could react to events as they happened, leading to faster, more informed decisions in areas like fraud detection, algorithmic trading, and dynamic pricing.

- Enhanced Customer Experience: Real-time personalization, targeted offers, and immediate customer support responses became possible, significantly improving satisfaction and engagement.

- Operational Efficiency: Real-time monitoring of systems and processes allowed for proactive identification and resolution of issues, reducing downtime and optimizing resource utilization.

- Faster Insights for AI/ML: The ability to perform real-time inference on streaming data for immediate predictions became a game-changer. It fuels use cases like recommendation engines, anomaly detection, and predictive maintenance, and it gives models fresher, higher-quality input data.

- Reduced Operational Costs: By optimizing data pipelines and moving away from expensive, resource-intensive batch windows, organizations can see a reduction in infrastructure costs and improved resource allocation.

- Enabling Advanced MLOps: Real-time ETL directly feeds into advanced MLOps strategies, ensuring that machine learning models have access to the freshest features. This also enables faster model deployment and continuous integration/continuous delivery (CI/CD) for ML.

- Powering Feature Stores: Real-time data streams are ideal for populating a Feature Store, providing low-latency features for both online inference and offline model training, thereby accelerating the development and deployment of AI/ML applications.

Comparative Insight: Real-Time ETL vs. Traditional Data Architectures

Understanding the transformative power of Real-Time ETL is often best achieved by comparing it to the traditional data architectures it seeks to augment or replace: the Data Lake and the Data Warehouse, often relying on Batch ETL/ELT.

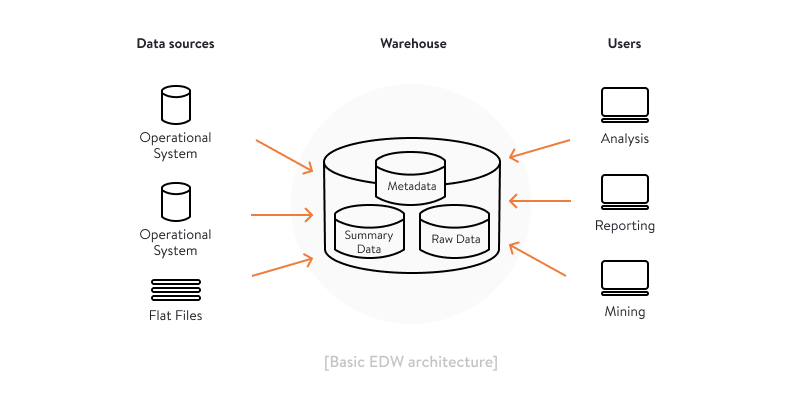

Traditional Data Warehouses and Batch ETL/ELT

Data Warehouses are optimized for structured, historical data analysis, serving business intelligence needs. They typically rely on Batch ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) processes, where data is collected over a period, processed in large chunks, and then loaded into the warehouse.

- Pros: Mature technology, well-understood methodologies, excellent for complex historical queries, aggregate reporting, and structured data analysis. Cost-effective for less time-sensitive insights.

- Cons: Significant data latency (hours to days), not suitable for immediate decision-making, less flexible with unstructured or semi-structured data, and expensive to scale for very large datasets if real-time capabilities are retrofitted.
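The latency of a batch schedule can be estimated with simple arithmetic. The sketch below assumes events arrive uniformly over the batch interval and that each run processes everything that arrived before it started; the hourly schedule and 10-minute job duration are hypothetical figures, not measurements from this case study.

```python
from typing import Tuple


def batch_staleness_seconds(interval_s: float, run_s: float) -> Tuple[float, float]:
    """Return (average, worst-case) delay between an event and its availability.

    An event waits for the next scheduled run (interval / 2 on average, up to a
    full interval in the worst case) and then for the run itself to finish.
    """
    average = interval_s / 2 + run_s
    worst = interval_s + run_s
    return average, worst


# Hypothetical hourly batch whose job takes 10 minutes to complete.
average, worst = batch_staleness_seconds(interval_s=3600, run_s=600)
print(average / 60, worst / 60)  # 40.0 70.0 (minutes)
```

Under these assumptions, shortening the job alone cannot bring latency below half the schedule interval, which is why reaching seconds requires processing events as they arrive rather than tuning the batch.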

Data Lakes and Batch/Micro-Batch Processing

Data Lakes store vast amounts of raw data in its native format, including structured, semi-structured, and unstructured data. They offer flexibility for various analytical workloads, including big data analytics and machine learning. While they can ingest data quickly, processing often still involves batch or micro-batch operations.

- Pros: Highly flexible, stores all types of data, cost-effective storage, ideal for exploratory analytics and data science, and can serve as a source for data warehousing.

- Cons: Can become data swamps without proper governance, still prone to latency issues for immediate insights when relying on batch processing, and requires strong data engineering skills.

The Real-Time ETL Distinction

In contrast, Real-Time ETL, as demonstrated in our case study, fundamentally changes the paradigm. It focuses on processing data streams continuously, providing insights with minimal delay.

- Immediacy: Data is available for consumption seconds after its creation, enabling instantaneous reactions and proactive measures.

- Responsiveness: Supports applications requiring instant feedback loops, such as fraud detection, dynamic pricing, and personalized user experiences.

- Scalability for Velocity: Architectures like Apache Kafka and Flink are built to handle high-velocity data streams, scaling horizontally to accommodate increasing data volumes without compromising latency.

- Enabling Modern AI/ML: Feeds fresh data to AI and machine learning models, supporting online inference and continuously updated Feature Stores.

While traditional systems are vital for historical context and deep analytical dives, real-time ETL is crucial for operational intelligence and immediate action. The modern data landscape increasingly demands a hybrid approach, where real-time streaming pipelines augment and enrich data lakes and warehouses, providing a comprehensive view that combines historical depth with up-to-the-second freshness. Platforms such as Confluent Platform, Databricks Delta Live Tables, and Apache Flink are at the forefront of enabling these capabilities, offering robust options for stream processing.

World2Data Verdict: Embracing the Real-Time Future

The strategic advantages of real-time data extend far beyond the initial project scope, as vividly illustrated by this Real-Time ETL Case Study. This foundational shift is vital for future-proofing data infrastructure against evolving business demands, increasing data volumes, and the relentless pressure for immediate insights. It actively cultivates a deeply data-driven culture, empowering teams to make informed, timely decisions and fostering continuous innovation within the organization. The ability to leverage fresh data as it emerges from various sources truly unlocks new avenues for growth and responsiveness in today’s dynamic digital landscape.

Our verdict at World2Data.com is clear: for any enterprise serious about competitive advantage and operational excellence, investing in Real-Time ETL is no longer optional. Organizations must strategically assess their data latency needs, prioritize mission-critical use cases, and systematically transition towards an event-driven, stream-processing architecture. This involves not only technological investment in solutions that support Change Data Capture (CDC) and robust Stream Processing Platforms but also a commitment to developing the necessary skill sets in data engineering, governance (including a Schema Registry for schema enforcement), and MLOps. The future of data is instantaneous. Organizations that master cutting data latency will be the ones able to run real-time inference on streaming data, advance their AI/ML initiatives, and lead their respective industries.